- Author:

- Robert Ladd

- Subject:

- Literature, Visual Arts, Film and Music Production

- Material Type:

- Activity/Lab, Homework/Assignment, Lecture, Primary Source, Textbook

- Level:

- Community College / Lower Division, College / Upper Division

- Tags:

- License:

- Public Domain Dedication

- Language:

- English

- Media Formats:

- Audio, Text/HTML, Video

Introduction to Cinema: Study Abroad

Overview

This text was enthusiastically adapted from Russell Sharman’s incredible Moving Pictures, specifically to focus on cinema set in Tokyo for the purposes of Study Abroad.

Welcome to Tokyo in Film!

What is Cinema?

Is it the same as a movie or a film?

Does it include digital video, broadcast content, and streaming media?

Is it a highbrow term reserved only for European and art house feature films?

Or is it a catch-all for any time a series of still images run together to produce the illusion of movement, whether in a multi-plex theater or the 5-inch screen of a smartphone?

Technically, the word itself derives from the ancient Greek kinema, meaning movement. Historically, it’s a shortened version of the French cinematographe, an invention of two brothers, Auguste and Louis Lumiere, whose name combined kinema with another Greek root, graphein, meaning to write or record.

The “recording of movement” seems as good a place as any to begin an exploration of the moving image. And cinema seems broad (or vague) enough to capture the essence of the form, whether we use it specifically in reference to that art house film or to refer to the more commonplace production and consumption of movies, TV, streaming series, videos, interactive gaming, VR, AR or whatever new technology mediates our experience of the moving image. Because ultimately, that’s what all of the above have in common: the moving image. Cinema, in that sense, stands at the intersection of art and technology like nothing else. As an art form, it would not exist without the technology required to capture the moving image. But the mere ability to record a moving image would be meaningless without the art required to capture our imagination.

But cinema is much more than the intersection of art and technology. It is also, and maybe more importantly, a powerful medium of communication. Like language itself, cinema is a surrounding and enveloping substance that carries with it what it means to be human in a specific time and place. That is to say, it mediates our experience of the world, helps us make sense of things, and, in doing so, often helps shape the world itself. It’s why we often find ourselves confronted by some extraordinary event and find the only way to describe it is: “It was like a movie.”

In fact, for more than a century, filmmakers and audiences have collaborated on a massive, ongoing, largely unconscious social experiment: the development of a cinematic language, the fundamental and increasingly complex rules for how cinema communicates meaning. There is a syntax, a grammar, to cinema that has developed over time. And these rules, as with any language, are iterative; that is, they form and evolve through repetition, both within and between each generation. As children, we are socialized into ways of seeing through children’s programming, cartoons, and YouTube videos. As adults, we become more sophisticated in our understanding of the rules and able to innovate, re-combine, and become creative with the language. And every generation or so, we are confronted with great leaps forward in technology that re-orient and often advance our understanding of how language works.

And therein lies the critical difference between cinematic language and every other means of communication. The innovations and complexity of modern written languages have taken more than 5,000 years to develop. Multiply that by at least 10 for spoken language.

Cinematic language has taken just a little more than 100 years to come into its own.

In January 1896, those two brothers, Auguste and Louis Lumiere, set up their cinematographe, a combination motion picture camera and projector, at a café in Lyon, France, and presented their short film, L’arrivée d’un train en gare de La Ciotat (Arrival of a Train at La Ciotat Station) to a paying audience. It was a simple, aptly titled film of a train pulling into a station. The static camera positioned near the tracks captured a few would-be passengers milling about as the train arrived, growing larger and larger in the frame until it steamed past and slowed to a stop. There was no editing, just one continuous shot. A mere 50 seconds long…

And it blew the minds of everyone who saw it.

Accounts vary as to the specifics of the audience's reaction. Some claim the moving image of a train hurtling toward the screen struck fear among those in attendance, driving them from their seats in a panic. Others underplay the reaction, noting only that no one had seen anything like it. Which, of course, wasn’t entirely true either. It wasn’t the first motion picture. The Lumiere brothers had projected a series of 10 short films in Paris the year before. An American inventor, Woodville Latham, had developed his own projection system that same year. And Thomas Edison had invented a similar apparatus before that.

But one thing is certain: that early film, as simple as it was, changed how we see the world and ourselves. From the early actualite documentary short films of the Lumieres to the wild, theatrical flights of fancy of Georges Melies, to the epic narrative films of Lois Weber and D. W. Griffith, the new medium slowly but surely developed its own unique cinematic language. Primitive at first, limited in its visual vocabulary, but with unlimited potential. And as filmmakers learned how to use that language to re-create the world around them through moving pictures, we learned right along with them. Soon we were no longer awed (much less terrified) by a two-dimensional image of a train pulling into a station, but we were no less enchanted by the possibilities of the medium with the addition of narrative structure, editing, production design, and (eventually) sound and color cinematography.

Since that January day in Lyon, we have all been active participants in this ongoing development of a cinematic language. The novelty short films of those early pioneers gave way to a global entertainment industry centered on Hollywood and its factory-like production of discrete, 90-minute narrative feature films. The invention of broadcast technology in the first half of the 20th century gave way to the rise of television programming and serialized storytelling. And the internet revolution at the end of the 20th century gave way to the streaming content of the 21st, from binge-worthy series lasting years on end to one-minute videos on social media platforms like Snapchat and TikTok. Each evolution of the form borrowed from and built on what came before, both in terms of how filmmakers tell their stories and how we experience them. And inasmuch as we may be mystified and even amused by the audience’s reaction to that simple depiction of a train pulling into a station back in 1896, imagine how that same audience would respond to the latest Avengers film projected in IMAX 3D.

We’ve certainly come a long, long way.

This book is an exploration of the evolution of cinema: the art and technology of moving pictures. But it is also an introduction to the fundamentals of the form that have remained relatively constant for more than 100 years. Just as the text you are reading right now defies easy categorization (is it a book, an online resource, an open-source text?), modern cinema exists across multiple platforms (is it a movie, a video, theatrical, or streaming?). Yet the fundamentals of communication, the syntax, grammar, and rules of language, written or cinematic, remain relatively constant.

We will begin with an overview of how moving pictures work, literally and figuratively, from the neurological phenomena behind the illusion of movement to the invisible techniques and generally agreed-upon conventions that form the basis of cinematic language.

Then, we’ll take each aspect of how cinema is created in turn: production design, narrative structure, cinematography, editing, sound, and performance. Whether it’s released in a theater as a 2-hour spectacle or streaming online in 5-minute increments, every iteration of cinema includes these elements, and they are each critical in our understanding of film form, how movies do what they do to us, and why we let them.

The second section takes all of this accumulated knowledge of how cinema communicates and applies it to what, exactly, cinema is communicating. That is, we’ll take a long, hard look at the content of cinema, how that has changed over time, and how, for better or worse, it often hasn’t. This section will take seriously the idea that cinema both influences and is influenced by the society in which it is produced. And given the porous borders of the information age, that “society” is increasingly global. Cinema, then, not unlike literature, can be viewed and analyzed as a kind of cultural document, a neutral reflection of society in a moment of time, or it can be viewed as a powerful tool for social change (or for the resistance of change, as the case may be).

This emphasis on content inevitably leads to an exploration of power and representation. Who is on screen? Who is behind the camera? If cinema is as powerful a medium as I contend, it stands to reason that it matters deeply who controls the means of communication.

There is an ancient story about a king who was so smitten by a particular bird's song that he ordered his wisest and most accomplished scientists to identify its source. How could it sing so beautifully? What apparatus lay behind such a sweet sound? So they did the only thing they could think to do: they killed the bird and dissected it to find the source of its song. Of course, by killing the bird, they killed its song.

The analysis of an art form, even one as dominated by technology as cinema, always runs the risk of killing the source of its beauty. By taking it apart, piece by piece, there’s a chance we’ll lose sight of the whole, that ineffable quality that makes art so much more than the sum of its parts. Throughout this text, my hope is that by gaining a deeper understanding of how cinema works, in both form and content, you’ll appreciate its beauty even more.

In other words, I don’t want to kill the bird.

As much as cinema is an ongoing, collaborative social experiment, one in which we are all participants, it also carries with it a certain magic. And as with any good magic show, we all know it’s an illusion. We all know that even the world’s greatest magician can’t really make an object float or saw a person in half (without serious legal implications). It’s all a trick. A sleight of hand that maintains the illusion. But we’ve all agreed to allow ourselves to be fooled. In fact, we’ve often paid good money for the privilege. Cinema is no different. A century of tricks used to fool an audience that’s been in on it from the very beginning. We laugh, cry, or scream at the screen, openly and unapologetically manipulated by the medium. And that’s how we like it.

This text is dedicated to revealing the tricks without ruining the illusion. To look behind the curtain to see that the wizard is one of us. That in fact, we are the wizard (great movie, by the way). Hopefully, by doing so, we will only deepen our appreciation of cinema in all its forms and enjoy the artistry of a well-crafted illusion that much more.

Video Attributions:

‘L’arrivée d’un train en gare de La Ciotat (Arrival of a Train)’ by the Lumière Brothers, uploaded by EcoworldReactor. Standard Vimeo License.

Tokyo in Film

Did you know Tokyo isn’t a city at all? It’s a metropolis comprising 26 different cities, a handful of towns and villages, and 23 central wards. That is not just a remarkable fact; it’s vital to understanding Tokyo. With around 14 million people living across 2,191 sq km, Tokyo has no single mood. Each area has its own disposition, which we will discover as we go from Shinjuku’s grunginess and Shibuya’s effortless chic to the old-fashioned charm of Ikebukuro (hopefully, it won’t be raining this time).

Where else but Tokyo can we order a coffee from a robot or have the checkout machine recognize our items by shape to calculate the bill?

For this reason, the films we analyze will involve Japan and specifically feature Tokyo, and through our exploratory assignments, we will attempt to recreate those scenes. For this class, the films (and anime) we will analyze are:

Film List:

- Adrift in Tokyo, Satoshi Miki

- Akira, Katsuhiro Ôtomo

- Aggretsuko, Rarecho

- Fast and Furious: Tokyo Drift, Justin Lin

- First Love, Takashi Miike

- Godzilla Minus One (2023), Takashi Yamazaki

- Initial D, Andrew Lau, Alan Mak, Ralph Rieckermann

- Jujutsu Kaisen (Shibuya Incident), Shôta Goshozono

- Kill Bill Vol. 1, Quentin Tarantino

- Like Someone in Love, Abbas Kiarostami and Banafsheh Modaressi

- Midnight Diner: Tokyo Stories, Kaoru Kobayashi

- Samurai Champloo, Shinichiro Watanabe

- Shoplifters, Hirokazu Koreeda

- Spirited Away, Hayao Miyazaki

- The Seven Samurai, Akira Kurosawa

- Tokyo Ghoul, Shûhei Morita

- Your Name, Makoto Shinkai

Honors Projects

- Honors Project: A comparative analysis of Ikiru (Akira Kurosawa) and Living, Oliver Hermanus’s 2022 remake of Kurosawa’s iconic film. You will explore themes of life, death, and the search for meaning within two different cultural and temporal settings.

- Honors Project: Environmentalism in Miyazaki’s work, an analysis of Castle in the Sky and Nausicaä of the Valley of the Wind (Hayao Miyazaki). Your analysis will delve into how these films depict human interaction with the environment and the implications of these interactions for both society and nature.

Week One, Module One - How to Watch a Movie

Step One: Evolve an optic nerve that “refreshes” at a rate of about 13 to 30 hertz in a normal active state.[1] That’s 13 to 30 cycles per second. Fortunately, that bit has already been taken care of over the past several million years. You have one of them in your head right now.

Step Two: Project a series of still images captured in sequence at a rate at least twice that of your optic nerve’s ability to respond. Let’s say 24 images, or frames, per second.

Step Three: Don’t talk during the movie. That’s super annoying.

Okay, that last part is optional (though it is super annoying), but here’s the point: Cinema is built on a lie. It is not, in fact, a “motion” picture. It is, at a minimum, 24 still images flying past your retinas every second. Your brain interprets those dozens of photographs per second as movement, but it’s actually just the illusion of movement, a trick of the mind known as beta movement: the neurological phenomenon that interprets two stimuli shown in quick succession as the movement of a single object.

An example of beta movement.

Because all of this happens so fast, faster than our optic nerves and synaptic responses can perceive, the mechanics are invisible. There may be 24 individual photographs flashing before our eyes every second, but all we see is one continuous moving picture. It’s a trick. An illusion.

The same applies to cinematic language. The way cinema communicates is the product of many different tools and techniques, from production design to narrative structure to lighting, camera movement, sound design, performance and editing. But all of these are employed to manipulate the viewer without us ever noticing. In fact, that’s kind of the point. The tools and techniques – the mechanics of the form – are invisible. There may be a thousand different elements flashing before our eyes – a subtle dolly-in here, a rack focus there, a bit of color in the set design that echoes in the wardrobe of the protagonist, a music cue that signals the emotional state of a character, a cut on an action that matches an identical action in the next scene, and on and on and on – but all we see is one continuous moving picture. A trick. An illusion.

In this chapter, we’ll explore how cinematic language works, a bit like breaking down the grammar and rules of spoken language, and then we’ll take a look at how to watch cinema with these “rules” in mind. We may not be able to speed up the refresh rate of our optic nerve to catch each of those still images, but we can train our interpretive skills to see how filmmakers use the various tools and techniques at their disposal.

CINEMATIC LANGUAGE

As with any language, we can break cinematic language down to its most fundamental elements. Before grammar and syntax can shape meaning by arranging words or phrases in a particular order, the words themselves must be built up from letters, characters, or symbols. The basic building blocks. In cinema, those basic building blocks are shots. A shot is one continuous capture of a span of action by a motion picture camera. It could last minutes (or even hours) or could last less than a second. Basically, a shot is everything that happens within the frame of the camera – that is, the visible border of the captured image – from the moment the director calls “Action!” to the moment she calls “Cut!”

These discrete shots rarely mean much in isolation. They are full of potential and may be quite interesting to look at on their own, but cinema is built up from the juxtaposition of these shots, dozens or hundreds of them, arranged in a particular order – a cinematic syntax – that renders a story with a collectively discernible meaning. We have a word for that, too: Editing. Editing arranges shots into patterns that make up scenes, sequences, and acts to tell a story, just like other forms of language communicate through words, sentences, and paragraphs.

We have developed a cinematic language from these basic building blocks, a set of rules and conventions by which cinema communicates meaning to the viewer. And by “we,” I mean all of us, filmmakers and audiences alike, from the earliest motion picture to the latest VR experience. Cinematic language – just like any other language – is an organic, constantly evolving, shared form of communication. It is an iterative process that is refined each time a filmmaker builds a story through a discrete number of shots and each time an audience responds to that iteration, accepting or rejecting but always engaging in the process. Together, we have developed a visual lexicon. A lexicon describes the shared set of meaningful units in any language. Think of it as the list of all available words and parts of words in a language we carry around in our heads. A visual lexicon is likewise the shared set of meaningful units in our collective cinematic language: images, angles, transitions, and camera moves that we all understand to mean something when employed in a motion picture.

But here’s the trick: We’re not supposed to notice any of it. The visual lexicon that underpins our cinematic language is invisible, or at least, it is meant to recede into the background of our comprehension. Cinema can’t communicate without it, but if we pay too much attention to it, we’ll miss what it all means. A nifty little paradox. But not so strange or unfamiliar when you think about it. It’s precisely the same with any other language. As you read these characters, words, sentences, and paragraphs, you are not stopping to parse each unit of meaning, analyze the syntax, or double-check the sentence structure. All those rules fade to the background of your own fluency, and the meaning communicated becomes clear (or at least, I sure hope it does). And that goes double for spoken language. We speak and comprehend fluently in grammar and syntax, never pausing over the rules that have become second nature, invisible, and unnoticed.

So, what are some of those meaningful units of our cinematic language? Perhaps not surprisingly, a lot of them are based on how we experience the world in our everyday lives. Camera placement, for example, can subtly orient our perspective on a character or situation. Place the camera mere inches from a character’s face – known as a close-up – and we’ll feel more intimately connected to their experience than if the camera were further away, as in a medium shot or long shot. Place the camera below the eye-line of a character, pointing up – known as a low-angle shot – and that character will feel dominant, powerful, and worthy of respect. We are literally looking up to them. Place the camera at eye level, and we feel like equals. Let the camera hover above a character or situation – known as a high-angle shot – and we feel like gods, looking down on everyone and everything. Each choice affects how we see and interpret the shot, scene, and story.

We can say the same about transitions from shot to shot. Think of them as conjunctions in grammar, words meant to connect ideas seamlessly. The more obvious examples, like fade-ins, fade-outs, or long dissolves, are still drawn from our experience. Think of a slow fade-out, where the screen drifts into blackness, as an echo of our experience of falling asleep, drifting out of consciousness. In fact, fade-outs are most often used in cinema to indicate the close of an act or segment of a story, much like the end of a long day. Dissolves are not unlike how we remember events from our own experience, one moment bleeding into and overlapping with another.

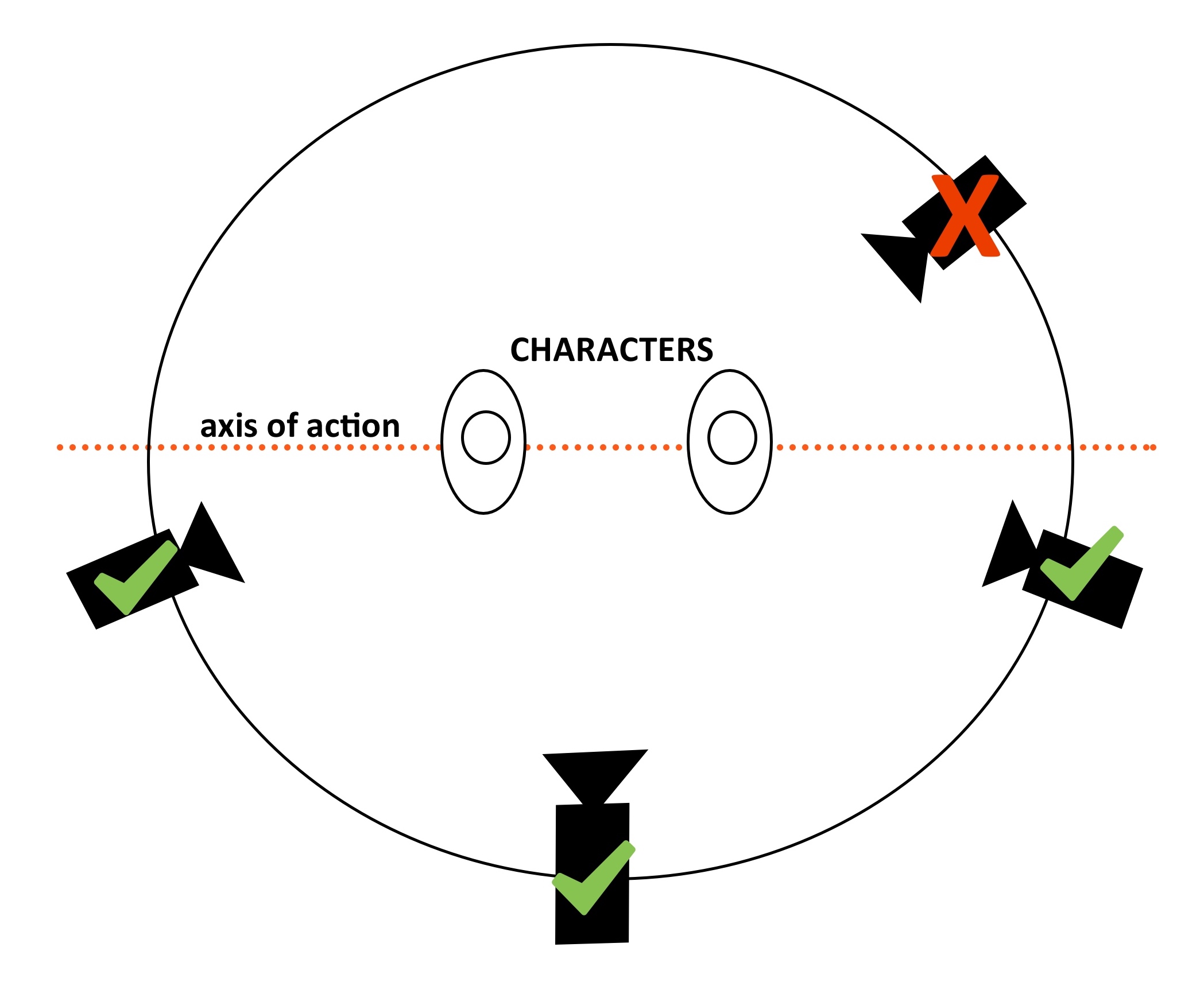

But perhaps the most common and least noticed transition, by design, is a hard cut that bridges some physical action on screen. It’s called cutting on action, and it’s a critical part of our visual lexicon, enabling filmmakers to join shots, often from radically different angles and positions, while remaining largely invisible to the viewer. The concept is simple: whenever a filmmaker wants to cut from one shot to the next for a new angle on a scene, she ends the first shot in the middle of some on-screen action, opening a door or setting down a glass, then begins the next shot in the middle of that same action. The viewer’s eye is drawn to the action on screen and not the cut itself, rendering the transition relatively seamless, if not invisible to the viewer.

Camera placement and transitions, along with camera movement, lighting style, color palette, and a host of other elements, make up the visual lexicon of cinematic language, all of which we will explore in the chapters to follow. In the hands of a gifted filmmaker, these subtle adjustments work together to create a coherent whole that communicates effectively (and invisibly). In the hands of not-so-gifted filmmakers, these choices can feel haphazard, unmotivated, or perhaps worse, “showy” – all style and no substance – creating a dissonant, ineffective cinematic experience. But even then, the techniques themselves remain largely invisible. We are left with the feeling that it was a “bad” movie, even if we can’t quite explain why. (Though by the end of this book, you should be able to explain why in great detail, probably to the great annoyance of your date. You’re welcome.)

EXPLICIT AND IMPLICIT MEANING

Once we have a grasp on these small, meaningful units of our collective cinematic language, we can begin to analyze how they work together to communicate bigger, more complex ideas.

Take the work of Lynne Ramsay, for example. As a director, Ramsay builds a cinematic experience by paying attention to the details, the little things we might otherwise never notice.

Cinema, like literature, builds up meaning through the creative combination of these smaller units, but, also like literature, the whole is – or should be – much more than the sum of its parts. For example, Moby Dick is a novel that explores the nature of obsession, the futility of revenge, and humanity’s essential conflict with nature. But in the more than 200,000 words that make up that book, few, if any, of them communicate those ideas directly. In fact, we can distinguish between explicit meaning, that is, the obvious, directly expressed meaning of a work of art, be it a novel, painting or film, and implicit meaning, the deeper, essential meaning, suggested but not necessarily directly expressed by any one element. Moby Dick is explicitly about a man trying to catch a whale, but as any literature professor will tell you, it was never really about the whale.

That comparison between cinema and literature is not accidental. Both start with the same fundamental element, that is, a story. As we will explore in a later chapter, cinema begins with the written word in the form of a screenplay before a single frame is photographed. And like any literary form, screenplays are built around a narrative structure. Yes, that’s a fancy way of saying story, but it’s more than simply a plot or an explicit sequence of events. A well-conceived narrative structure provides a foundation for that deeper, implicit meaning a filmmaker, or really any storyteller, will explore through their work.

Another way to think about that deeper, implicit meaning is as a theme, an idea that unifies every element of the work, gives it coherence, and communicates what the work is really about. And really great cinema manages to suggest and express that theme through every shot, scene, and sequence. Every camera angle and camera move, every line of dialogue and sound effect, and every music cue and editing transition will underscore, emphasize, and point to that theme without ever needing to spell it out or make it explicit. An essential part of analyzing cinema is identifying that thematic intent and tracing its presence throughout.

Unless there is no thematic intent or the filmmaker did not take the time to make it a unifying idea. Then, you may have a “bad” movie on your hands. But at least you’re well on your way to understanding why!

So far, this discussion of explicit and implicit meaning, theme, and narrative structure points to a deep kinship between cinema and literature. But cinema has far more tools and techniques at its disposal to communicate meaning, implicit or otherwise. Sound, performance, and visual composition all point to deep ties with music, theater, and painting or photography as well. And while each of those art forms employs its own strategies for communicating explicit and implicit meaning, cinema draws on all of them at once in a complex, multi-layered system.

Let’s take sound, for example. As you know from the brief history of cinema in the last chapter, cinema existed long before the introduction of synchronized sound in 1927, but since then, sound has become an equal partner with the moving image in the communication of meaning. Sound can shape how we perceive an image, just as an image can change how we perceive a sound. It’s a relationship we call co-expressive.

This is perhaps most obvious in the use of music. A non-diegetic musical score, that is, music that only the audience can hear as it exists outside the world of the characters, can drive us toward an action-packed climax or sweep us up in a romantic moment. Or it can contradict what we see on the screen, creating a sense of unease at an otherwise happy family gathering or making us laugh during a moment of excruciating violence. In fact, this powerful combination of moving images and music pre-dates synchronized sound. Even some of the earliest silent films were shipped to theaters with a musical score meant to be played during projection.

But as powerful as music can be, sound in cinema is much more than just music. Sound design includes music but also dialog, sound effects, and ambient sound to create a rich sonic context for what we see on the screen. From the crunch of leaves underfoot to the steady hum of city traffic to the subtle crackle of a cigarette burning, what we hear – and what we don’t hear – can put us in the scene with the characters in a way that images alone could never do, and as a result, add immeasurably to the effective communication of both explicit and implicit meaning.

We can say the same about the relationship between cinema and theater. Both use a carefully planned mise-en-scene – the overall look of the production, including set design, costume, and make-up – to evoke a sense of place and visual continuity. Both employ the talents of well-trained actors to embody characters and enact the narrative structure laid out in the script.

Let’s focus on acting for a moment. Theater, like cinema, relies on actors’ performances to communicate not only the subtleties of human behavior but also the interplay of explicit and implicit meaning. How an actor interprets a line of dialog can make all the difference in how a performance shifts our perspective, draws us in or pushes us away. And nothing ruins a cinematic or theatrical experience like “bad” acting. But what do we really mean by that? Often it means the performance wasn’t connected to the thematic intent of the story, the unifying idea that holds it all together. We’ll even use words like, “The actors seemed like they were in a different movie from everyone else.” That could be because the director didn’t clarify a theme in the first place, or perhaps they didn’t shape or direct an actor’s performance toward one. It could also simply be poor casting.

All of the above applies to both cinema and theater, but cinema has one distinct advantage: the intimacy and flexibility of the camera. Unlike theater, where your experience of a performance is dictated by how far you are from the stage, the filmmaker has complete control over your point of view. She can pull you in close, allowing you to observe every tiny detail of a character’s expression, or she can push you out further than the cheapest seats in a theater, showing you a vast and potentially limitless context. And perhaps most importantly, cinema can move between these points of view in the blink of an eye, manipulating space and time in a way live theater never can. All of those choices affect how we engage the thematic intent of the story and how we connect to what that particular cinematic experience really means. And because of that, in cinema, whether we realize it or not, we identify most closely with the camera. No matter how much we feel for our hero up on the screen, we view it all through the lens of the camera.

And that central importance of the camera is why the most prominent tool cinema has at its disposal in communicating meaning is visual composition. Despite the above emphasis on the importance of sound, cinema is still described as a visual medium. Even the title of this chapter is How to Watch a Movie. It is not so surprising when you think about the lineage of cinema and its origin in the fixed images of the camera obscura, daguerreotypes, and series photography. All of which owe a debt to painting as an art form and a form of communication. In fact, the cinematic concept of framing has a clear connection to the literal frame, or physical border, of paintings. One of the most powerful tools filmmakers – and photographers and painters – have for communicating explicit and implicit meaning is simply what they place inside the frame and what they leave out.

Another word for this is composition, the arrangement of people, objects, and settings within the frame of an image. And if you’ve ever pulled out your phone to snap a selfie or maybe a photo of your meal to post on social media (I know, I’m old, but really? Why is that a thing?), you are intimately aware of the power of composition. Adjusting your phone this way and that to get just the right angle, to include just the right bits of your outfit, maybe edge Greg out of the frame just in case things don’t work out (sorry, Greg). The point is that composing a shot is a powerful way to tell stories about ourselves daily. Filmmakers, the really good ones, are masters of this technique. Once you understand this principle, you can start to analyze how a filmmaker uses composition to serve their underlying thematic intent to help tell their story.

One of the most important ways a filmmaker uses composition to tell their story is through repetition, a pattern of recurring images that echoes a similar framing and connects to a central idea. And like the relationship between shots and editing – where individual shots only really make sense once they are juxtaposed with others – a well-composed image may be exciting or even beautiful on its own, but it only starts to make sense in relation to the implicit meaning or theme of the overall work when we see it as part of a pattern.

Take, for example, Stanley Kubrick and his use of one-point perspective:

Or how Barry Jenkins uses color in Moonlight (2016):

Or how Sofia Coppola tends to trap her protagonists in gilded cages:

These recurring images are part of that largely invisible cinematic language. We aren’t necessarily supposed to notice them, but we are meant to feel their effects. And it’s not just visual patterns that can serve the filmmaker’s purposes. Recurring patterns, or motifs, can emerge in the sound design, narrative structure, mise-en-scène, dialog, and music.

But one distinction should be made between how we think about composition and patterns in cinema and how we think about those concepts in photography or painting. While all of the above employ framing to achieve their effects, photography and painting are limited to what the artist fixed in that frame at the moment of creation. Only cinema adds an entirely new and distinct dimension to the composition: movement. That includes movement within the frame – as actors and objects move freely, recomposing themselves within the fixed frame of a shot – as well as the movement of the frame itself, as the filmmaker moves the camera in the setting and around those same actors and objects. This increases the compositional possibilities exponentially for cinema, allowing filmmakers to layer in even more patterns that serve the story and help us connect to their thematic intent.

FORM, CONTENT, AND THE POWER OF CINEMA

The more attuned we become to the various tools and techniques filmmakers use to communicate their ideas, the better we can analyze their effectiveness. We’ll be able to see what was once invisible. It's a kind of magic trick in itself. But as I tried to make clear from the beginning, my goal is not to focus solely on form, to dissect cinema into its constituent parts and lose sight of its overall power. Like any art form, cinema is more than the sum of its parts. And it should be clear already that form and content go hand in hand. Pure form, all technique and no substance, is meaningless. And pure content, all story and no style, is didactic and, frankly, boring. How the story is told is as important as what the story is about.

However, just as we can analyze technique, the formal properties of cinema, to better understand how a story is communicated, we can also analyze content, that is, what stories are communicating, to better understand how they fit into the wider cultural context. Again, cinema, like literature, can represent valuable cultural documents, reflecting our ideas, values, and morals back to us as filmmakers and audiences.

We’ll spend more time on content analysis – the idea of cinema as a cultural document – in the last couple of chapters of this book, but I want to take a moment to highlight one aspect of that analysis in advance. I’ve discussed at length the idea of cinematic language and the fact that, as a form of communication, it is largely invisible or subconscious. Interestingly, the same can be said for cinematic content. Or, more specifically, the cultural norms that shape cinematic content. Cinema is an art form like any other, shaped by humans bound up in a given historical and cultural context. No matter how enlightened and advanced those humans may be, that historical and cultural context is so vast and complex that they cannot possibly grasp every aspect of how it shapes their view of the world. Inevitably, those cultural blind spots, the unexamined norms and values that make us who we are, filter into the cinematic stories we tell and how we tell them.

The result is a kind of cultural feedback loop where cinema both influences and is influenced by the context in which it is created.

Because of this, on the whole, cinema is inherently conservative. That is to say, as a form of communication, it is more effective at conserving or re-affirming a particular view of the world than at challenging or changing it. This is due in part to the economic reality that cinema, historically a very expensive medium, must appeal to the masses to survive. As such, it tends to avoid offending our collective sensibilities and instead makes us feel better about who we already think we are. It is also partly due to the social reality that the people who historically had access to the capital required to produce that very expensive medium tend to all look alike. That is, primarily white and mostly men. And when the same kind of people with the same kind of experiences tend to have the most consistent access to the medium, we tend to get the same kinds of stories, reproducing the same, often unexamined, norms, values, and ideas.

But that doesn’t mean cinema can’t challenge the status quo or at least reflect real, systemic change in the wider culture already underway. That’s what makes the study of cinema, particularly in regard to content, so endlessly fascinating. Whether it’s tracking the way cinema reflects the dominant cultural norms of a given period or the way it sometimes rides the leading edge of change in those same norms, cinema is a window – or frame (see what I did there) – through which we can observe the mechanics of cultural production, the inner workings of how meaning is produced, shared, and sometimes broken down over time.

EVERYONE’S A CRITIC

One final word on how to watch a movie before we move on to the specific tools and techniques employed by filmmakers. Inasmuch as cinema is a cultural phenomenon, a mass medium with a crucial role in the production of meaning, it’s also an art form meant to entertain. And while I think one can assess the difference between a “good” movie and a “bad” movie in terms of its effectiveness, that has little to do with whether one likes it or not.

In other words, you don’t have to necessarily like a movie to analyze its use of a unifying theme or how the filmmaker employs mise-en-scène, narrative structure, cinematography, sound, and editing to communicate that theme effectively. Citizen Kane (Orson Welles, 1941), arguably one of the greatest films ever made, is an incredibly effective motion picture. But it’s not my favorite. Between you and me, I don’t even really like it all that much. But I still show it to my students every semester. This means I’ve seen it dozens and dozens of times, and it never ceases to astonish me with its formal technique and innovative use of cinematic language.

Fortunately, the opposite is also true: You can really like a movie that isn’t necessarily all that good. Maybe there’s no unifying theme, the cinematography is all style and no substance (or no style and no substance), the narrative structure is made out of toothpicks, and the acting is equally thin and wooden. (That’s right, Twilight, I’m looking at you.) Who cares? You like it. You’ve watched it more often than I’ve seen Citizen Kane, and you still like it.

That’s great. Embrace it because taste in cinema is subjective. But analysis of cinema doesn’t have to be. You can analyze anything. Even things you don’t like.

Video and Image Attributions:

An example of beta movement. Public Domain Image.

Lynne Ramsay – The Poetry of Details by Tony Zhou. Standard Vimeo License.

Kubrick // One-Point Perspective by kogonada. Standard Vimeo License.

MOONLIGHT // BLUE by Russell Leigh Sharman. Standard Vimeo License.

Have You Noticed This About Sofia Coppola’s Films? by Fandor. Standard YouTube License.

- Okay, it's actually a lot more complicated than that. Optic nerves don't "refresh" in the way we normally think of that term. In fact, the optic nerve is part of a complex system that includes your eyeballs, retinas, and brain, each of which performs at varying degrees of efficiency and changes as we age. But the numbers here are a good rule of thumb for thinking about how quickly we can process images. For more on how the optic nerve works, check this out: https://wolfcrow.com/notes-by-dr-optoglass-motion-and-the-frame-rate-of-the-human-eye/

Final Project: Short Film Schedule

Final Project

Purpose:

Work in a self-selected team of three students to create a short film (plus titles and credits).

You may negotiate a larger team if you have a clear production plan. Each team will create a 2- to 8-minute short film. Even though each group works on its own film, we also need to work together to support one another's "productions."

Here are six themes to use as a jumping-off point. Please also consider that these are natural extensions of our film analysis and journal assignments (especially the explorative assignments)!

- Animation and Movement

- Slice of Life

- Choreography and Story-Telling

- Recreating a Scene

- Pastiche/Emulating Cinematography (recreating an iconic shot)

- Deconstructing Narrative

Please also read this article by Mark Billen at FX. He is the creator of HitFilm, the free film editor I encourage everyone to use for this project. He gives great suggestions on brainstorming, coming up with ideas, and deciding what to film.

Task:

Please remember that this is not film school. We will study many aspects of film and its creation, but we expect insight into and appreciation of this discipline, not mastery. We don't expect great acting, fancy VFX, complex sets, or the use of high-quality equipment.

Choose your film's subject to minimize the impact of limited production resources. As with all assignments this semester, we will primarily evaluate each student's and group's process, intermediate materials, and technical appreciation of cinematography.

The project will develop in the following stages:

Checkpoints:

- Module One:

- Group Selection

- Brainstorming ideas.

- Greenlight: convince one instructor to be your producer.

- Module Two:

- Explorative exercises.

- Automatic writing without editing the whole idea.

- Class presentation of Final Project proposals.

- Preproduction: your producer approves your script, shot list, or other preproduction.

- Module Three:

- Storyboard: bring 3 video clips/photographs/writings related to your ideas.

- Animatic: your producer approves a rough edit from preproduction materials and found footage.

- Module Four:

- Raw footage: your producer approves your footage.

- Module Five:

- Rough cut: your producer signs off on the first complete edit.

- Post-production and editing are complete; the final film is due at 11:59 p.m. May 26th.

- Self-evaluations (up to one page of text) are due May 28th.

The checkpoints are pass/fail; however, you must pass each one to continue. For the first, the group must sell the instructor on its concept and on the practicality of its production plan. That instructor will then agree to be your producer for the remainder of the project. Don't structure this as a single pitch. Instead, please work with your group and the instructor to set reasonable expectations and a plan to achieve them.

You will then meet with your producer regularly during class, office hours, and appointments. You must receive approval for each checkpoint by the specified deadline.

The purpose of these deadlines is not to have you submit something at that moment but to create a process that encourages continued contact throughout production.

We expect you'll receive signoff well before the weekly deadlines in the natural course of working with your producer.

Educational Goals

- Practice and then demonstrate the technical skills you acquired during the semester.

- Iterate on a production, refining your work and learning from peers, mistakes, and serendipity.

- Create a physical artifact for your portfolio.

- Experience the complete production cycle and the thrill of creation.

Pick up a camera. Shoot something. No matter how small, cheesy, or whether your friends and your sister star in it. Put your name on it as director. Now you're a director. Everything after that, you're just negotiating your budget and your fee.

Requirements

To ensure everyone has sufficient support, we expect you to volunteer to act or crew for another project for a 2-hour session. (It is okay if nobody takes you up on this, and you don't have to act if you're not comfortable with that.)

- Preproduction materials:

- The script if there is dialogue.

- Storyboard

- Shot list

- Schedule for shoots, reserved equipment and spaces, actors, VFX, editing, and screenings

- The animatic is an outline of your film as a 540p MP4 constructed from storyboard panels, still frames, and/or existing footage (the usual copyright and plagiarism restrictions do not apply since this will not be public)

- Animatic (optional!)

- An actual video that approximates the pace, audio, and shots of your film without actually requiring real footage

- Examples:

- Use this Premiere project as your working draft, which continually improves as footage comes in during production.

- Footage

- Dailies plus B-roll coverage

- Multiple takes of all of the key shots

- About 10x as much footage as your expected running time, to provide options during editing

- Rough cut:

- A coarsely edited collection of your footage as a 540p or 360p MP4. Audio can be a placeholder from the animatic, and there is no expectation of VFX or post. You can have up to two still shots from the storyboard or found footage if you haven't completed production.

- Final film:

- A 2- to 8-minute final film (plus titles and credits) in 720p MP4 format

- Must include titles, credits, and copyright information.

- For extra credit, include a "behind the scenes" reel showing some elements of your process, also in 720p MP4 format and less than 150 MB. For example, show how you created certain tracking shots, your sets and takes, or VFX breakdowns.

- Your film may be stop motion, a documentary, a sequence of freeze-frame live-action stills, live-action, or animation.

- Your film must demonstrate knowledge of topics covered in class through:

- Camera footage you filmed (i.e., it can't be 100% animation, found footage, etc.)

- Intentional lighting

- Editing in the continuity/IMR style

- Audio is optional but highly recommended

Planning and scheduling your work can be hard. Here is a sample schedule for your group:

Week 1: Module One and Module Two

- Day 1-2: Group Selection and Brainstorming Ideas

- Day 3-4: Pitch your concept to the instructor and get a producer on board

- Day 5-6: Explorative exercises and automatic writing

- Day 7: Class presentation of Final Project proposals

Week 2: Module Two and Module Three

- Day 8-9: Preproduction: Producer approves script, shot list, or other preproduction elements

- Day 10-11: Storyboard creation (3 video clips/photographs/writings related to ideas)

- Day 12-13: Animatic: Producer approves a rough edit from preproduction materials and found footage

Week 3: Module Four and Module Five

- Day 14-15: Raw footage production: Producer approves your footage

- Day 16-17: Rough cut editing: Producer signs off on the first complete edit

- Day 18-20: Finalize post-production and editing, complete "behind the scenes" reel for extra credit

- Day 21: Final film, including titles, credits, and copyright information, is due at 11:59 p.m. on May 26th

Post-Completion Day

- Day 22: Self-evaluations are due by May 28th (up to one page of text)

You may submit your response in written, oral, or video format, uploaded to Google Classroom.

Iconic Films Shot in Tokyo

Filming a Scene:

For our film project, we will be recreating scenes from the films we analyze in this course, and our excursions are meant to facilitate this process. That being said, we cannot analyze every film that has taken place in Tokyo. This map lists some of those other locations; if you would like to recreate a scene from a film or series on this list for your final project, please submit a proposal with your group.

Other Iconic Films Shot in Tokyo:

- Tokyo Story (1953):

- Director: Yasujirō Ozu.

- Explores the generational divide in post-war Tokyo.

- Lost in Translation (2003):

- Director: Sofia Coppola.

- Portrays the bond between two lonely strangers against Tokyo’s neon-lit cityscape.

- The Fast and the Furious: Tokyo Drift (2006):

- Tokyo’s adrenaline-fueled underground car-racing culture takes center stage.

- Tokyo! (2008):

- Anthology of three short films by different directors presenting a surreal depiction of Tokyo.

- Like Someone in Love (2012):

- Director: Abbas Kiarostami.

- Delicately explores interpersonal relationships in Tokyo.

- The Wolverine (2013):

- Showcases Tokyo’s modernity, from skyscrapers to efficient bullet trains.

- Your Name (2016):

- Director: Makoto Shinkai.

- Animated film painting a vivid picture of Tokyo through two protagonists.

- Tokyo Ghoul (2017):

- Live-action adaptation of the popular manga series, presenting a darker, supernatural side of Tokyo.

- Shoplifters (2018):

- Director: Hirokazu Kore-eda.

- Offers a poignant exploration of Tokyo’s marginalized communities.

- Weathering With You (2019):

- Director: Makoto Shinkai.

- Animated film presenting Tokyo’s unpredictable weather patterns as a central narrative element.

Tokyo on the Small Screen: TV Shows Set in Tokyo:

- Tokyo Trial (2016-2017):

- Historical drama focusing on the international military tribunal held in Tokyo.

- Midnight Diner: Tokyo Stories (2016-present):

- Anthology series with heartwarming tales centered around a late-night diner in Tokyo.

- The Naked Director (2019-present):

- Biographical drama set in the 1980s, exploring the rise of adult video director Toru Muranishi.

- Alice in Borderland (2020-present):

- Thrilling series based on a manga, presenting a dystopian version of Tokyo.

- Tokyo Revengers (2021-present):

- Action-packed anime series about a man who travels back in time to save his girlfriend and change his regretful past.

Tokyo for the Young: Animated Films Set in Tokyo:

- Pom Poko (1994):

- Studio Ghibli film depicting raccoon dogs (tanuki) fighting against urban development in Tokyo.

- Digimon Adventure: Our War Game! (2000):

- Popular anime film featuring Tokyo landmarks during a city-wide internet outage.

- Tokyo Godfathers (2003):

- Tells the story of three homeless people finding a baby on Christmas Eve in Tokyo.

- Tamagotchi: The Movie (2007):

- Brings the popular virtual pet franchise to film, with a story set in Tokyo and on Tamagotchi Planet.

- Summer Wars (2009):

- Presents a virtual world threatening to destroy Tokyo unless a young math genius can stop it.

Explorative Assignment, Slice-of-Life

Option One: Slice-of-Life Mini-Narrative (Fictionalizing Reality) Group Assignment

Purpose: To capture and then recreate a slice-of-life scene, offering students a chance to explore the nuances of everyday interactions and how they can be translated into a film narrative.

Preproduction Materials:

- Unscripted Dialogue Capture: Students film an unscripted, natural conversation, focusing on capturing genuine interactions. The goal is an unscripted review of a film viewed together at a local cinema, emulating the slice-of-life style of animation and filmmaking.

- Film Review: With your chosen group, visit a local cinema and view a film together with a local audience. I recommend TOHO Cinemas Shinjuku for its iconic Godzilla statue.

Turn-in Methods:

- Footage: Raw footage of the unique, unscripted scene wherein the "actors" review the film they watched at the local cinema and their experience of viewing a film with a Japanese audience.

Option Two: Recreating a Scene (Jujutsu Kaisen: Shibuya Sky)

Purpose: Work as a team on your group project to use the aforementioned sites from the excursions in this module to create your final project. You may work to complete any of the following portions of the final assignment:

Turn-in Methods:

- Storyboard: A storyboard that visually maps out each shot, tailored to the locations available.

- Shot List and Schedule: This is a comprehensive shot list and schedule that organizes shoots, equipment, and actor availability.

- Footage: Raw footage of the recreated scenes, demonstrating the application of cinematography techniques.

Please note that each of these items must eventually be completed for your final project.

Task:

Complete the aforementioned journal following one of the prompts. You need not answer every question, only those related and relevant to your overall point.

You may submit your response in written, oral, or video format, uploaded to Google Classroom.

Week One, Module Two - Mise-en-Scène

Allow me to introduce a word destined to impress your friends and family when you trot it out at the next cocktail party: Mise-en-Scène. And even if you don’t frequent erudite cocktail parties, and who does these days (a shame), it’s still a handy term to have around. It’s French (obviously), and it literally means “putting on stage.”

Why French? Sometimes, we like to feel fancy, and let’s face it: to an American, French is fancy.

But the idea is simple. Borrowed from theater, it refers to every element in the frame that contributes to the overall look of a film. And I mean everything: set design, costume, hair, make-up, color scheme, framing, composition, lighting… Basically, if you can see it, it contributes to the mise-en-scène.

I could have started with any number of different tools or techniques filmmakers use to create a cinematic experience. The narrative might seem a more obvious starting point. Cinema can’t exist without a story; chronologically speaking, it all starts with the screenplay. Or I could have led off with cinematography. After all, we often think of cinema as a visual medium. But mise-en-scène captures much more than any one tool or technique in isolation. It’s more an aesthetic context in which everything else takes place, the unifying look, or even feel, of a film or series.

And this is probably as good a time as any to discuss the role of a director in cinema. There’s a school of thought out there, known as the auteur theory, that claims the director is the “author” of a work of cinema, not unlike the author of a novel, and that they alone are ultimately responsible for what we see on the screen. In practice, cinema requires dozens, if not hundreds, of professionals dedicated to bringing a story to life. The screenwriter writes the script, the production designer designs the sets, the cinematographer photographs the scenes, the sound crew captures the sound, the editor connects the shots together, and each of them has whole teams of experts working below them to make it all work on screen. But if there’s any hope of that final product having a unified aesthetic and a coherent, underlying theme that ties it all together, it needs a singular vision to give it direction. That, really, is the job of a director: to ensure everyone is moving in the same direction, making the same work of art. And they do that not so much by managing people – they have an assistant director and producers for that – as by managing mise-en-scène, shaping the overall look and feel of the final product. While mise-en-scène has many moving parts and many different professionals in charge of shaping those individual parts into something coherent, it’s the one element of cinema that is most clearly the responsibility of the director.

This talent for shaping mise-en-scène is one reason we can readily identify the work of great directors. Think about the films of Alfred Hitchcock, Agnès Varda, Wes Anderson, Yasujirō Ozu, Claire Denis, or Steven Spielberg (and if some of those names are unfamiliar, seek them out!). If we know their work at all, most of us could pick out one of their films after just a few minutes, even if we had never seen it before. This is not just because of some signature flourish or idiosyncratic visual habit (though that’s often part of it) but because their films have a certain look to them, a certain aesthetic that saturates the screen.

Take the films of Claire Denis, for example:

Denis’s films generate an enveloping atmosphere that you can almost taste and feel, and all of that is part of her consistent (and brilliant) use of mise-en-scène.

Or how about the films of Wes Anderson:

Anderson’s films consistently use symmetrical compositions, smooth, precise tracking shots, and slow motion, but it’s the overall effect, the mise-en-scène, that makes the impression (check out more breakdowns of Wes Anderson’s style here and here).

Because mise-en-scène refers to this “overall look,” it can feel rather broad (and even vague) as a concept. So, let’s break it down into four elements of design: setting, character, lighting, and composition. We’ll tackle each one in turn.

SETTING

Nothing we see on the screen in the cinema is there by accident. Everything is carefully planned, arranged, and even fabricated – sometimes using computer-generated imagery (CGI) – to serve the story and create a unified aesthetic.

That goes double for the setting.

If mise-en-scène is the overall aesthetic context for a film or series, the setting is the literal context, the space actors and objects inhabit for every scene. And this is much more than simply the location. It’s how that location, whether it’s an existing space occupied for filming or one purpose-built on a soundstage, is designed to serve the vision of the director.

As we saw in Chapter One, in the early days of motion pictures, when cinematic language was still in its infancy, not much thought was given to the design of a setting (or editing or performance, and no one was even thinking about sound yet). But it didn’t take long for filmmakers to realize they could employ the same tricks of set design they used in theater for the cinema.

One of the pioneers of this was the French filmmaker Georges Méliès. Take, for example, his 1903 film The Kingdom of the Fairies:

Méliès’s use of elaborate sets, along with equally elaborate costumes, hairstyles, make-up, and even the hand-tinting of the film itself, all contribute to the fantastical look and feel of the film. He brought a similar design sensibility to all of his films, including the ground-breaking 1902 film A Trip to the Moon.

A decade or so later, this attention to detail in the design elements of cinema had become commonplace. Indeed, many of the more well-known early silent films are famous for their sophisticated mise-en-scène, particularly in regard to setting, often above all else.

Check out this scene again from D. W. Griffith’s Intolerance (1916):

The set design alone is staggering. Built in the middle of Los Angeles, it took four years to dismantle.

Or consider the opening of Fritz Lang’s Metropolis (1927):

The film draws us into a mechanized, dystopian future – one of the first science-fiction films in history – and its success lies in its careful design of the setting to serve that narrative purpose.

Once filmmakers realized the importance of setting as an element of design and what it contributed to the overall look of their films, it wasn’t long before a position was created to oversee it all: the production designer. The production designer is the point person for the overall aesthetic design of a film or series. Working closely with the director, they help translate the aesthetic vision for the project – its mise-en-scène – to the various design departments, including set design, art department, costume, hair, and make-up. But arguably, their most important job is to make sure the setting matches that aesthetic vision, specifically through set design and set decoration.

Set design is precisely what it sounds like: the design and construction of the setting for any given scene in a film or series. Plenty of productions use existing locations and don’t necessarily have to build much of anything (though that doesn’t mean there isn’t an element of design involved, as we shall see). But when production requires complete control over the filming environment, production designers, along with conceptual artists, construction engineers, and sometimes a whole army of artisans, must create each setting, or set, from the ground up. And since these sets have to hold up under the strain of a large film crew working in and around them for days and even weeks, they require as much planning and careful construction as any real-life home, building, or interplanetary city out there.

Take a look at the incredible detail involved in bringing the set design to life for Thor: Ragnarok (Taika Waititi, 2017):

D. W. Griffith can take a seat.

These sets may be built on-site to blend in with the surrounding landscape, or they may be built within a large, windowless, sound-proof building called a soundstage. A soundstage provides the control over the environment production designers need to give the director precisely the look and feel she wants from a particular scene. On a big enough soundstage, a production designer can fabricate interiors and exteriors, sections of buildings, and even small villages. And since it is all shielded from the outside, the production has complete control over lighting and sound. It can be dawn or twilight for 12 hours a day. And a shot will never be interrupted by an airplane flying loudly overhead.

The use of soundstages is particularly helpful when producing serialized content. A TV or streaming series, especially one that uses the same few locations over and over – the family home, the mobster’s headquarters, the king’s palace – needs access to those sets for months at a time, year after year, for as long as we keep watching. Of all those series you binge-watch on the weekends (or during the week when you should be reading this), almost all of them depend upon sets built from the ground up and housed on soundstages for years on end.

Of course, sometimes, the setting of a particular production requires more than a production designer can deliver with the materials available (or the time or the budget, as the case may be). In that case, the setting must be augmented with computer-generated imagery (CGI). The most common way this is implemented is through the use of green screen technology. The idea is fairly simple. The set is dressed with a backdrop of bright green (or blue; the actual color isn’t terribly important), and the scene is filmed as usual. Then, in post-production, the software picks out that particular color and replaces it with imagery either filmed elsewhere or generated by digital artists, a process called keying. For this to work, no other object or article of clothing can match that shade of green, or it will be replaced as well. And with ever-improving technology, the sky is no longer the limit to what designers can offer up for the screen.
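To make keying a little more concrete, here is a toy sketch in Python. It assumes each frame is a small grid of (red, green, blue) values from 0 to 255 and uses a hypothetical rule of our own: a pixel counts as "green screen" if its green value exceeds both its red and blue values by a fixed threshold. Real compositing software builds soft-edged mattes, handles motion blur, and suppresses green light spilling onto actors, so treat this only as an illustration of the basic idea, not as how any particular program works.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255
Image = List[List[Pixel]]     # a frame as rows of pixels


def is_key_color(pixel: Pixel, threshold: int = 60) -> bool:
    """Return True if the pixel looks like green-screen green.

    Hypothetical rule for illustration: green must exceed both red and
    blue by at least `threshold`. Real keyers use far richer color models.
    """
    r, g, b = pixel
    return g - max(r, b) >= threshold


def chroma_key(foreground: Image, background: Image, threshold: int = 60) -> Image:
    """Replace every key-colored pixel in `foreground` with the matching
    pixel from `background` (the footage or CGI plate)."""
    if len(foreground) != len(background) or any(
        len(f_row) != len(b_row) for f_row, b_row in zip(foreground, background)
    ):
        raise ValueError("foreground and background must be the same size")

    composite: Image = []
    for f_row, b_row in zip(foreground, background):
        composite.append([
            b_px if is_key_color(f_px, threshold) else f_px
            for f_px, b_px in zip(f_row, b_row)
        ])
    return composite


if __name__ == "__main__":
    GREEN = (20, 220, 40)    # the bright backdrop
    SKIN = (224, 172, 140)   # an actor's face
    SHIRT = (40, 160, 60)    # a green shirt, too close to the backdrop
    SKY = (90, 140, 230)     # the replacement imagery

    frame = [[GREEN, SKIN], [SHIRT, GREEN]]
    plate = [[SKY, SKY], [SKY, SKY]]

    print(chroma_key(frame, plate))
    # [[(90, 140, 230), (224, 172, 140)], [(90, 140, 230), (90, 140, 230)]]
```

Notice that the green shirt is replaced along with the backdrop, which is exactly why nothing else on set can match the shade of the screen.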

Whether the production designer is building the set from the ground up on a soundstage or simply using an existing location, the setting is still a kind of blank canvas until that space is filled with all of the essential details that really tell the story. That’s where set design meets set decoration. Still under the supervision of the production designer, set decorating falls to any number of skilled artisans in the art department. They design everything from the color on the walls, to the texture of the drapes, to the style of the furniture, to every ashtray, book, and family photo that might show up on screen. And that goes for existing locations as well. A film production using someone’s actual home for a scene will likely replace all of the furniture, repaint the walls, and fill it with their own odds and ends that help tell the cinematic story. And then, hopefully, put it all back the way they found it when they’re done.

Take a look at the ways the production designer for the Netflix series The Crown converts existing locations into a Buckingham Palace throne room or the Queen’s private apartment:

This is where storytelling through the physical environment – the setting – can really come alive. Every object placed just so on a set adds to the mise-en-scène and helps tell the story. Those objects could be in the background providing context – framed photos, a trophy, an antique clock – or they could be picked up and handled by characters in a scene – a glass of whisky, a pack of cigarettes, a loaded gun. We even have a name for those objects: props, short for “properties,” another term borrowed from theater. And the person in charge of keeping track of them all is the prop master.

As should be clear by now, setting is one of the most important design elements in creating a consistent mise-en-scène. Not simply the location – a suburban home, a high-rise office building, the spaceport at Mos Eisley – but all of the details that fill that location, make it come alive as a lived-in space, and most importantly, help tell the cinematic story. One way we can begin to see the filmmaker's intention, to understand how she is subtly (and maybe not so subtly) manipulating our emotions through cinematic language, is to pay attention to these details. The very details we’re not supposed to notice.

CHARACTER

Character is a term that will come up a lot. We use it to describe how a screenwriter invents believable characters that inhabit a narrative structure. And we use it to describe how an actor inhabits that character in their performance. But we can also examine how the physical design of a character, through costume, make-up, and hairstyle, not only contributes to the mise-en-scène but also helps fully realize the work of both screenwriters and actors.

Typically, when we think of “character design,” we might immediately think of fantastic creatures dreamed up in a special effects studio. They might be animated through CGI, or fabricated from latex and worn by an actor. And all of that is a reasonable way to think about the concept of character design. But in some ways, those creatures are just an extreme version of the everyday work of costume designers and hair and make-up professionals, which is how I would like to frame character design here.

Just as a screenwriter must create – or design – a character on the page and an actor must create – or design – their approach to inhabiting that character, the wardrobe, hair, and make-up departments must also design how that character is going to look on screen. This design element is, of course, more obvious the less familiar the world of the character might be. The clothing, hair, and make-up of characters inhabiting worlds in a distant time period or even more distant galaxy will inevitably draw our attention. (Though even there, the intention is to add to the mise-en-scène without distracting us from the story.) But even when the context is closer to home, a story set in our time, in our culture, maybe even our own hometown, every element of the clothes, the hair, and the make-up is carefully chosen, sometimes made from scratch, to fit that context and those particular characters. In other words, each character’s look is carefully designed to support the overall mise-en-scène and help tell the story.

Take costume design, for example. We often think of “costume” as another word for disguise or playing a character. But the last thing a filmmaker wants is for the audience to think of their characters as actors in disguise or playing dress-up. They want us to see the characters. Period. The wardrobe should fit the time, place, and, most importantly, the character. Once that is established, the designer can layer in more subtle hints about the larger context, the underlying theme, by adding a touch of color that serves as a visual motif or introducing some alteration in the wardrobe that dramatizes some narrative shift:

What is important to note is that costume design in film is not about fashion or what looks “good” on an actor. It’s about what looks right on a character, what fits the setting and the film's overall look.

These same principles can be applied to hair and make-up. As with costume design, it’s easy to think of the more extreme examples of hair and make-up design, especially when the setting calls for something historic, other-worldly, or… horrifying. The special effects make-up for the gory bits of your favorite horror films can sometimes take center stage. But these elements are often not meant to draw our attention at all. To achieve that, perhaps ironically, hair and make-up require even more attention from their respective designers. This is due in part to the technical requirements of filming. Bright lights can reveal every distracting blemish or poorly applied foundation, and as camera and image technology improves, the techniques required to hide the fact that actors are even wearing make-up must be continually refined. But it is also because hair and make-up are incredibly personal and intimately connected to the character:

And while all of this is tremendously important for the audience, it is even more important for the actor playing the character. We’ll discuss the various ways an actor approaches their performance in detail in another chapter, but for now, it’s important to note how much actors rely upon the design of their character through costume, hair, and make-up. Putting on the wardrobe, seeing themselves in another era, a different hairstyle, looking older or younger, helps the actor literally and metaphorically step into the life of someone else and do so believably enough that we no longer see the actor, only the character in the story.

LIGHTING

The first two elements of design in mise-en-scène – setting and character – fall squarely under the supervision of the production designer and the art department. The next two – lighting and composition – fall to the cinematographer and the camera department but are just as important as elements of design in the overall look of the film. We will take a deeper dive into each in a later chapter on cinematography, but for now, let’s take a quick look at how these elements fit into mise-en-scène.

As should be obvious, you can’t have cinema without light. Light exposes the image and, of course, allows us to see it. But it’s the creative use of light, or lighting, that makes it an element of design. A cinematographer can illuminate a given scene with practical light (light from lamps and other fixtures that are part of the set design), set lights (off-camera fixtures designed specifically to light a film set), or even available light (light from the sun or whatever permanent fixtures are at a given location). But in each case, the cinematographer is not simply flipping a light switch; they are shaping that light, making it work for the scene and the story as a whole. They do this by controlling the direction and intensity of each light source. A key light, for example, is the primary light that illuminates a subject. A fill light fills in the shadows a strong key light might create. And a backlight helps separate the subject from the background. It’s the consistent use of a particular lighting design that makes it a powerful part of mise-en-scène.

Two basic approaches to lighting style can illustrate the point. Low-key lighting refers to a lighting design that uses a strong key light with little or no fill light, so the shadows the key light casts are left largely unfilled. (The “low” refers to the predominance of dark tones in the image, not to a weak key light.) The result? A high-contrast lighting design that makes consistent use of harsh shadows. Another word for this is chiaroscuro lighting (this time, we’re stealing a fancy word from Italian). Think of old detective movies with the private eye stalking around the dark streets of San Francisco.

The Big Combo, 1955, Joseph H. Lewis, dir.

Classic low-key lighting design.

High-key lighting refers to a lighting design where plenty of fill light balances the key light, resulting in a low-contrast, even flat or washed-out look to the image. Think of art-house dramas set in stark, snowy landscapes or even big Hollywood comedies that try to avoid “interesting” shadows that might distract us from the joke.

In either case, the cinematographer, working closely with the director and production designer, is using light as an element of design, contributing to the overall mise-en-scène.

COMPOSITION

The fourth and final design element in considering mise-en-scène – one that I touched on in the last chapter and will receive much more attention in the chapter on cinematography – is composition. As discussed in Chapter Two, composition refers to the arrangement of people, objects, and settings within the frame of an image. And because we are talking about moving pictures, there are really two important components of composition: framing, which even photographers must master, and movement. In the case of cinematic composition, movement refers to movement within the frame as well as movement of the frame as the cinematographer moves the camera through the scene. All of which are critical aspects of how we experience mise-en-scène.

Like lighting, composition falls under the responsibility of the cinematographer. While there are many technical and artistic considerations when it comes to framing and movement, cinematographers are also keenly aware of the design element of composition. In fact, they often describe at least part of their job as designing a shot. Part of this process involves arranging people, objects, and settings in the frame to achieve a sense of balance and proportion, often by dividing the frame into thirds horizontally and vertically and placing important elements along those lines or where they intersect. We call this the rule of thirds, and it’s fairly common in photography. In fact, take out your phone right now, open the camera app, and you’re likely to see a faint grid across the screen. That’s there to help you balance the composition of your selfie according to the rule of thirds. Another important part of the process of designing a shot is the choreography involved in moving the camera through the scene, whether on wheels, on a crane, or strapped to a camera operator.
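The rule of thirds is simple enough to compute. The minimal Python sketch below finds the two vertical and two horizontal grid lines for a frame, the four points where they cross, and which of those points sits closest to a subject. It assumes pixel coordinates measured from the top-left corner of the frame, and the function names and the 1920 × 1080 example frame are illustrative choices, not part of any camera app's actual software.

```python
from typing import List, Tuple

Point = Tuple[float, float]

def thirds_grid(width: float, height: float) -> Tuple[List[float], List[float]]:
    """Return x positions of the two vertical lines and y positions of the two horizontal lines."""
    xs = [width / 3, 2 * width / 3]
    ys = [height / 3, 2 * height / 3]
    return xs, ys

def intersections(width: float, height: float) -> List[Point]:
    """The four points where the thirds lines cross (top row first, left to right)."""
    xs, ys = thirds_grid(width, height)
    return [(x, y) for y in ys for x in xs]

def nearest_intersection(subject: Point, width: float, height: float) -> Point:
    """Pick the crossing point closest to the subject (straight-line distance)."""
    sx, sy = subject
    return min(intersections(width, height),
               key=lambda p: (p[0] - sx) ** 2 + (p[1] - sy) ** 2)

if __name__ == "__main__":
    print(thirds_grid(1920, 1080))                       # ([640.0, 1280.0], [360.0, 720.0])
    print(nearest_intersection((900, 400), 1920, 1080))  # (640.0, 360.0)
```

A subject's eyes at (900, 400) in a 1920 × 1080 frame sit closest to the upper-left crossing, so nudging the framing to put them there would follow the rule.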

Again, we’ll spend more time on this subject in a later chapter, but take a look at how Japanese filmmaker Akira Kurosawa approaches the composition of movement in designing his shots:

Or how Andrea Arnold uses framing and composition to communicate isolation, captivity, or a deep connection to the earth:

A thoughtfully composed frame does more than create a pleasing image. It can isolate characters, focus our attention, and draw us into the story without us ever really noticing the technique itself.

Unless we know to look for it.

CINEMATIC STYLE

Taken together, setting, character, lighting, and composition make up the key elements of design in creating an effective and coherent mise-en-scène. As discussed earlier, it’s one of the ways we can pick out the work of great filmmakers. A consistent mise-en-scène becomes a kind of signature style of a filmmaker.

But it can also mark the signature style of a particular genre or type of cinema. Take film noir, for example. Remember those detective movies I mentioned earlier? They are part of a whole trend in filmmaking that began in the 1940s with titles like The Maltese Falcon (John Huston, 1941), Double Indemnity (Billy Wilder, 1944), and The Big Sleep (Howard Hawks, 1946). These films and many more are part of a style of filmmaking that includes gritty, urban settings; tough, no-nonsense characters; low-key lighting; and off-balance compositions. Sometimes they feature a private detective on a case, but not always. Usually they were filmed in black and white, but not always. In fact, film noir – literally “black film” in French, usually translated as “dark film” (what is with all the French?!) – has been historically difficult to define because the specific elements can vary so widely. However, one easy way to identify a film as part of that tradition is through its mise-en-scène. Mise-en-scène isn’t about any one element; it’s that overall look, the whole, that is greater than the sum of its parts.

And that can extend to a whole national trend in cinema as well. Because cinema is so profoundly connected to a particular cultural context, part of the give-and-take in the cultural production of meaning, it should come as no surprise that there are specific periods in a given place and time where cinema can take on a kind of national style. In those periods, cinema artists in the same place and time are all speaking the same cinematic language, and the result is a unified, identifiable style, which is another way of saying a consistent mise-en-scène.

One example of this can be found in the films produced in Japan.

According to the Center for Japanese Studies, the Japanese cinematic style is a set of cinematographic techniques commonly found in Japanese filmmaking of all eras, such as a long ASL (average shot length), static or slow camera movement, emotions expressed through natural phenomena, and, to a lesser extent, deep focus shots, flat lighting, and shots empty of reference (that is, shots lingering on details that don’t directly connect to the narrative). The Japanese Cinema Archives terms this Japanese Cinimalism.
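Average shot length is plain arithmetic: divide the total running time of a film (or a scene) by the number of shots in it. A long ASL means the camera holds on each shot for a long time before cutting. Here is a minimal Python sketch; the function names are illustrative, and the shot durations and film totals in the usage lines are invented numbers, not measurements of any real film.

```python
from typing import Sequence

def average_shot_length(shot_durations: Sequence[float]) -> float:
    """ASL from individual shot durations (in seconds): total running time / number of shots."""
    if not shot_durations:
        raise ValueError("need at least one shot")
    return sum(shot_durations) / len(shot_durations)

def asl_from_totals(running_time_seconds: float, shot_count: int) -> float:
    """ASL when only the overall running time and the shot count are known."""
    if shot_count <= 0:
        raise ValueError("shot_count must be positive")
    return running_time_seconds / shot_count

if __name__ == "__main__":
    scene = [12.0, 8.5, 30.0, 4.5]           # hypothetical shot lengths in seconds
    print(average_shot_length(scene))        # 13.75
    print(asl_from_totals(120 * 60, 1200))   # 6.0 (a hypothetical two-hour film with 1,200 shots)
```

The same two-hour running time cut into half as many shots would double the ASL to 12 seconds.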

There is perhaps no better representative of Japanese cinema than the aforementioned Akira Kurosawa. For a taste, here is a video of thirty-five scenes from Ran, Kurosawa’s iconic adaptation of Shakespeare’s King Lear and one of his “color” period films.

However, in modern America, Japan is perhaps more famous for an entirely different medium: animation. It may seem obvious, but animation is a distinct medium from traditional live-action cinema.