Fundamentals of Laboratory Management

Overview

Fundamentals of Laboratory Management is designed to serve as an introductory guide to laboratory, quality, and regulatory systems in laboratories for biotechnicians.

Introduction to Lab & Quality Management System

A laboratory, or lab, is a place where experiments are carried out to enhance scientific knowledge or to translate acquired knowledge into benefits for the welfare of mankind[1].

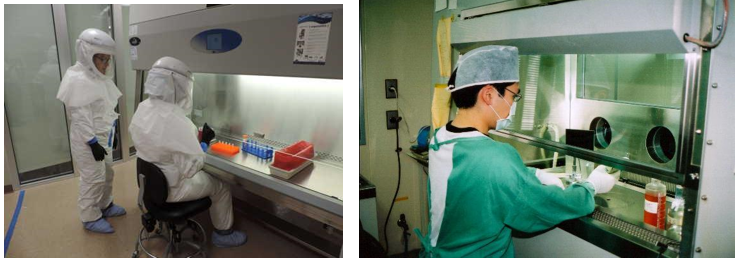

This chapter is designed to serve as an introductory guide to laboratory and quality systems for biotechnicians. Biotechnicians are personnel who work in laboratories and help scientists and researchers on various projects. Their role in the lab may include set-up, maintenance, preparing reagents, operating equipment, assisting in experiments, and recording and analyzing data. It is important for biotechnicians to understand how a laboratory functions, what the different types of labs are, and how a quality system helps maintain consistency in the laboratory and provides a framework for quality. Quality has to be built into the processes and protocols in the labs to generate accurate and precise results. Labs can be divided into three categories based on the type of research being conducted: basic research, translational research, and clinical research.

Basic (pure or fundamental) research is driven by a scientist’s curiosity to find the answer to a scientific question. Basic research aims to formulate theories that explain research findings and enhance the fundamental understanding of a scientific area. For example, a lab working on a basic science question may be determining the genetic code of a newly found species of bacteria or studying specific proteins related to the life cycle of the SARS-CoV-2 virus that causes coronavirus disease (COVID).

Translational research implements a ‘bench-to-bedside’ approach. It aims to translate basic scientific discoveries and research results into medical diagnosis and treatment practices for patients. Translational research applies fundamental knowledge (the majority of which is gained through the basic sciences) to practical applications such as developing diagnostic methods or candidate molecules that could serve as therapeutic drugs for diseases. For example, the development of COVID vaccines is based on an in-depth understanding of the biology of the virus.

Clinical research involves the development and evaluation of the efficacy and safety of new treatments, medications, and diagnostic techniques in patients. The research may enroll volunteers or patients to evaluate or test new medications or interventions. For example, the vaccine produced by Pfizer to prevent COVID was tested in more than 40,000 volunteers before being deemed safe for use in the general public. Clinical research may focus on medications, medical devices, or new therapeutic approaches (treatment research); preventing a disorder or disease from developing or returning (prevention research); developing new or better identification techniques (diagnostic research); detecting diseases or disorders (screening research); or predicting disorders by identifying the genes and biomarkers responsible for certain diseases (genetic studies). (www.fda.gov)

1.1 Types of Labs

Research labs can be found in universities or private companies. In general, there are 4 different types of laboratories.

- Research labs in universities generally focus on addressing a question or a hypothesis related to basic science or translational research.

- Research labs in companies address aspects related to commercializing scientific discoveries and processes for making products such as drugs or pharmaceuticals and improving services such as diagnostics.

- Core labs support the research of others by providing specific services, for example a specific type of assay or an area of expertise. An example of a core lab is a transgenic mouse core lab, which provides support services to generate and facilitate the use of mouse models for various diseases.

- Clinical labs are service-based entities involved in analyzing clinical samples. The majority of work in these labs supports patient care and clinical trials. For example, a clinical lab may analyze patient samples to assess the effectiveness of the antibiotics prescribed to those patients.

These labs are described in detail in the next section.

Research Labs in Universities: In a large university, there can be different schools focusing on areas such as medicine, nursing, dentistry, law, etc. For example, the University of Maryland at Baltimore is a nationally recognized public research institution. It has 7 schools, one of which is the School of Medicine. The School of Medicine has 25 departments, such as biochemistry and molecular biology, family medicine, and neurosurgery. It also has 10 Research Centers, such as the Center for Integrative Medicine and the Center for Vaccine Development and Global Health. It houses 2 Institutes that work on genome sciences and human virology. These departments, centers, and institutes may have more than 100 labs working on different scientific areas related to basic, translational, or clinical research. Biotechnicians or laboratory technicians are needed in every lab to assist in multiple projects.

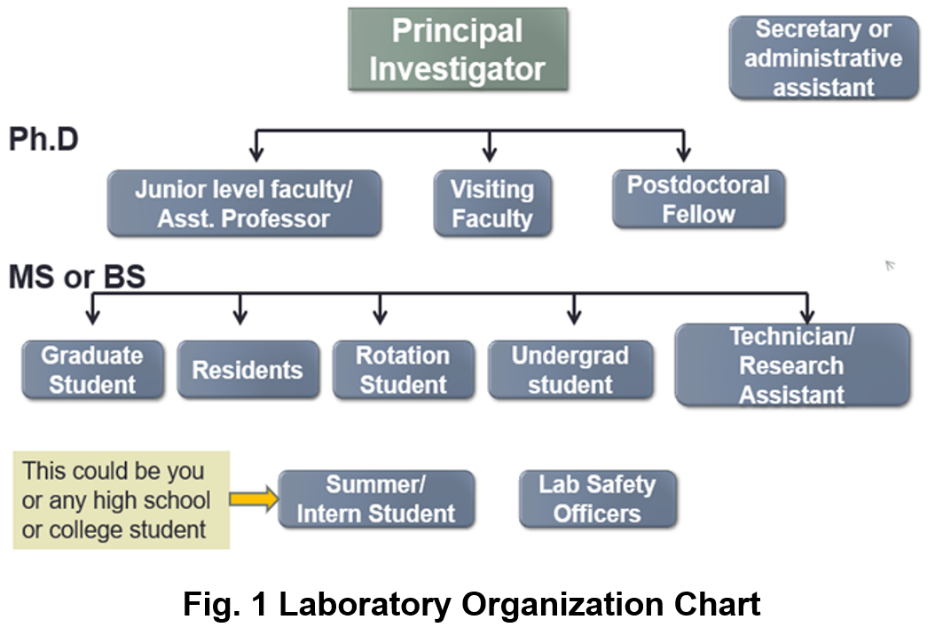

The labs in universities or academia are usually structured. A lab is usually headed by an individual called the “Principal Investigator” (PI). A PI may have a PhD and/or MD, and decides not only the type of research conducted but also the specific research problems addressed. For example, a PI who has a degree in biology may be working on bacterial genomics and evolution or on the development of new vaccination strategies. The PI has to apply for grants from time to time to obtain funding for their research, as well as for the research staff in their lab, from appropriate government agencies (such as the National Institutes of Health[1]), foundations (such as the Bill and Melinda Gates Foundation[2] and the Burroughs Wellcome Fund[3]), or private companies. A big lab may have primary research staff that includes assistant professors, visiting faculty, and postdoctoral fellows. These lab personnel typically have PhDs or MDs and are semi-independent. They are capable of handling independent projects within the lab, although they may report to the PI.

Graduate students are engaged in research on their PhD project(s). The lab can also have medical residents, undergraduate students, or research technicians. Many labs also accept summer students or interns for a brief period to give them research experience. Lab personnel can have varying educational backgrounds, such as a high school diploma, college certificate, associate degree, bachelor’s degree, or higher.

Research Labs in Companies: Biotech companies provide products and/or services utilizing biotechnology. The products can vary from food supplements to medications. Cetus, the first biotech company, was established in 1971 by Nobel Laureate Donald Glaser and others[1]. Cetus primarily developed microbial processes for producing chemical feedstocks such as propylene oxide and antibiotic intermediates. Another major biotechnology company, Genentech, was founded in 1976 by Dr. Herbert W. Boyer and Robert A. Swanson. It was also the first biotech company backed by funding to explore the commercial potential of genetic engineering for drug discovery[2]. Genentech’s first major accomplishment was the production of human somatostatin in 1977, the first successful expression of a human gene in bacteria. Genentech also made the first synthetic human insulin in bacteria in 1978. Before this, insulin was derived from the pancreases of cows and pigs; two tons of pig parts were needed to extract just eight ounces of insulin. Thus, the large-scale synthetic production of insulin helped meet demand. Genentech has been a part of Roche since 2009. Today there are thousands of biotech companies in the US that produce drugs, pharmaceuticals, and other products for human health. Table 1 shows the top 10 Biopharma Clusters[3] and Biotech Companies[4] in the USA.

Table 1: Top 10 Biopharma Clusters and Biotech Companies in the USA

Top 10 BioPharma Clusters | Top 10 Biotech Companies |

Maryland, Washington DC, and Virginia comprise the BioHealth Capital Region (BHCR). The region has 1,800 life sciences companies, over 70 federal labs, and world-class academic and research institutions[5]. BHCR is considered the fourth largest Biopharma cluster in the nation. Hence there is a great need for a workforce at various levels that can help these companies and labs thrive.

A biotechnician, or laboratory/manufacturing technician, must have the knowledge and skills to assist with and perform biological experiments and processes in the biotech or pharmaceutical setting they work in. Their work often involves making buffers, solutions, and media. They must always be able to follow directions accurately. To perform any procedure in a biotech company, technicians are trained to follow Standard Operating Procedures (SOPs), which means they must always follow the exact same steps to complete a procedure. They must be thoroughly knowledgeable about practices and procedures that prevent contamination by pathogens (aseptic techniques) and take great care to keep the work area free of contamination. They should be able to follow Good Laboratory Practices (the regulations that help laboratories operate). Technicians should also be able to perform experiments, analyze data, produce graphs, and maintain appropriate laboratory notebooks or records as required.

Core Labs: A core lab supports biological, clinical, and animal research by providing cost-effective laboratory testing or analysis. Core labs typically have state-of-the-art technology that is not easily affordable for every lab, so they provide the service and expertise to users in universities, research institutes, and commercial laboratories. Core facilities might specialize in technologies such as DNA (deoxyribonucleic acid) sequencing, imaging, bioinformatics, cell/tissue culture and flow cytometry, lab animal services, biorepository, pathology/histology, pharmaceutical and drug discovery, and more. The focus of a core lab is to provide highly specialized services so that researchers do not have to become experts in every area. Technicians working in core laboratories should have the basic skills, as well as the aptitude and intent to learn the particular skills of the core lab they want to work in. They might also need to work with different people every day and should have good communication skills.

Some examples of core labs are as follows:

- Bioinformatics: Help researchers understand large and/or complex data sets using computer analysis and statistics.

- Flow Cytometry: Flow cytometry is a method in which researchers sort cells into groups by labeling them with different colored fluorescent labels. Personnel specialize in the use of flow cytometry equipment and analysis software.

- Lab Animal Services: Personnel in this area care for animals being used for research; monitor for proper care and treatment; maintain approved protocols and ensure appropriate use of animals per protocols.

- Mass Spectrometry: Provides a wide variety of chemical analyses of organic and biological samples using mass spectrometry techniques.

- Nuclear Magnetic Resonance (NMR): NMR is a series of techniques to determine chemical structures. Researchers in this lab specialize in the use of the equipment and software related to NMR.

- Nucleic Acid and Protein Research: Scientists in these labs are experts in techniques related to isolating and analyzing nucleic acids (DNA, RNA) and proteins.

- Pathology: Personnel in this lab prepare, stain and examine tissues microscopically, including using various types of microscopes, web‐based data applications, and 3‐D imaging.

Clinical Labs: A medical or clinical laboratory is a place where tests are done on clinical specimens to obtain information about the health of a patient as it pertains to the diagnosis, treatment, and prevention of disease. Research in clinical labs deals primarily with clinical samples. Clinical laboratory staff are responsible for conducting studies to examine the safety and efficacy of new drugs in humans. This job involves performing routine analysis of patient samples and maintaining and reviewing documents to ensure that they comply with protocols, regulatory requirements, and standard operating procedures (SOPs). Here are some examples of clinical laboratories and the role of lab personnel:

- Blood bank: Personnel in this lab specialize in delivery and appropriate treatment with blood and blood products.

- Microbiology: Personnel in this lab analyze samples for pathogens such as bacteria and yeast.

- Immunogenetics: Personnel in this lab perform tests associated with HLA‐typing, a kind of genetic test to increase successful outcomes following transplants and for diagnosis of diseases.

- Molecular Genetics: Personnel in this lab perform assays that help with diagnosis and treatment of genetic disorders, such as clotting disorders and cancer predisposition syndromes.

- Stem Cell Lab: Personnel in this lab prepare progenitor/stem cell populations for transplantation, process and store donor samples, and perform assays to enrich sub‐populations of cells within the sample prior to transplant.

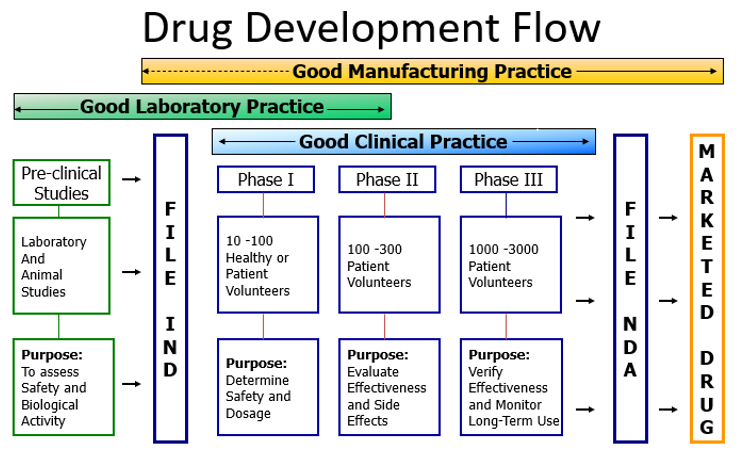

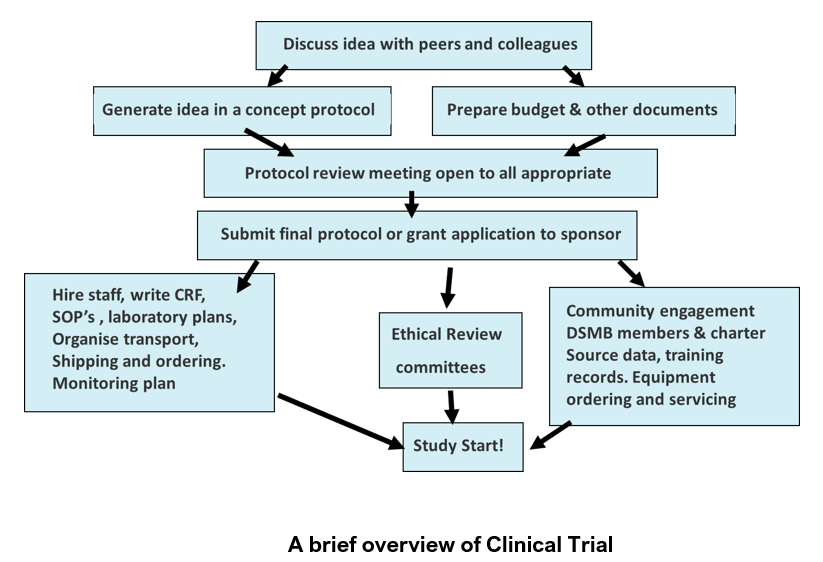

Clinical trials are experiments that determine the outcomes of a new treatment, such as a vaccine, drug, dietary supplement, or medical device, in human participants. Patient research in clinical labs is divided into 4 phases[6].

- Phase I trials: These are often conducted in a small number (20-100) of healthy volunteers or people with the disease/condition. They involve first-in-human testing to evaluate safety and tolerability and to determine an appropriate dosage. About 70% of the drugs move to the next phase.

- Phase II trials: The intervention is given to a larger group (100-300) of people with the disease/condition to evaluate effectiveness and safety. About 33% of the drugs move to the next phase.

- Phase III trials: The intervention is given to large groups (up to thousands) to confirm effectiveness, monitor side effects, compare it to other treatments, and collect information that will allow it to be used safely. About 25-30% of the drugs move to the next phase.

- Phase IV trials: Thousands of volunteers may participate in these post-marketing studies, which gather additional information on the safety and efficacy of the drug, including risks, benefits, and optimal use of the intervention.
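The pass rates quoted for Phases I to III can be combined to estimate how many drugs that enter Phase I make it through Phase III. The following is a minimal sketch, assuming the three rates are independent and can simply be multiplied (a simplification); the names `PHASE_PASS_RATES` and `cumulative_pass_rate` are hypothetical and used only for illustration.

```python
# Approximate pass rates quoted in the phase descriptions, as (low, high) bounds.
# Phases I and II have a single quoted value, so both bounds are the same.
PHASE_PASS_RATES = {
    "Phase I": (0.70, 0.70),
    "Phase II": (0.33, 0.33),
    "Phase III": (0.25, 0.30),
}


def cumulative_pass_rate(rates):
    """Multiply the per-phase pass rates to get overall (low, high) bounds.

    Assumes the phases are independent, which is a simplification.
    """
    low, high = 1.0, 1.0
    for phase_low, phase_high in rates.values():
        low *= phase_low
        high *= phase_high
    return low, high


low, high = cumulative_pass_rate(PHASE_PASS_RATES)
print(f"Estimated share of drugs passing Phases I-III: {low:.1%} to {high:.1%}")
```

Under these assumptions, only roughly 6 to 7 percent of the drugs that start Phase I would complete Phase III, which illustrates why clinical development is long and costly.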

To get a fuller picture of the many lab technician jobs, their roles, and their salaries, take a look at the job descriptions at www.biotech-careers.org or click on the specific role(s) in Table 2 that pique your interest.

Table 2: Laboratory Technician jobs in various fields

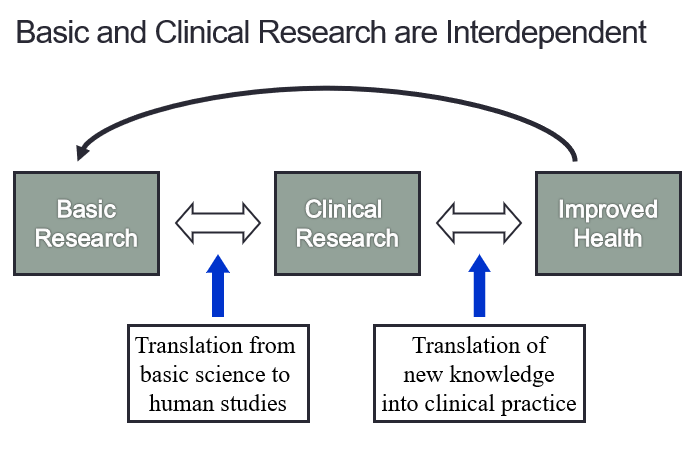

In summary, the various types of research (basic, translational, and clinical) are interdependent, as shown in Figure 3. They are vital for our understanding of scientific processes as well as for improving the health of patients.

Fig 3. Correlation of Basic & Clinical Research with human health

1.2 Introduction to Laboratory Quality Management System

A clinical or medical laboratory is a laboratory where tests are carried out on clinical or patient specimens to obtain information about the health of an individual. This can aid in the diagnosis, treatment, and prevention of disease. At any given time, these labs may have hundreds or thousands of samples undergoing numerous laboratory tests. At the core of this process, samples are collected, received, registered, and then processed. The concept of quality management will be explained in the context of medical laboratories.

Some of the important features of medical laboratories are:

- Highly complex operations

- Individuals doing complex tasks

- Absolute need for Accuracy

- Absolute need for Confidentiality

- Absolute need for Time Effectiveness

- Absolute need for Cost Effectiveness

Seventy percent of clinical decision making is predicated upon or confirmed by medical laboratory test results. In the United States, between 7 and 10 billion laboratory tests are reported annually. In a five-country study, 15% of patients were reported to have received either incorrect or delayed reports on abnormal results[7]. Hence there is a continuous and absolute necessity for quality management.

Quality can be defined as the conformance of a product to requirements. In the laboratory, quality can be defined as the accuracy, reliability, and timeliness of reported test results. Laboratory results must be as accurate as possible, all aspects of laboratory operations must be reliable, and reporting must be done in a timely manner for it to be useful in a clinical or public health setting.

A quality management system can be defined as “coordinated activities to direct and control an organization with regard to quality”[8].

This definition is used by internationally recognized laboratory standards organizations such as the International Organization for Standardization (ISO)[9] and the Clinical and Laboratory Standards Institute (CLSI)[10].

A quality management system can be described as a set of building blocks needed to control, assure, and manage the quality of the laboratory’s processes. The framework used here consists of 12 building blocks, called quality system essentials (QSEs). These quality system essentials are a set of coordinated activities that serve as building blocks for quality management[11].


Good quality in processes, equipment and performance is indispensable for the overall good performance of the laboratory.

1. Organization

Aims to create a functioning quality management system

- The structure and management of the laboratory must be organized so that quality policies can be established and implemented.

- There must be a strong supporting organizational structure with commitment from the management to ensure a functioning quality management system.

- There must be a mechanism for implementation and monitoring

2. Personnel

The most important laboratory resource is competent and motivated staff.

- The quality management system addresses many elements of personnel management and oversight, and emphasizes the importance of encouragement and motivation.

3. Equipment

Each piece of equipment or instrument used in a lab must be calibrated and functioning properly.

- The right equipment must be chosen and installed correctly.

- New equipment should be tested to ensure that it is functioning properly.

- There should be a system for maintenance of equipment.

4. Purchase and Inventory

Proper management of purchases and stock/inventory is important for cost savings.

- Supplies and reagents must be available when needed

- The procedures that are a part of management of purchasing and inventory must be designed to ensure that all reagents and supplies are of good quality.

- The reagents and supplies must be used and stored in a manner that preserves integrity and reliability.

5. Process Control

The laboratory must institute process control to ensure the quality of the laboratory testing processes.

- Process control factors include:

  - Quality control for testing

  - Appropriate management of the sample, including collection and handling

  - Method verification and validation

- The elements of process control are very familiar to laboratories; quality control was one of the first quality practices to be used in the laboratory and continues to play a vital role in ensuring accuracy of testing.

6. Information Management

The product of the laboratory is information, primarily in the form of test reporting.

- Information (data) must be carefully managed to ensure accuracy and confidentiality.

- It should be accessible to the laboratory staff and to the health care providers.

- Information may be managed and conveyed with either paper systems or with computers.

7. Documents & Records

Documents are needed in the laboratory to inform how to do things. Laboratories always have many documents.

- Records must be meticulously maintained so as to be accurate and accessible.

- Many of the 12 quality system essentials overlap.

- A good example is the close relationship between "Documents and records" and "Information management".

8. Occurrence Management

An “occurrence” is an error or an event that should not have happened.

- A system must be instituted to detect these problems or occurrences.

- The system must include steps to handle the “occurrence” properly, learn from mistakes, and take action so that they do not happen again.

9. Assessment

The process of assessment is a tool for examining laboratory performance and comparing it to standards, benchmarks or the performance of other laboratories.

- Assessment may be:

  - Internal (performed within the laboratory using its own staff)

  - External (conducted by a group or agency outside the laboratory)

- Laboratory quality standards are an important part of the assessment process, serving as benchmarks for the laboratory.

10. Process Improvement

The primary goal in a quality management system is continuous improvement of the laboratory processes.

- This must be done in a systematic manner.

- There are a number of tools that are useful for process improvement.

11. Customer Service

Customer service is essential for a medical laboratory because it is a service organization.

- It is essential that clients of the laboratory receive what they need.

- The laboratory should understand who the customers are, and should assess their needs and use customer feedback for making improvements.

12. Facilities & Safety

Many factors must be a part of the quality management of facilities and safety. These include:

- Security—which is the process of preventing unwanted risks and hazards from entering the laboratory space.

- Containment—which seeks to minimize risks and prevent hazards from leaving the laboratory space and causing harm to the community.

- Safety—which includes policies and procedures to prevent harm to workers, visitors and the community.

- Ergonomics—which addresses facility and equipment adaptation to allow safe and healthy working conditions at the laboratory site.

In the quality management system model, all 12 quality system essentials must be addressed to ensure accurate, reliable and timely laboratory results. It is important to note that the 12 quality system essentials may be implemented in the order that best suits the laboratory. Approaches to implementation will vary with the local situation.

Laboratories that do not implement a good quality management system will experience many errors and problems, some of which may go undetected. Implementing a quality management system may not guarantee an error-free laboratory, but it does yield a high-quality laboratory that detects errors and prevents them from recurring.

[1] First-Hand: Starting Up Cetus, the First Biotechnology Company - 1973 to 1982 - Engineering and Technology History Wiki. www.ethw.org

[2] Russo, E. (2003). "Special Report: The birth of biotechnology". Nature. 421 (6921): 456–457. Bibcode:2003Natur.421..456R. doi:10.1038/nj6921-456a. PMID 12540923. S2CID 4357773.

[3] https://sciencecenter.org/news/top-10-u-s-biopharma-clusters

[4] https://en.wikipedia.org/wiki/List_of_largest_biotechnology_and_pharmaceutical_companies

[5] http://www.biohealthcapital.com

[6] https://www.fda.gov/patients/drug-development-process/step-3-clinical-research

[7] Boone DJ, IQLM, 2005

[8] https://www.iso.org/iso-9001-quality-management.html

[9] www.iso.or

[1] Merriam-Webster (n.d.). In Merriam-Webster.com dictionary. Retrieved February 13, 2021, from https://www.merriam-webster.com/dictionary/laboratory.

Quality Control, Assurance & Management

Where do you hear the word ‘quality’ in daily life? When you call a company's customer service line, you often hear the words "this call may be monitored or recorded for quality assurance purposes," at the beginning of the conversation. Quality is of utmost importance for every organization that serves people in any way.

The word quality originates from the Latin word qualitas, which means “general excellence” or “a distinctive feature”[1]. Good quality is like reputation: it takes a long time to build but can be ruined by one mistake. If you set 99% as your level of quality, it means that you are willing to accept a 1% error rate.

A one percent error rate would mean 5 bad landings or take-offs out of 500 flights every day at a medium-sized airport. It could mean 0.2 million out of 20 million packages lost or delivered to the wrong address around the US every day by USPS. Neither of these is an acceptable outcome, so the highest standards of quality are needed in every aspect of life.
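The arithmetic behind these figures can be sketched in a few lines of Python. Only the 1% rate, the 500 flights, and the 20 million packages come from the text; the function name `expected_errors` and the stricter error rates (0.1% and 0.01%) are assumptions added for illustration.

```python
def expected_errors(total_events, error_rate):
    """Expected number of failed events for a given error rate (0.01 means 1%)."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return total_events * error_rate


# Daily volumes quoted in the text.
daily_volumes = {"flights": 500, "packages": 20_000_000}

# 0.01 is the 1% error rate from the text; the stricter rates are illustrative.
for error_rate in (0.01, 0.001, 0.0001):
    for name, volume in daily_volumes.items():
        errors = expected_errors(volume, error_rate)
        print(f"{error_rate:.2%} error rate: about {errors:,.2f} bad {name} per day")
```

Even at a 0.01% error rate, a system handling 20 million packages a day would still mishandle about 2,000 of them, which shows why an acceptable quality level depends on volume as well as on the percentage.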

Quality is defined as the totality of features and characteristics of a product or service that bear on its ability to satisfy stated or implied needs (ISO 1994).

Quality varies from different viewpoints:

- User-based: better performance, more features

- Manufacturing-based: conformance to standards, making it right the first time

- Product-based: specific and measurable attributes of the product

Every pharmaceutical product must have four attributes: identity, strength, safety, and purity[2].

Achieving quality means that these attributes are achieved for every product.

In the health care system, quality essentially means laboratory results that are:

- Accurate,

- Reliable,

- Timely

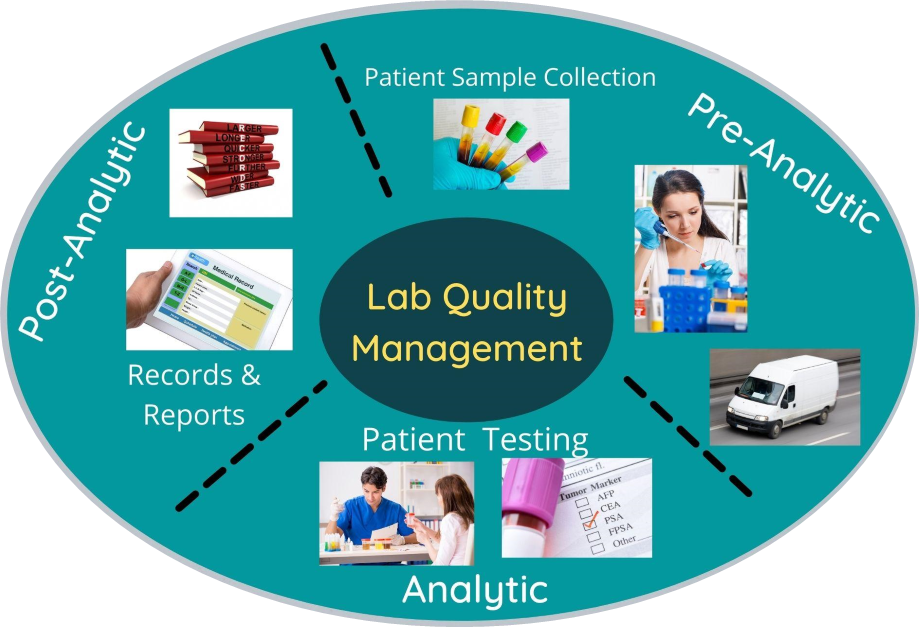

Quality in a complex laboratory system can be divided into three phases: pre-analytic, analytic, and post-analytic, as shown below[3].

Fig. 6 Quality in a Complex Laboratory System

There are 4 types of complexities in the laboratory system:

- Mistakes & defects

- Breakdowns & delays

- Inefficiencies

- Variation

These laboratory errors carry costs in 1) time, 2) personnel effort, and 3) patient outcomes. A report published in 2016 attributed 250,000 deaths per year in the US to medical errors, an average over an eight-year period. A 2006 publication reported that 17% of adult patients in the US experienced medical, medication, or lab errors[4], [5].

On achieving ‘quality’ or ‘excellent performance’ in the laboratory, the US FDA states that “Quality should be built into the product, and testing alone cannot be relied on to ensure product quality.”

- Building quality into the product involves having controls at every stage of manufacturing and not only terminal controls.

- These include controls on all input resources like people, facilities, equipment, materials, process and testing etc.

The following variables may affect the ultimate quality of the product:

- Raw material

- In-process variations

- Packaging material

- Labeling

- Finished product

- Manual error

All aspects of the laboratory operation need to be addressed to assure quality; together, they constitute a quality management system. A quality management system (QMS) is the set of coordinated activities to direct and control an organization with regard to quality.

The 12 Quality Management System (QMS) essentials that you read about in Chapter 1 are an integral part of healthcare and help in achieving high quality standards. For the purposes of a laboratory quality management system, they can be divided into three groups.

Table 3: QMS essentials categorized into Laboratory, Work and Outcome

Laboratory | Work | Outcome |

Organization | Process control | Occurrence management |

Facilities & safety | Documents & records | Assessments |

Personnel | Information management | Customer service |

Equipment | | Process improvement |

Purchasing & inventory | | |

Pareto Principle in Quality Management

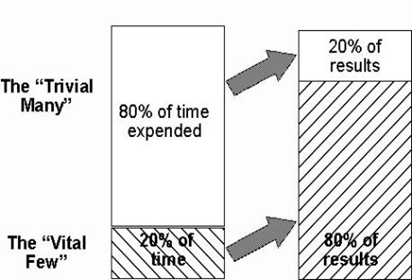

One of the important tools for quality management is the Pareto chart, based on the Pareto principle. Vilfredo Pareto, an Italian engineer and economist, first observed the 80/20 rule in relation to population and wealth. He noted that in Italy and several other European countries, 80% of the wealth was controlled by just 20% of the population. The Pareto principle, or 80–20 rule, is the observation that for many events, roughly 80% of the effects come from 20% of the causes.

Fig. 7. Pareto Principle – Time vs Results

Quality management pioneer Dr. Juran applied Pareto’s principle to quality management. In simple terms, about 20% of the time we spend produces roughly 80% of the results, while the remaining 80% of our time produces only about 20% of the results. In a manufacturing process, 80% of the downtime might result from 20% of the problems. A Pareto chart is a bar chart showing how much each cause contributes to an outcome or effect[1], [2].
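The calculation behind a Pareto chart can be sketched as follows: causes are sorted from most to least frequent, and a running cumulative percentage shows which few causes account for most of the effect. This is a minimal sketch with entirely hypothetical error counts; the function names `pareto_table` and `vital_few` and the 80% threshold parameter are assumptions for illustration, not part of any standard library.

```python
def pareto_table(cause_counts):
    """Return (cause, count, cumulative_percent) rows, largest cause first."""
    total = sum(cause_counts.values())
    ordered = sorted(cause_counts.items(), key=lambda item: item[1], reverse=True)
    rows = []
    running = 0
    for cause, count in ordered:
        running += count
        rows.append((cause, count, 100.0 * running / total))
    return rows


def vital_few(rows, threshold=80.0):
    """Return the leading causes needed to reach the cumulative threshold."""
    selected = []
    for cause, _count, cumulative in rows:
        selected.append(cause)
        if cumulative >= threshold:
            break
    return selected


# Hypothetical counts of laboratory errors by cause (illustrative data only).
error_counts = {
    "Mislabeled sample": 42,
    "Hemolyzed sample": 38,
    "Transcription error": 8,
    "Instrument drift": 5,
    "Expired reagent": 4,
    "Other": 3,
}

rows = pareto_table(error_counts)
for cause, count, cumulative in rows:
    print(f"{cause:<22} {count:>3}  {cumulative:5.1f}%")
print("Causes to address first:", vital_few(rows))
```

In this made-up data set, two of the six causes account for 80% of the errors, so a lab with limited resources would address those two first. Plotting the counts as bars with the cumulative percentage as a line gives the usual Pareto chart.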

According to the International Organization for Standardization (ISO), Total Quality Management (TQM) is a management approach of an organization that is centered on quality, based on the participation of all its members, and aimed at long-term success through customer satisfaction and benefits to all members of the organization and society.

Total Quality Management (TQM) involves quality management of the company, its processes, and its products. Quality control is a part of quality assurance, and quality assurance is a part of quality management.

- TQM involves coordinated and customized management effort with focus on quality.

- QA is responsible for ensuring quality requirements are being met.

- QC tests & maintains established quality criteria for products.

Fig. 8. Relationship – QC, QA and QM

Table 4: Differences between QA & QC

Basic Differences between QA & QC | |

QA approves tests, methods, and standards and ensures that high standards conforming to Good Manufacturing Practices are maintained, including in the QC facility. | QC is one compartment of the multicompartment QA system. |

QA is interested in what happened yesterday, what is happening today, what is going to happen tomorrow. | QC is interested in what is happening today. |

Definition | |

QA is a set of activities for ensuring quality in the processes by which products are developed. | QC is a set of activities for ensuring quality in products. The activities focus on identifying defects in the actual products produced. |

QA is a managerial tool | QC is a corrective tool |

Goals & Focus | |

QA aims to prevent defects with a focus on the process used to make the product. It is a proactive quality process. | QC aims to identify (and correct) defects in the finished product. Quality control, therefore, is a reactive process. |

The goal of QA is to improve development & test processes so that defects do not arise when product is being developed. | The goal of QC is to identify defects after a product is developed and before it's released. |

What & how does it work? | |

QA works by preventing quality problems through planned and systematic activities, including documentation. | QC works through the activities and techniques used to achieve and maintain the quality of the product, process, and service. |

QA establishes a good quality management system and the assessment of its adequacy. | QC finds & eliminates sources of quality problems through tools & equipment so that customer's requirements are continually met. |

Whose responsibility is it & example? | |

Everyone on the team involved in developing the product is responsible for quality assurance. | Quality control is usually the responsibility of a specific team that tests the product for defects. |

Verification is an example of QA. | Validation is an example of QC. |

The Quality Assurance Unit comprises any person or organizational element designated by management to perform the duties relating to QA of nonclinical laboratory studies.

Responsibilities of QA department:

- The QA department is responsible for ensuring that the quality policies adopted by a company are followed.

- It must determine that the product meets all the applicable specifications and that it was manufactured according to the internal standards of GMP.

QA is also responsible for the quality monitoring or audit function.

The concept of total quality control refers to the process of striving to produce a perfect product by a series of measures requiring an organized effort at every stage in production. Although the responsibility for assuring product quality belongs principally to QA personnel, it involves many departments and disciplines within a company. To be effective, it must be supported by team effort. Quality must be built into a drug product during product and process design, and it is influenced by the physical plant design, space, ventilation, cleanliness and sanitation during routine production.

Advantages of TQM: The advantages of TQM are 1) Improved reputation - faults and problems are spotted and sorted out more quickly. 2) Higher employee morale - workers are motivated by extra responsibility, teamwork and involvement in TQM decisions. 3) Lower cost - less waste, as there are fewer defective products and no need for separate quality control inspectors.

Disadvantages of TQM: The disadvantages of TQM are 1) Initial introduction cost. 2) Benefits of TQM may not be seen for several years. 3) Workers may be resistant to change.

The QMS Essentials model and TQM provide a roadmap that can successfully deliver the laboratory’s best contribution to patient care and ensure safety and quality control of products in the pharmaceutical industry.

[1] Pareto, Vilfredo; Page, Alfred N. (1971), Translation of Manuale di economia politica ("Manual of political economy"), A.M. Kelley, ISBN 978-0-678-00881-2

[2] Code of Federal Regulations, Title 21, https://www.accessdata.fda.gov/scripts/cdrh/cfdocs/cfcfr/CFRSearch.cfm?CFRPart=211&showFR=1

[3] Laboratory Quality Management System Handbook. https://www.who.int/ihr/publications/lqms/en/

[4] Health for a life. https://www.aha.org/system/files/content/00-10/071204_H4L_HighestQualityCare.pdf

[5] Makary Martin A, Daniel Michael. Medical error—the third leading cause of death in the US BMJ 2016; 353 :i2139

[6] Pareto, Vilfredo; Page, Alfred N. (1971), Translation of Manuale di economia politica ("Manual of political economy"), A.M. Kelley, ISBN 978-0-678-00881-2

Quality Management Regulation – US Regulatory Agencies

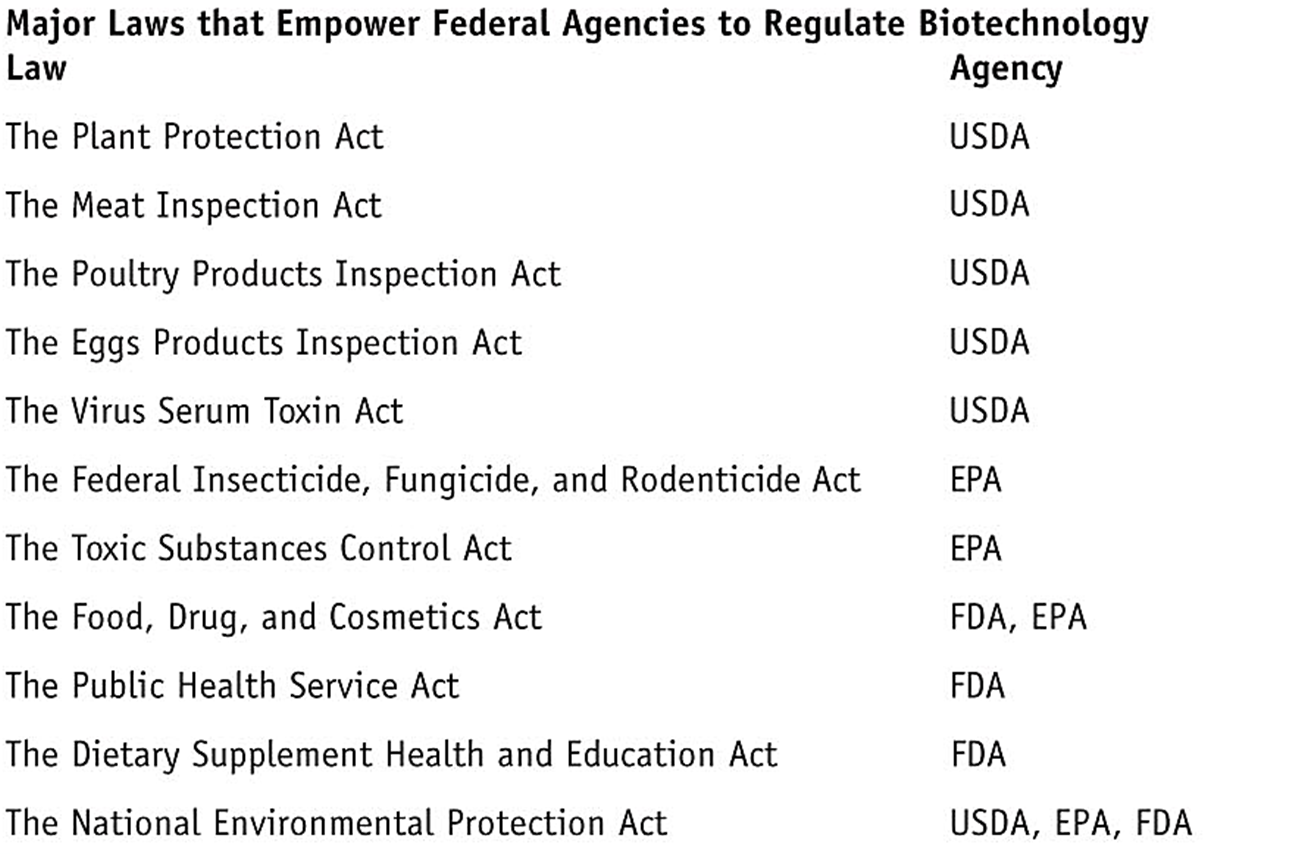

We have learned that the QMS ensures that a product meets the quality standards set by local and national governing bodies. A QMS helps bring products to market that meet the requirements of regulatory agencies and customers. Different regulatory agencies are responsible for different products. For example, the US Department of Agriculture (USDA) regulates plants, plant pests, and animal vaccines; the Environmental Protection Agency (EPA) regulates microbial and plant pesticides, toxic substances, microorganisms, and animals producing toxic substances; and the Food & Drug Administration (FDA) regulates food additives, human and animal drugs, human vaccines, medical devices, transgenic animals, and cosmetics. These regulatory agencies are empowered by law to regulate biotechnology products in the market. Some of the laws and respective regulatory agencies are listed here.

Our focus in this chapter will be on the FDA.

FDA’s Role and Responsibilities:

- FDA regulates drugs, foods, cosmetics, biologics, medical devices.

- FDA is responsible for the administration, enforcement, and interpretation of US drug law and has the power to establish regulations which have the force and effect of law.

- FDA has developed policies, procedures, and regulations to implement its regulatory initiatives.

History of drugs and regulations

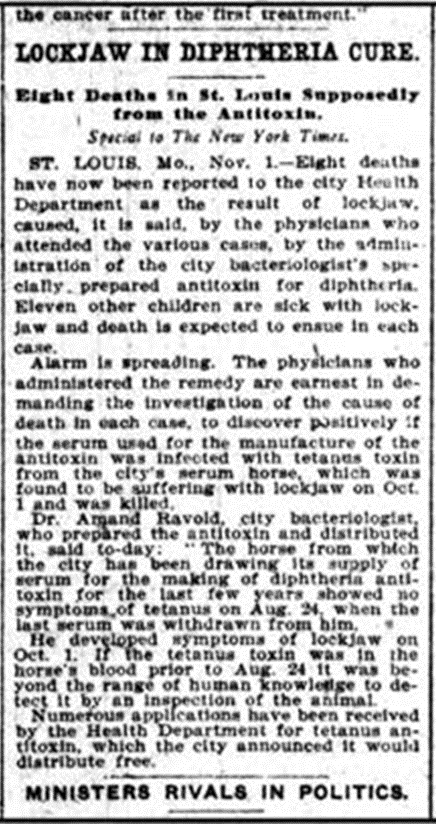

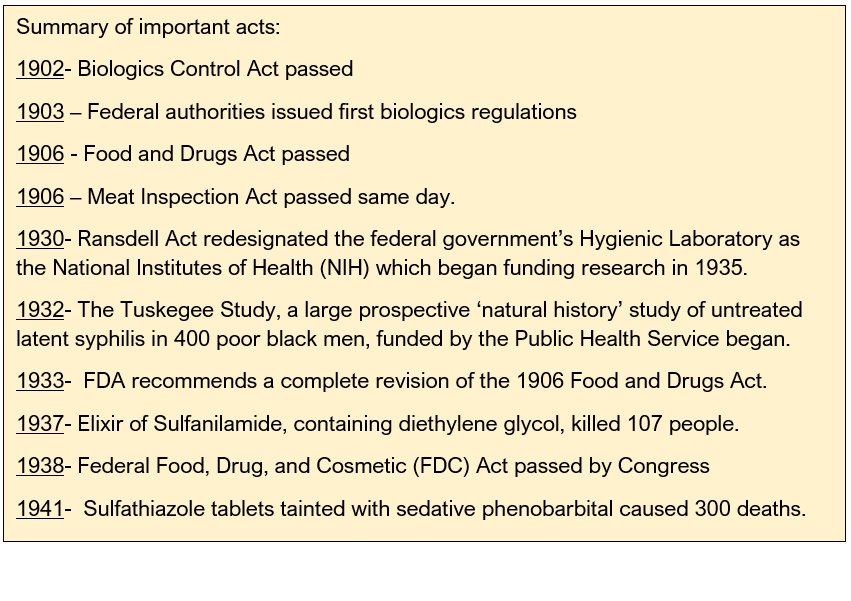

In 1848, most drugs used in the USA were imported. By the turn of the twentieth century, unregulated production of biologics such as antitoxins was resulting in illness and death. On October 26, 1901, a five-year-old St. Louis girl died from tetanus. Thirteen more children in St. Louis died of tetanus, and the cause was traced to a supply of diphtheria antitoxin prepared by the St. Louis Board of Health from a tetanus-infected horse. The St. Louis disaster wasn't the only such incident in the United States and Europe. Camden, New Jersey, was the site of almost a hundred cases of post-vaccination tetanus, including the deaths of nine children, in the fall of 1901.

1902: The Biologics Control Act was passed on July 1, 1902. Biologics had to be labeled with the name and license number of the manufacturer, and the production had to be supervised by a qualified scientist. Establishments were required to be licensed annually.

The Hygienic Laboratory, forerunner of the National Institutes of Health, was authorized to conduct regular inspections of the establishments and to sample products on the open market for purity and potency testing. Later, in 1972, this role was transferred to the FDA.

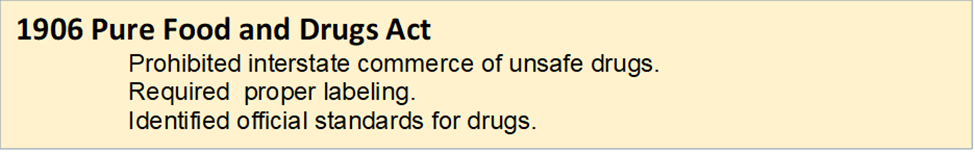

1906: Harvey Wiley, head of the Bureau of Chemistry of the U.S. Department of Agriculture, led the way toward consumer protection by working towards a law, the Pure Food and Drugs Act.

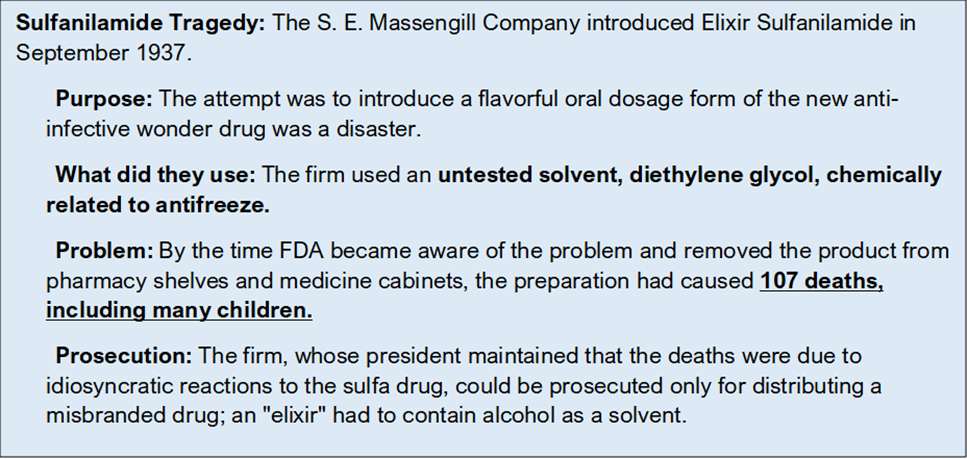

The Pure Food and Drugs Act was not free of problems, and its gaps allowed the Sulfanilamide tragedy to occur. The shortcomings of the 1906 act included its failure to regulate medical devices or cosmetics, the lack of explicit authority to conduct factory inspections, the difficulty in prosecuting false therapeutic claims following a 1911 Supreme Court ruling, and the inability to control what drugs could be marketed.

1938: The Elixir Sulfanilamide disaster reinvigorated a bill to replace the 1906 act.

Roosevelt signed the Food, Drug, and Cosmetic Act into law on June 25, 1938. The 1938 act required that firms had to prove to FDA that any new drug was safe before it could be marketed--the birth of the new drug application. The new law covered cosmetics and medical devices, authorized factory inspections, and outlawed bogus therapeutic claims for drugs. Drugs had to bear adequate directions for safe use, which included warnings whenever necessary.

1951: The 1938 act was vague on issues such as what a prescription was and who would be responsible for identifying prescription versus non-prescription drugs. This lack of statutory direction created many battles between FDA, regulated industry, and professional pharmacy, and within some of these groups as well. The 1951 Durham-Humphrey Amendment to the 1938 act helped clarify some of these disputed issues.

1962: Kefauver Harris amendment was introduced. The amendment was a response to the Thalidomide tragedy, in which thousands of children in Europe were born with birth defects as a result of their mothers taking thalidomide for morning sickness during pregnancy. Thalidomide had not been approved for use in the United States. The bill by U.S. Senator Estes Kefauver, of Tennessee, and U.S. Representative Oren Harris, of Arkansas, required drug manufacturers to provide proof of the effectiveness and safety of their drugs before approval.

1983: Orphan diseases are serious and debilitating rare diseases, each affecting fewer than 200,000 people, which typically receive little funding toward their prevention or treatment. About 20 million Americans suffer from at least one of the more than 5000 known rare diseases.

Representative Henry Waxman of California initiated hearings into the lack of drugs for orphan diseases. The Orphan Drug Act finally became law in 1983.

1990s: By the 1990s, a standard drug testing and approval process was in place. This included:

- Preclinical testing

- Clinical studies – Phase I, II, III

- Premarket Approval (PMA)

- Post marketing surveillance

The Prescription Drug User Fee Act (PDUFA) of 1992 authorized FDA to charge user fees for certain drug and biological product applications. These user fees support timely reviews of drugs. PDUFA has enabled the FDA to speed access to new drugs while maintaining the same thorough review process. As an exception, PDUFA fees may not need to be paid for orphan drugs.

Since PDUFA was passed, more than 1000 drugs and biologics have come to the market, including new medicines to treat cancer, AIDS, cardiovascular disease and life-threatening infections.

Current Role of FDA in Drug Development

The FDA’s role is to evaluate data on an investigational drug and determine whether it should be made available to patients. The benefits of the proposed drug must outweigh its risks and side effects. The FDA also issues directions on the right dosage and information on how to use the medication properly.

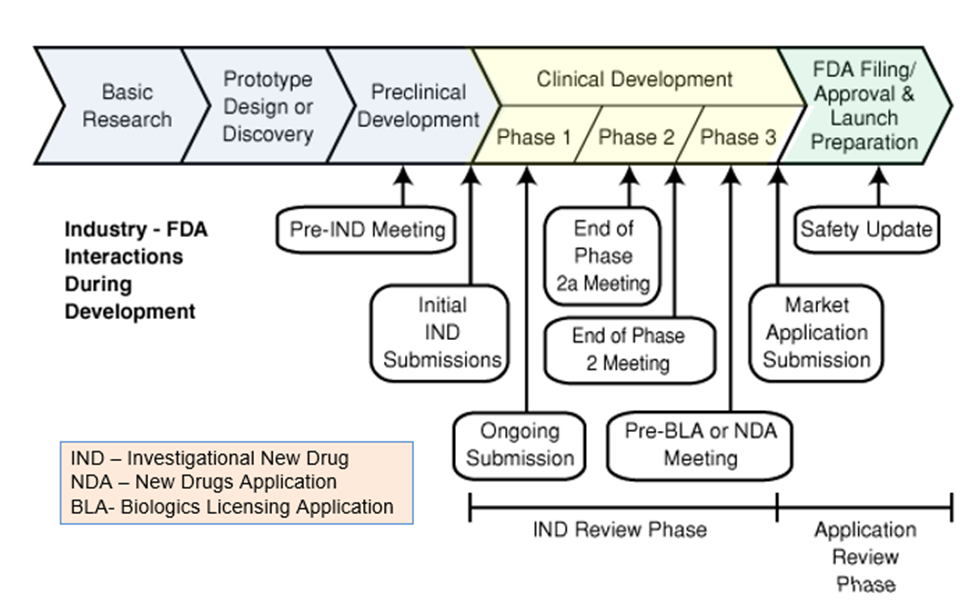

The FDA outlines its drug approval process as a 12-step process that includes 4 phases. The first step involves animal testing. If the drug proves to be non-toxic to animals, the sponsor can submit an IND (Investigational New Drug) application, after which the drug goes into clinical trials and/or studies. The clinical trials are separated into three initial phases.

In case of emergencies such as the COVID-19 pandemic, FDA has created the Coronavirus Treatment Acceleration Program (CTAP). This program is responsible for managing the delicate balance between expediting the process of getting much-needed therapies approved for public use while simultaneously monitoring the safety of said therapies.

Despite various reforms to FDA’s processes, developing new medicines has become increasingly expensive and time-consuming. The Critical Path Initiative is FDA’s effort to address the need for up-to-date scientific means of evaluating the safety, efficacy, and quality of medicines. The object of this initiative is to reduce the time, cost, and uncertainty of product development.

The Critical Path Initiative

- Creates new scientific tools for safety, efficacy, and quality

- Strives to reduce time, cost, and uncertainty

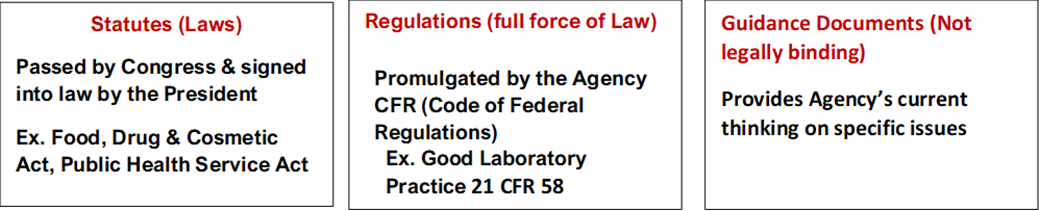

Regulatory Framework

The regulatory framework comprises three parts:

FDA’s Quality Systems Inspection Techniques

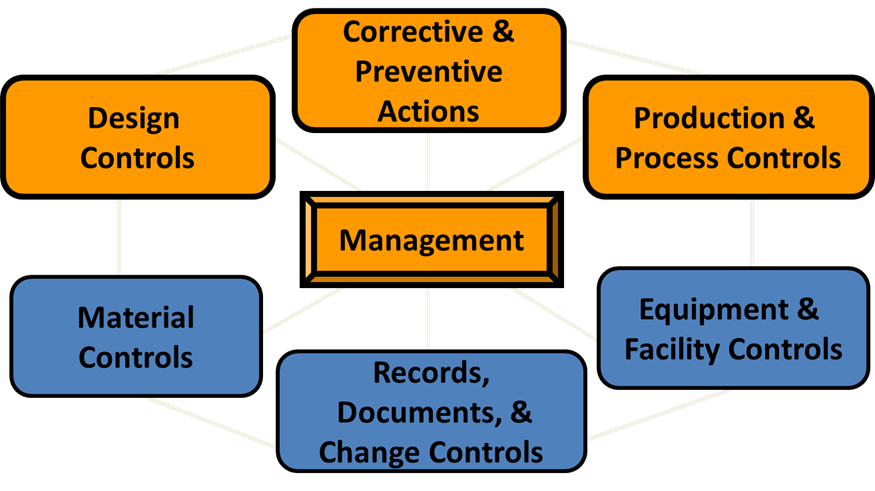

The Quality System Inspection Technique, or QSIT, is a plan for investigators to follow when evaluating a manufacturer’s compliance with regulations for Quality Systems, Medical Device Reporting, Corrections and Removals, and Tracking.

The QSIT handbook also includes guidance for covering sterilization when the Production and Process Control subsystem is inspected.

FDA has identified 7 subsystems in the Quality System.

- You can think of these subsystems as types of activities.

- You can find these subsystems identified clearly in the Quality System Regulation.

The four subsystems in focus are:

- Management Control

- Design Controls

- Corrective & Preventive Actions

- Production & Process Control

Management Control:

The purpose of this subsystem is to 1) provide adequate resources, 2) ensure the establishment and effective functioning of the quality system, and 3) monitor the quality system and make necessary adjustments.

Management Subsystem has the following requirements:

- Conduct management reviews

- Appoint Management representative and required personnel

- Establish Quality Policy along with objectives & organizational structure.

- Establish Quality audit procedures and quality audits

Design Controls:

The purpose of this subsystem is to 1) control the design process and 2) assure that the device design meets user needs, intended uses and the specified requirements.

Design Controls subsystem has the following requirements:

- Establish a plan that describes or references design and development activities

- Identify design inputs

- Develop design outputs

- Verify that design outputs meet design inputs

- Validate the design (include software validation and risk analysis)

- Control design changes

- Review design results

- Transfer the design to production

- Compile a design history file

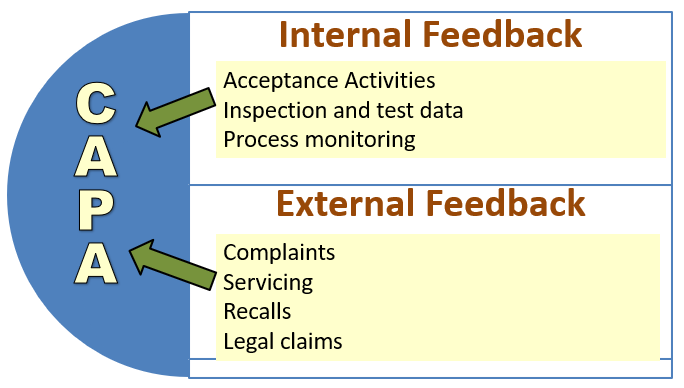

Corrective & Preventive Action (CAPA)

The purpose of this subsystem is to 1) Collect and analyze information/data 2) Identify and investigate product and quality problems 3) Identify, implement and validate effective corrective and preventive action 4) Communicate CAPA to appropriate personnel.

CAPA has the following requirements:

- CAPA procedures must be established.

- Investigations must be conducted to identify root cause of failures.

- Appropriate corrective actions and preventive actions must be carried out.

- Personnel must be trained on CAPA activities.

- Management must review CAPA activities.
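The CAPA requirements listed above can be pictured as a simple completeness check on a record. The following is only a minimal sketch: the `CapaRecord` class, its field names, and the example problem are hypothetical and are not taken from FDA regulations or from any real quality-management software.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CapaRecord:
    """Hypothetical CAPA record; field names are illustrative, not FDA-defined."""
    problem: str
    root_cause: Optional[str] = None
    corrective_action: Optional[str] = None
    preventive_action: Optional[str] = None
    trained_personnel: List[str] = field(default_factory=list)
    management_reviewed: bool = False

    def open_items(self) -> List[str]:
        """Return the CAPA requirements that this record has not yet met."""
        missing = []
        if not self.root_cause:
            missing.append("root cause investigation")
        if not self.corrective_action:
            missing.append("corrective action")
        if not self.preventive_action:
            missing.append("preventive action")
        if not self.trained_personnel:
            missing.append("personnel training")
        if not self.management_reviewed:
            missing.append("management review")
        return missing


# Example record (invented for illustration): investigation and correction are
# documented, but prevention, training, and management review are still open.
record = CapaRecord(
    problem="Balance drifted out of tolerance",
    root_cause="Missed calibration",
    corrective_action="Recalibrate and re-weigh affected samples",
)
print(record.open_items())
```

A record would be considered ready to close only when `open_items()` returns an empty list.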

Post-Inspection:

The audited company will send a letter to FDA identifying how it has corrected the deficiencies or will correct them. It may also provide documentation of any corrections that have been completed, along with a timetable or estimated completion date for future corrections.

Types of warning letter

If a company or manufacturer has significantly violated FDA regulations, FDA notifies the manufacturer in the form of a Warning Letter. The Warning Letter identifies the violation, such as poor manufacturing practices, problems with claims for what a product can do, or incorrect directions for use. There are different types of warning letters:

- General FDA Warning Letters

- Tobacco Retailer Warning Letters

- Drug Marketing and Advertising Warning Letters (and Untitled Letters to Pharmaceutical Companies)

The Office of Prescription Drug Promotion (OPDP) regulates prescription drug promotional materials made by or on behalf of the drug’s manufacturer, packer, or distributor, including: 1) TV and radio commercials 2) Sales aids, journal ads, and patient brochures 3) Drug websites, e-details, webinars, and email alerts.

FDA’s Bad Ad Program

This program was launched in 2010. It was designed to educate healthcare providers about the role they can play in helping make sure that prescription drug advertising is truthful. It provides an easy way to report misleading information to the agency (E-mail BadAd@fda.gov or call 855-RX-BADAD or by going to Fda.gov/badad)

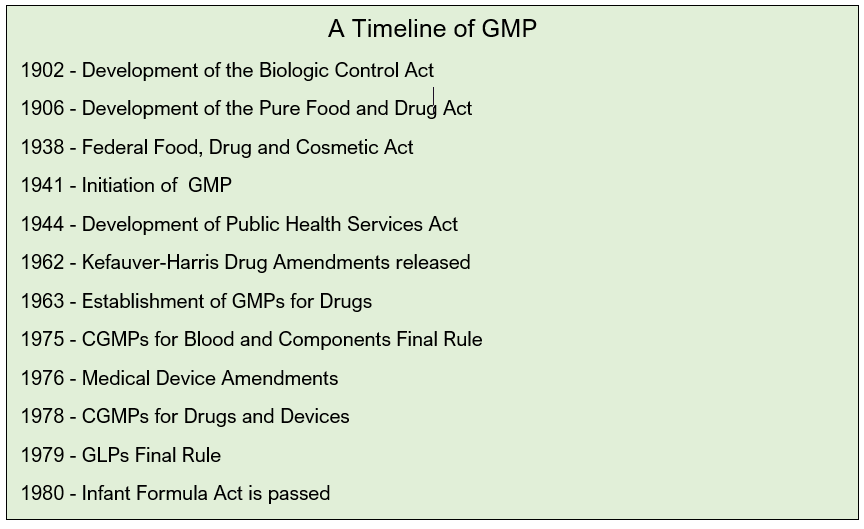

Good Laboratory Practices

In the early 1900s, a number of acts began the regulatory process in the USA. The FDA became known by its present name in the 1930s, but its function had begun much earlier:

1947: The Nuremberg Code was drafted in 1947. This was a 10-point code that described the basic principles of ethical behavior in the conduct of human experimentation.

Three pivotal points were stressed:

- 1. Voluntary consent of the subject must be obtained.

- 2. Prior animal experimentation to determine risk must be performed.

- 3. Human experimentation must be performed by qualified medical personnel

1958: The Food Additives Amendment came into effect in 1958. This act enforced the following:

- Required manufacturers of new food additives to establish safety

- Prohibited the approval of any food additive shown to induce cancer in humans or animals

1960: The Color Additive Amendment, passed in 1960, enforced the following measures of food safety:

- Required manufacturers to establish safety of color additives in foods, drugs, and cosmetics.

- Prohibited approval of color additives shown to induce cancer in humans/ animals.

1962: Thalidomide Tragedy

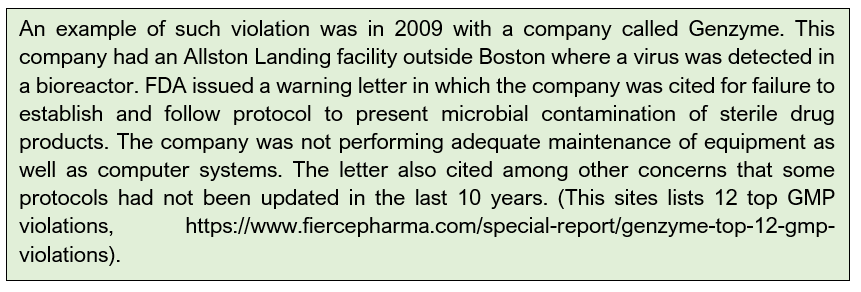

In 1962, a new sleeping pill, thalidomide, caused birth defects in thousands of babies in Europe. Dr. Frances Kelsey refused to approve the new drug for marketing in the US because of insufficient safety data and saved thousands of lives. In 1963, the overseas thalidomide catastrophe was instrumental in the FDA completing the first draft of Good Manufacturing Practices (GMPs) and making them legal requirements. GMPs set requirements for sanitation, inspection of materials and finished products, record-keeping, and other quality controls.

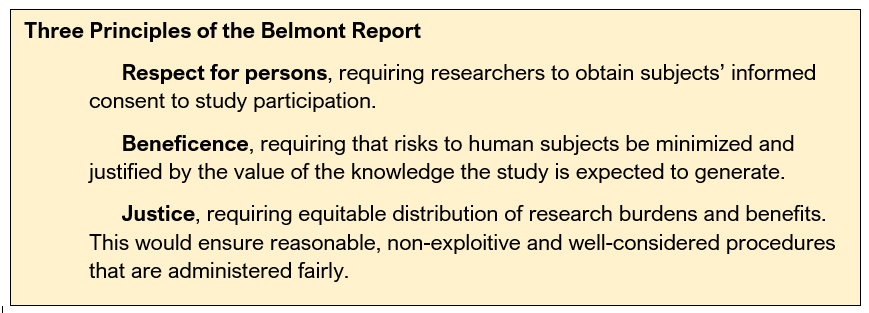

1972: The Tuskegee Syphilis Experiment was an infamous clinical study conducted between 1932 and 1972 by the U.S. Public Health Service to study the natural progression of untreated syphilis in rural African-American men. The men were told that they were receiving free health care from the U.S. government. The 40-year study was controversial for many reasons related to ethical standards because researchers knowingly failed to treat patients appropriately after the 1940s validation of penicillin as an effective cure for the disease they were studying. Revelation in 1972 of study failures by a whistleblower led to major changes in U.S. law and regulation on the protection of participants in clinical studies.

1974: As a result of the Tuskegee revelations, the National Research Act was passed. It established federal law requiring the review of all clinical research by an Institutional Review Board (IRB), and it created a Commission to define the ethical standards under which research in the United States was to be conducted. The Commission was charged with identifying the basic ethical principles that should underlie the conduct of biomedical and behavioral research involving human subjects and developing guidelines to assure that such research is conducted in accordance with those principles. Informed by monthly discussions that spanned nearly four years and an intensive four days of deliberation in 1976, the Commission published the Belmont Report, which identifies basic ethical principles and guidelines that address ethical issues arising from the conduct of research with human subjects. The report was written in response to the infamous Tuskegee Syphilis study, in which African Americans with syphilis were lied to and denied treatment for more than 40 years.

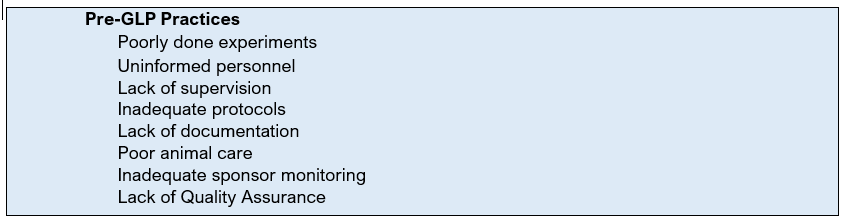

Events at Industrial Biotest that led to US Good Laboratory Practices (GLP)

There were several events that led to the eventual establishment of Good Laboratory Practices. The most infamous, however, was the set of research discrepancies noted at a leading contract research laboratory, Industrial Biotest Corporation (IBT). IBT was an American industrial product testing facility that conducted studies for the federal government as well as for private companies. In the 1970s, IBT performed 35% to 40% of all toxicology tests in the US.

However, FDA and EPA investigations uncovered numerous discrepancies. Of 867 studies audited by the FDA, 618 (71%) were invalidated for having "numerous discrepancies between the study conduct and data." Consequently, IBT was at the center of one of the most far-reaching scandals in modern science, as thousands of its studies were revealed through EPA and FDA investigations to be fraudulent or grossly inadequate.

An employee of Syntex Corp. acted as a whistleblower and notified the FDA of problems. (A whistleblower is a person who exposes any kind of information or activity that is deemed illegal, dishonest, or not correct within an organization that is either private or public.)

Naprosyn, an antiarthritic drug, had been tested by IBT. The Syntex employee expressed concern to IBT officials about the submitted report. The file provided enough evidence to warrant an inspection of the facility by the FDA.

Irregularities in IBT's data were discovered in April 1976 by Adrian Gross, an investigator at the FDA, whose aide retrieved one of the laboratory's naproxen studies that had been conducted for Syntex, a pharmaceutical company. The FDA proceeded to probe IBT. During Gross' physical inspection of the laboratory, he gained access to the study's raw safety data and found frequent references to an unknown acronym, "TBD/TDA," which he said perplexed him until learning that it denoted a testing animal whose body had "too badly decomposed."

IBT had a building where all of the rodents were housed; it was nicknamed "The Swamp". The Swamp was a horrible place. Its automatic watering system had faulty nozzles that sprayed a chilling mist over the animals. The water filled their food jars, and some animals subsequently drowned. Some of those that didn't drown died of exposure. They were wet and cold and could not survive. There were routinely 80% mortality rates in chronic studies. Technicians later told the FDA that the animals would decompose so rapidly that the bodies would ooze through the cage bottom. There were other problems: rats would escape through wire cages that were bent. There was a wild breeding colony that lived on the floor of the animal rooms. These wild rats would chew the toes off the caged rats. Technicians would use chloroform to slow the wild rodents down to try to catch them. The chloroform fumes would kill some of the caged study animals.

The section head for Rat Toxicology submitted fraudulent mortality data. 1000 new mice were ordered to replace those that had died during the study. Blood and urine analyses were fabricated at the end of a 2-year rat study. Management forged signatures on reports. Eventually, there were indictments on 8 counts for conducting and distributing false scientific data.

IBT had over 22,000 studies to their credit. IBT studies were the basis for safe product rating for hundreds of drugs and pesticides. Of 1205 key pesticide studies, only 214 (18%) of the studies were found to be valid after retesting. Of 867 agency audits, 618 (71%) of the studies were found to be invalid. IBT was found to be engaging in extensive scientific misconduct and fraud, which resulted in the indictment of its president and several top executives in 1981 and convictions in 1983.
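The percentages quoted above follow directly from the study counts. As a quick arithmetic check (a sketch only; the helper name `percent` is illustrative):

```python
def percent(part: int, whole: int) -> int:
    """Return part/whole as a percentage rounded to the nearest whole number."""
    return round(100 * part / whole)


# Counts taken from the paragraph above.
valid_pesticide_studies = percent(214, 1205)  # key pesticide studies found valid
invalid_audited_studies = percent(618, 867)   # audited studies found invalid

print(f"Valid pesticide studies: {valid_pesticide_studies}%")
print(f"Invalid audited studies: {invalid_audited_studies}%")
```

The results round to 18% and 71%, matching the figures in the text.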

Firms that used IBT to test their products could not assume that the scientific data and reports submitted to the agencies were accurate and complete. Data was found to have been inaccurately reported as protocols weren't followed. Technicians may not have known that there was a protocol. Techs were not trained to keep accurate records or to make careful observations. Management did not assure proper supervision or critical review of data. There was no documentation of the qualification of technicians or scientists. Thousands of critical research projects were performed by IBT for nearly every major American chemical and drug manufacturer.

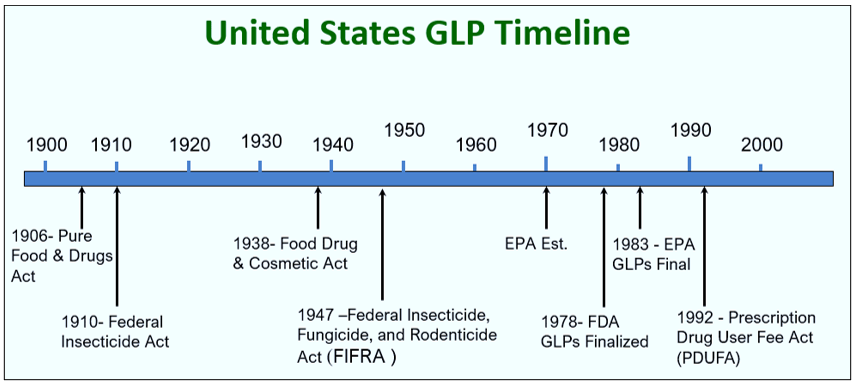

Problems at IBT and many other laboratories prompted the need for a set of industry guidelines. Although Good Laboratory Practices (GLP) were originally intended as guidelines, the problems with IBT forced the FDA to enact them as law. This figure gives a basic timeline of the regulations and shows the development of the GLPs as we know them today.

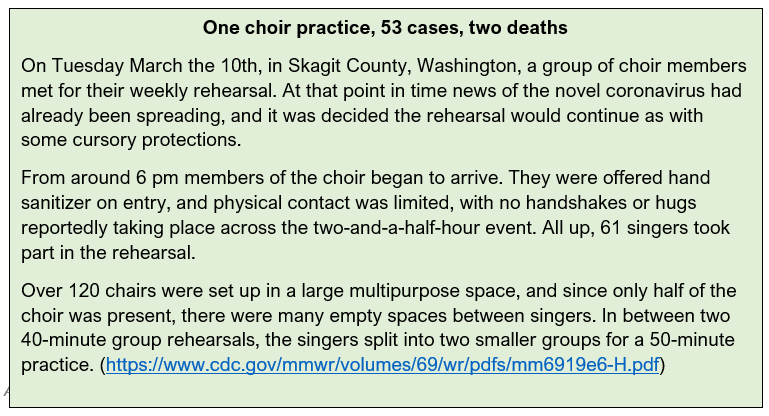

Good laboratory practice, or GLP, refers to a quality system of management controls for research laboratories and organizations that is intended to ensure the uniformity, consistency, reliability, reproducibility, quality, and integrity of non-clinical safety tests of chemicals (including pharmaceuticals), from physicochemical properties through acute to chronic toxicity tests.

GLP was first introduced in New Zealand and Denmark. It was proposed in the USA in November 1976, implemented in June 1979, and revised in September 1987; minor changes were made to it in July 1991.

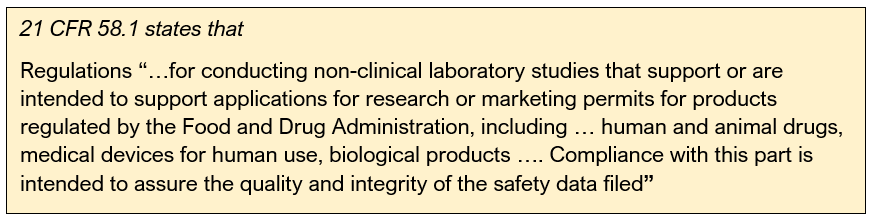

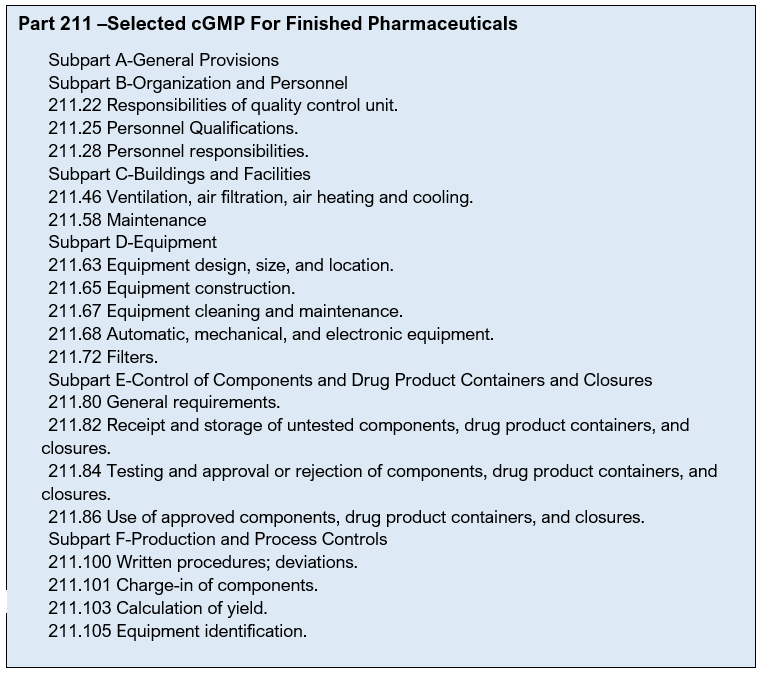

The United States FDA has rules for GLP in 21 CFR 58. Title 21 is the portion of the Code of Federal Regulations that governs food and drugs within the United States. Preclinical trials on animals in the United States follow these rules prior to clinical research in humans.

Scope of GLP:

GLPs apply to all studies supporting application/permits for FDA (Food & Drug Administration), EPA (Environmental Protection Agency), or international agencies.

GLPs do not apply to

- Basic exploratory studies

- Clinical studies (GCP)

- Testing in support of manufacturing (GMP)

GLP is a regulation that covers the quality management of non-clinical safety studies. The aim of GLP is to ensure that scientists organize and conduct the studies in a way that promotes the quality and validity of test data.

The purpose of GLP in scientific studies is

- To implement clear structures in the study.

- To ensure that the right procedures are being followed and that they comply with GLP.

- To ensure that test data and results are reliable.

- GLP is not involved in “Science” of the study but “Organization” of the study.

GLP does not aim to guide scientific studies or

- Tell what tests are to be performed.

- Tell what protocols are to be used.

- Assess the scientific value of the study.

These are achieved by the scientific guidelines of the study, not by GLP.

GLP aims to make the incidence of FALSE NEGATIVES more obvious.

(False negative: results demonstrate non-toxicity of a toxic substance)

This doesn’t translate to incorrect clinical studies or harm to humans but often results in wasted effort and resources prior to clinical studies.

It also aims to make the incidence of FALSE POSITIVES more obvious.

(False positive: results demonstrate toxicity of a non-toxic substance)

This doesn’t translate to clinical studies, as a compound that may be valuable and useful is discarded before clinical trials.

GLP aims to promote mutual recognition of studies across international frontiers.

30 countries are OECD members. Before GLP existed, many countries would refuse drugs developed abroad or question the authenticity of foreign studies, and trials had to be repeated.

OECD members and even non-OECD members now accept that studies have been conducted under acceptable organizational standards.

Essence and coverage of GLP:

GLP is a managerial concept for the organization of studies, particularly non-clinical studies. It covers food & color additive petitions, NDA (New Drug Applications) & NADA (New Animal Drug Applications), and toxicity studies (in vitro & in vivo). It excludes human subject trials, clinical or field trials in animals, and basic exploratory studies.

GLP defines the conditions under which studies are:

- Planned (Study plan/ protocol)

- Performed (Standard Operating Procedures)

- Recorded (Collection of raw data and deviations)

- Reported (The resulting final report has to be accurate)

- Archived (Study data, samples and specimens must be properly archived.)

- Monitored (Monitoring by study staff, Quality assurance personnel and quality inspectors)

The purpose of GLPs is to assure the quality & integrity of data submitted to FDA in support of the safety of regulated products. GLPs place heavy emphasis on data recording and on record & specimen retention. Equipment should meet the following requirements:

- Equipment shall be adequately inspected, cleaned & maintained

- Equipment used for assessment of data shall be tested, calibrated and/or standardized

- Scales & balances should be calibrated at regular intervals (usually ranging from 1-12 months)
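The calibration requirement above amounts to simple date arithmetic. The following is a minimal sketch, assuming a hypothetical balance with a 90-day calibration interval; the function names, dates, and interval are illustrative and are not prescribed by GLP.

```python
from datetime import date, timedelta


def calibration_due(last_calibrated: date, interval_days: int) -> date:
    """Return the date on which the next calibration is due."""
    return last_calibrated + timedelta(days=interval_days)


def is_overdue(last_calibrated: date, interval_days: int, today: date) -> bool:
    """Return True if today falls after the calibration due date."""
    return today > calibration_due(last_calibrated, interval_days)


# Illustrative values only: a balance last calibrated on 15 January 2024
# with an assumed 90-day interval.
last = date(2024, 1, 15)
due = calibration_due(last, 90)
print(due)
print(is_overdue(last, 90, today=date(2024, 4, 15)))
```

In practice the interval would come from the facility's SOP for the specific instrument, and each calibration would be recorded in the equipment logbook.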

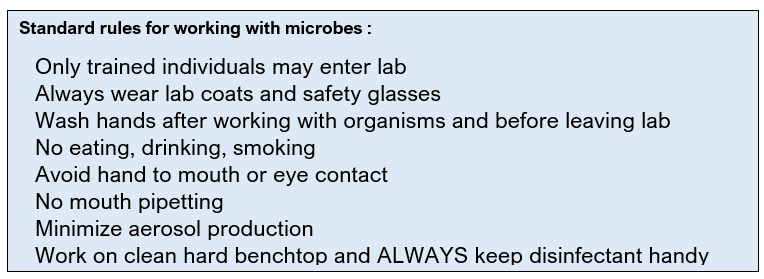

A testing facility shall have Standard Operating Procedures (SOPs) adequate to insure the quality & integrity of the data generated in the course of a study. All deviations from SOPs shall be authorized by the study director & documented in the raw data.

SOP for animal care: SOPs are required for all aspects of animal care. Newly received animals shall be isolated & their health status evaluated. At the beginning of a study, animals shall be free of any disease or condition that might interfere with the study. Animals of different species shall be housed in separate rooms. Feed & water are analyzed periodically for contaminants. Contaminant analysis of feed & water for each & every study is not a requirement, nor is analysis for a laundry list of contaminants.

This table summarizes the GLP regulations and the tools used to manage them.

| GLP Regulations (Rules) | Documentation (Tools) |
| --- | --- |
| Organization & Personnel | Training records, CVs, GLP training |
| Facilities | Maintain adequate space/ separation of chemicals from office areas |
| Equipment | Calibration, logbooks of use, repair and maintenance; check freezers |
| Facility Operation | Standard Operating Procedures |
| Test, Control & Reference Substances | Chemical and sample inventory, track expiration dates, labeling |
| Records and reports | Timely reporting, storage of raw data & reports |

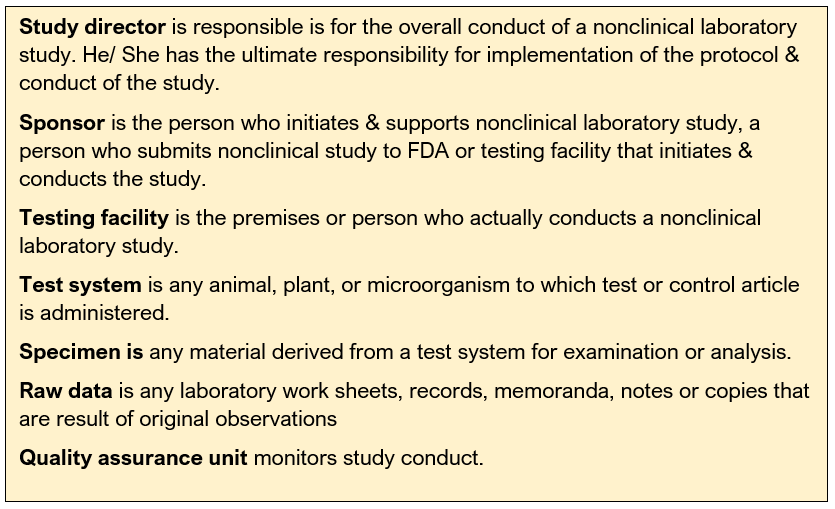

Laboratory management responsibilities and organizational requirements take up about 15% of the GLP text, clearly demonstrating that the regulators also consider these points as important. Management has the overall responsibility for the implementation of GLP including both good science and good organization within their institution.

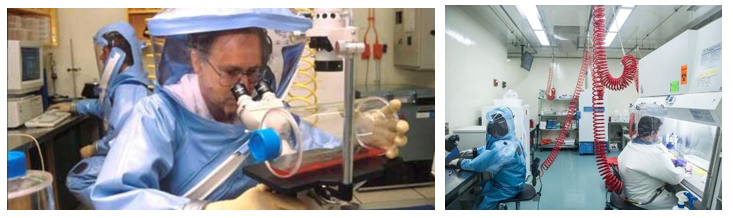

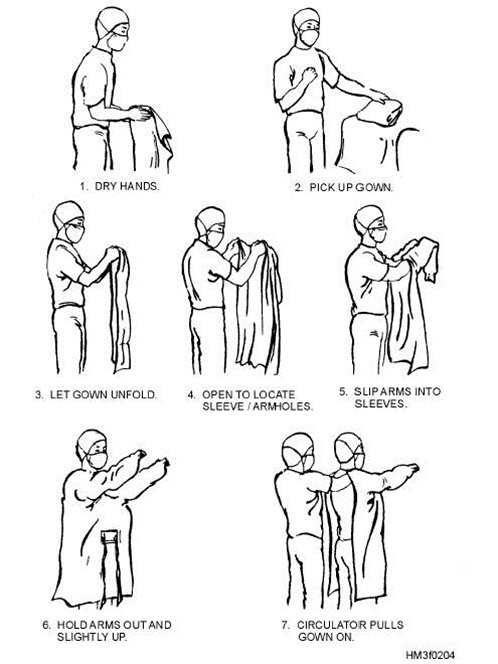

GLP Personnel should have sufficient education, training, and/or experience. They have access to protocols and SOPs. They are required to wear appropriate clothing. They avoid contamination through maintaining personal sanitation and health precautions. They are required to report if they are sick.

FDA regulations assign specific responsibilities to management. Management needs to designate a Study Director and replace the Study Director promptly if necessary. It needs to assure that there is a Quality Assurance Unit (QAU). It also needs to assure that personnel, resources, facilities, equipment, materials, and methodologies are available as scheduled. It needs to make sure that personnel clearly understand the functions they are to perform and that corrective actions are taken and documented as a result of deviations noted by the QAU. All test and control articles must be characterized.

The GLP regulation had a worldwide impact. The primary reason was that about 30% of the world’s pharmaceutical product market was, and continues to be, in the USA.

OECD – Organization for Economic Cooperation and Development. The OECD is a worldwide organization dedicated to economic development.

JMAFF – Japanese Ministry of Agriculture, Forestry and Fisheries

Test site is defined only by OECD and JMAFF. It is the location(s) at which a phase of the study is conducted.

Test Site Management is defined by OECD and JMAFF. It refers to the person(s) responsible for ensuring that the phase(s) of the study, for which he is responsible, are conducted according to these Principles of GLPs.

Principal Investigator is a term not defined by FDA or EPA.

When the OECD regulations were updated in 1997, the term Principal Investigator was made a legal term. Only OECD and JMAFF regulations define it: a Principal Investigator is an individual who, for a multi-site study, acts on behalf of the Study Director and has defined responsibility for delegated phases of the study.

The Study Director’s responsibility for overall conduct of the study cannot be delegated to the Principal Investigator(s).

While the Principal Investigator is responsible for the portion of the study that is delegated to them, the Study Director is still the central point of control and must be notified of all study occurrences.

In the larger scheme of drug development, Good Laboratory Practices are the first set of regulations to be followed, even before the filing of an Investigational New Drug (IND) application, sometimes together with Good Clinical Practice (GCP) and Good Manufacturing Practices (GMP). Once a candidate drug has passed through all clinical trials, the manufacturer can file a New Drug Application for the drug to be marketed for medical purposes.

SUMMARY

Good Laboratory Practices:

- Apply to non-clinical studies.

- Ensure reliable assessment of the safety/ efficacy of chemicals/ pharmaceuticals.

- Support compliance with regulatory agency guidelines.

LINK

FDA CFR Title 21 Part 58- Good Laboratory Practices for Nonclinical Laboratory Studies

Standard Operating Procedures

Chapter 5: Standard Operating Procedures

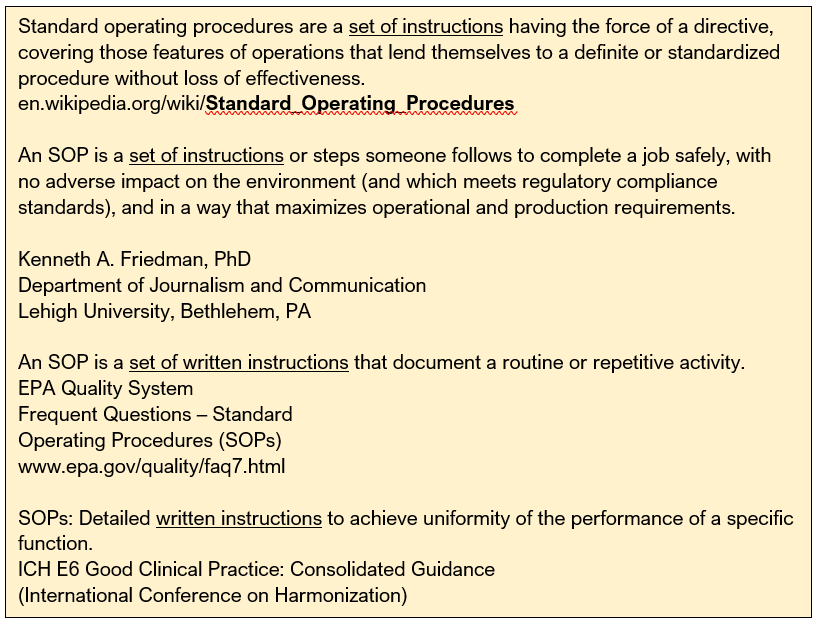

Standard Operating Procedures (SOPs) are sets of step-by-step instructions for carrying out a process or making a product so as to achieve a predictable, standardized, desired result.

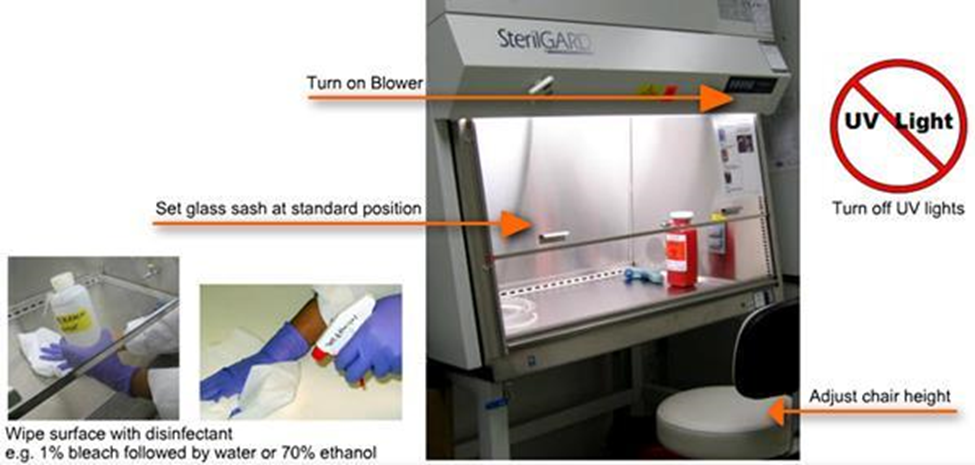

SOPs are essentially the backbone for achieving GLP, GMP and/or GCP using a Quality Management System. Without Standard Operating Procedures, things can get complicated and confusing.

The need for SOPs: The FDA has placed us in an environment of regulatory compliance. Most regulatory and accrediting agencies require that those who perform procedures have the education, experience and training to do so.

SOPs are called for in several regulations and guidelines, as quoted here.

Good Laboratory Practice 21 CFR 58.81(a)

A testing facility shall have standard operating procedures in writing setting forth nonclinical laboratory study methods that management is satisfied are adequate to ensure the quality and integrity of the data generated in the course of a study.

Good Manufacturing Practice 21 CFR 211.100

There shall be written procedures for production and process control designed to assure that the drug products have the identity, strength, quality, and purity they purport or are represented to possess.

Good Tissue Practice 21 CFR 1271.180

You must establish and maintain procedures appropriate to meet core CGTP requirements for all steps that you perform in the manufacture of HCT/Ps. You must design these procedures to prevent circumstances that increase the risk of the introduction, transmission, or spread of communicable diseases through the use of HCT/Ps.

ICH Guidance For Industry

E6 Good Clinical Practice: Consolidated Guidance

Principles of ICH GCP § 2.13

Systems with procedures that assure the quality of every aspect of the trial should be implemented.

SOPs can have different formats, as different agencies, institutions, and companies may write them in different ways. Employees are trained on the SOP format used at their organization and must adhere to it.

A good SOP should always be:

- Accurate

- Up to Date

- Easy to understand and follow

- Able to accomplish the purpose for which it is written

SOPs are the foundation of training and have specific purposes:

- To provide people with all the information necessary to perform a job properly (i.e. a training tool)

- To ensure that the procedures are performed correctly and consistently

- To ensure compliance with university and government regulations

- To serve as a checklist for auditors

- To serve as an explanation of steps in a process so they can be reviewed in accident investigations.

- To serve as a historical record of how, why and when steps in an existing process occurred (for inspectors and attorneys)

- To Ensure Safety

- To Maximize Operational and Production Performance

- To Ensure Consistent Training

- To Ensure Correct and Consistent Performance

- To Ensure Regulatory Compliance

- Just Because It Makes Good Sense

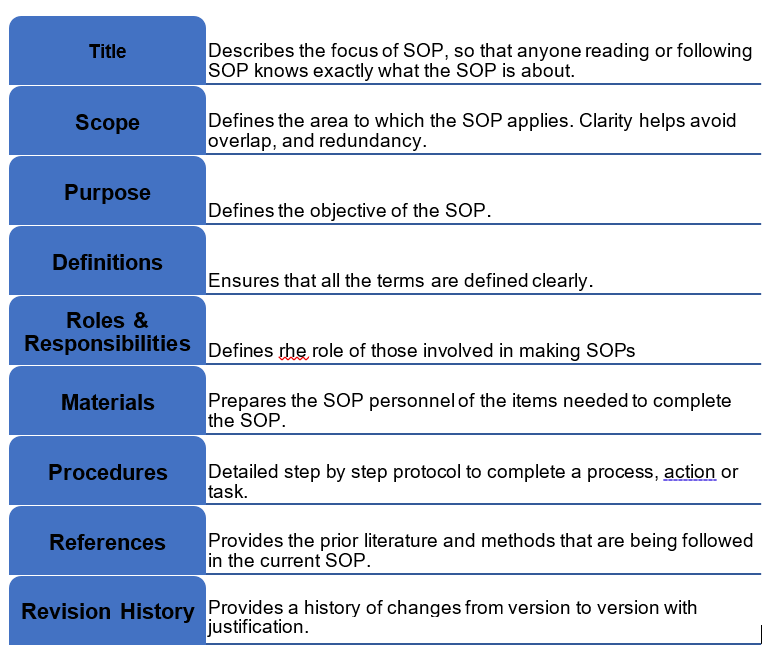

Elements of SOP

The instructions in an SOP should always begin with active verbs such as begin, enter, start, analyze or submit. Any forms, logs or other documents that are needed should be attached to the SOP. Examples of attachments are coversheet text for document approval, an authorized copy log template, a staff training documentation record and an annual review coversheet.

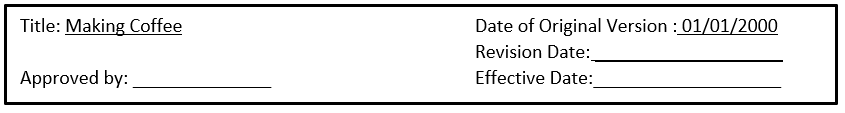

The revision history of an SOP is extremely important. It includes what has changed and when. It is especially helpful in case of inspections, accidents or any legal implications.
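A revision history can be thought of as an append-only log of what changed and when. The sketch below is only an illustration under that assumption; the class names, fields and sample entries are hypothetical and do not come from any regulation or from a particular document-control system.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class Revision:
    """One entry in an SOP revision history (hypothetical fields)."""
    version: str
    effective: date
    change_summary: str  # what has changed in this version


@dataclass
class RevisionHistory:
    entries: List[Revision] = field(default_factory=list)

    def add(self, version: str, effective: date, change_summary: str) -> None:
        # Entries are only appended, so earlier versions stay on record.
        if self.entries and effective < self.entries[-1].effective:
            raise ValueError("revisions must be recorded in date order")
        self.entries.append(Revision(version, effective, change_summary))

    def version_in_effect(self, on: date) -> Optional[Revision]:
        """Return the revision that was in effect on a given day, if any."""
        current = None
        for entry in self.entries:
            if entry.effective <= on:
                current = entry
        return current


# Hypothetical sample data for illustration only.
history = RevisionHistory()
history.add("1.0", date(2021, 3, 1), "Initial release")
history.add("1.1", date(2022, 3, 1), "Updated equipment model in step 4")

in_effect = history.version_in_effect(date(2021, 12, 15))
print(in_effect.version)  # prints 1.0
```

Looking up the version in effect on a given date is the kind of question that comes up during an inspection or an accident investigation, which is why previous versions are kept rather than overwritten.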

Example of SOP Revision

SOP is an important element of FDA inspection.

Good Clinical Practices

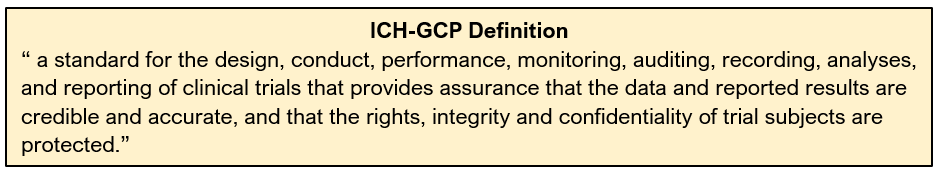

Good Clinical Practice (GCP) is defined as an international standard for the design, conduct, performance, monitoring, auditing, recording, analysis and reporting of clinical trials or studies. GCP compliance provides public assurance that the rights, safety and well-being of human subjects involved in research are protected.

GCP is an international quality standard that is provided by the International Conference on Harmonisation (ICH). ICH-GCP harmonizes technical procedures and standards; improves quality; speeds time to market and decreases the cost to sponsors and the public.

The need for such a standard grew out of infamous cases, such as the large-scale trials that Nazi physicians conducted on unwilling prisoners during World War II and the syphilis studies (1932–1972) in which Black American men were not provided information on the study. Cases like these led to the Declaration of Helsinki, an agreement between countries that there needed to be a global standard by which all trials are conducted.

Good Clinical Practice is that standard. It protects those in a trial, but also those whose treatment will depend on the data. It essentially ensures that the rights of trial participants are protected, along with the rights of all those given a drug or intervention in the future based upon that data.

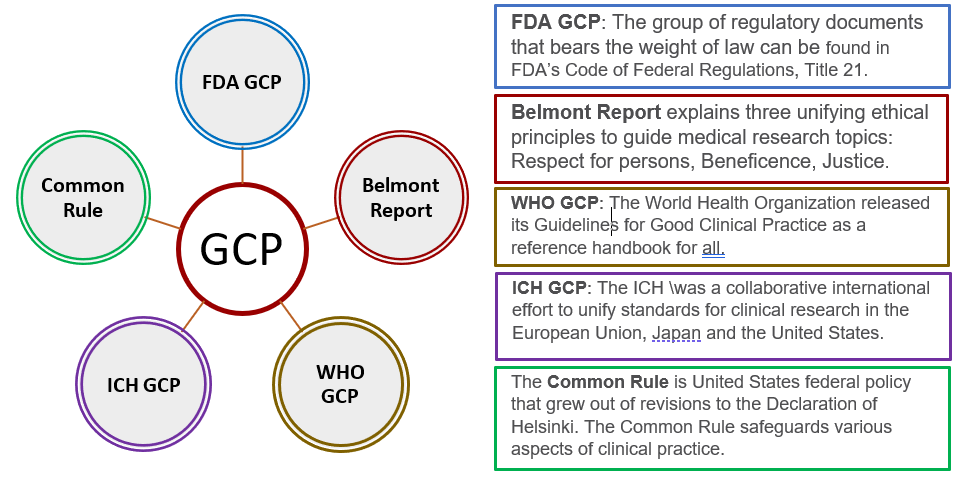

Several codes, reports and regulations laid the foundation of GCP: the Nuremberg Code (1947), the Declaration of Helsinki (1964), the Belmont Report (1979), the International Conference on Harmonisation guideline (ICH-GCP), International Organization for Standardization (ISO) 14155 and the Code of Federal Regulations.

In 1997, the FDA endorsed the GCP Guidelines developed by ICH. ICH guidelines have been adopted into law in several countries (UK/Europe), but are used as guidance by the FDA in the form of GCP.

Goals of GCP are to adhere to ethical standards while generating quality data by:

- Protecting the rights, safety and welfare of humans participating in research

- Assuring the quality, reliability and integrity of data collected

- Providing standards and guidelines for the conduct of clinical research

The steps to following a GCP in a protocol include:

Other things to think about while writing an SOP include clinical trial insurance / non-negligent harm cover, safety reporting, ethics committee safety and annual updates, clinical trial registries, sponsor reports, publication planning, logistics, transport, budgeting, drug/vaccine storage, sample transportation, export, storage, data archiving and maintaining SOPs, training records and equipment service contracts.

ICH-GCP has 13 core standards:

Ethics:

1. Ethical conduct of clinical trials

2. Benefits justify risks

3. Rights, safety, and well-being of subjects prevail

Protocol and science:

4. Nonclinical and clinical information supports the trial

5. Compliance with a scientifically sound, detailed protocol

Responsibilities:

6. IRB/IEC approval prior to initiation

7. Medical care/decisions by qualified physician

8. Each individual is qualified (education, training, experience) to perform his/her task

Informed Consent:

9. Freely given from every subject prior to participation

Data quality and integrity:

10. Accurate reporting, interpretation, and verification

11. Protects confidentiality of records

Investigational Products:

12. Conform to GMPs and used per protocol

Quality Control/Quality Assurance:

13. Systems with procedures to ensure quality of every aspect of the trial

In order to ensure compliance with GCP, several partners are required:

- Regulatory authorities, who review submitted clinical data and conduct inspections

- The sponsor, the company or institution/organization that takes responsibility for the initiation, management, and financing of the clinical trial

- The project monitor, who is usually appointed by the sponsor

- The investigator, who is responsible for the conduct of the clinical trial at the trial site and acts as team leader

- The pharmacist at the trial location, who is responsible for the maintenance, storage and dispensing of investigational products, e.g. drugs in clinical trials

- Patients, who are the human subjects

- An ethical review board or committee for the protection of subjects, appointed by the institution; if none is available, the authoritative health body in that country is responsible

- A committee to monitor large trials, which oversees sponsors, e.g. drug companies

Before any medical product or device comes to the market, research studies have to be conducted to collect data on usual and unusual events, conditions, and population groups, to test hypotheses formulated from observations and/or intuition, and to better understand and improve health outcomes. There are different types of medical research studies:

- Non-directed Data Capture: Vital Statistics

- Directed Data Capture & Hypothesis Testing: Cohort Studies, Case Control Studies

- Clinical Trials: Investigation of Treatment/Condition, Drug Trials

A properly planned and executed clinical trial is a powerful experimental technique for assessing the effectiveness of an intervention. A clinical trial is different from ‘Standard of Care’. A clinical trial:

- Involves human subjects

- Tests an ‘intervention’, be it a product, procedure or health care system, in order to improve the standard of care

- Measures effects over a period

- Usually has a comparison (control) group

- Must have a method to measure the intervention

- Focuses on unknowns: the effect of the intervention

- Must be done before a medication becomes part of the standard of care

- Requires everyone to stick to the protocol without deviation, whereas standard of care is all about clinical judgement and flexibility

Examples of clinical trials

Clinical trials range from small investigator-led fellowship-type studies that address a disease management question to large multi-center programs, run within collaborations or with product development sponsors, that assess new products for licensure. Clinical trials may deal with improving disease management in very sick children (for example severe malaria, malnutrition and management of seizures) or may be in-patient trials for product development, such as pharmacokinetic (PK) studies. They could also be phase II and III regulatory trials of drugs and vaccines for malaria and HIV.

SOPs in Clinical Research

The International Conference on Harmonisation (ICH) defines an SOP as “Detailed, written instructions to achieve uniformity of the performance of a specific function.” (ICH GCP 1.55).

In simple terms, an SOP is a written process and a way for the clinical site to perform a task the same way each time it is completed.

SOPs are not specifically mentioned in the FDA regulations. However, there are guidance documents and regulations that imply investigator responsibilities, and SOPs formalize those responsibilities.

21 CFR 312.53 states that the investigator will “ensure that all associates, colleagues, and employees assisting in the conduct of the study(ies) are informed of their obligations in meeting the above commitments.”

Examples of SOP topics include preparing and submitting initial IRB (Institutional Review Board) documents, preparing and submitting continuing review IRB documents, preparing and submitting amendment IRB documents, establishing and training the clinical study team, delegating responsibilities, establishing study files and establishing source documents.

SOPs benefit clinical trials in several ways. They:

- Ensure that all research conducted within the clinical site follows federal regulations, ICH GCP, and institutional policies to protect the rights and welfare of human study participants

- Provide autonomy within the clinical site

- Improve the quality of the data collected, thereby improving the science of the study

- Serve as a reference and guideline for how research will be conducted within the clinical site

- Are an excellent training source for new employees and/or fellows

Elements of SOP

As with the SOPs discussed in Chapter 5, the potential elements of an SOP related to clinical trials include:

- Header – title, original version date, revision date, effective date, approved by

- Purpose – why one has the policy

- Responsibilities – who the policy pertains to

- Instruction/Procedures – how to accomplish the items of the policy

- References – what the policy is based on

- Appendix – source documents/case report forms
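The elements listed above can be treated as a template that a draft SOP is checked against before it goes for approval. The sketch below is a minimal illustration, assuming every element is required; the class, the field names and the sample draft are hypothetical and are not a prescribed format.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class SopDocument:
    """Hypothetical container for the SOP elements listed above."""
    # Header
    title: str = ""
    original_version_date: str = ""
    revision_date: str = ""
    effective_date: str = ""
    approved_by: str = ""
    # Body
    purpose: str = ""           # why one has the policy
    responsibilities: str = ""  # who the policy pertains to
    procedures: str = ""        # how to accomplish the items of the policy
    references: str = ""        # what the policy is based on
    appendix: str = ""          # source documents/case report forms


def missing_elements(sop: SopDocument) -> List[str]:
    """List the elements that are still empty in a draft SOP."""
    return [f.name for f in fields(sop) if not getattr(sop, f.name).strip()]


# Hypothetical draft, using a title rather than a personal name.
draft = SopDocument(
    title="Preparing and Submitting Initial IRB Documents",
    purpose="Describe how initial IRB documents are prepared and submitted.",
    responsibilities="Study Coordinator",
    procedures="Begin by collecting the protocol and consent form.",
)
print(missing_elements(draft))
```

A site may reasonably decide that some elements, such as the revision date of a first version or the appendix, are optional; the check would then be limited to the fields that site treats as mandatory.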

SOPs should be written in clear, concise language, use the active voice, and refer to titles rather than names. Process mapping for writing SOPs includes determining which clinical site task needs mapping and laying out all the steps currently used to complete that task. “Mapping” involves taking each step in the task and making it more efficient and easier to follow.

Once the process of mapping is finished, the process map is converted to an outline for easy use. Once a task has been mapped, it should be tested.

Also, the implementation and monitoring of SOPs should be introduced gradually, prioritizing the most relevant SOPs and presenting them first. The Principal Investigator should approve all SOPs and designate an effective date. SOPs should be reviewed on a regular basis (usually annually) to ensure that they remain up to date with policies and regulations. Previous versions of SOPs should be retained. All staff should receive SOP training, and the training should be documented. SOPs should be accessible to staff.
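Two of these points, regular review and documented training, lend themselves to a simple automated check. The sketch below is one possible illustration and not a required tool: it assumes that "annually" means 365 days and that training is logged per job title, and all function names and sample data are hypothetical.

```python
from datetime import date
from typing import Dict, List, Set

# Assumption: an annual review is interpreted as at most 365 days apart.
REVIEW_INTERVAL_DAYS = 365


def review_overdue(last_reviewed: date, today: date) -> bool:
    """True if more than the review interval has passed since the last review."""
    return (today - last_reviewed).days > REVIEW_INTERVAL_DAYS


def untrained_staff(staff: Set[str], training_log: Dict[str, date]) -> List[str]:
    """Staff (by title) with no documented training on the SOP."""
    return sorted(title for title in staff if title not in training_log)


# Hypothetical sample data.
staff = {"Study Coordinator", "Research Nurse", "Data Manager"}
training_log = {
    "Study Coordinator": date(2023, 2, 1),
    "Research Nurse": date(2023, 2, 3),
}

print(review_overdue(date(2023, 1, 10), today=date(2024, 2, 1)))
print(untrained_staff(staff, training_log))
```

Here the SOP would be flagged as overdue for review, and the Data Manager would be listed as lacking documented training.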

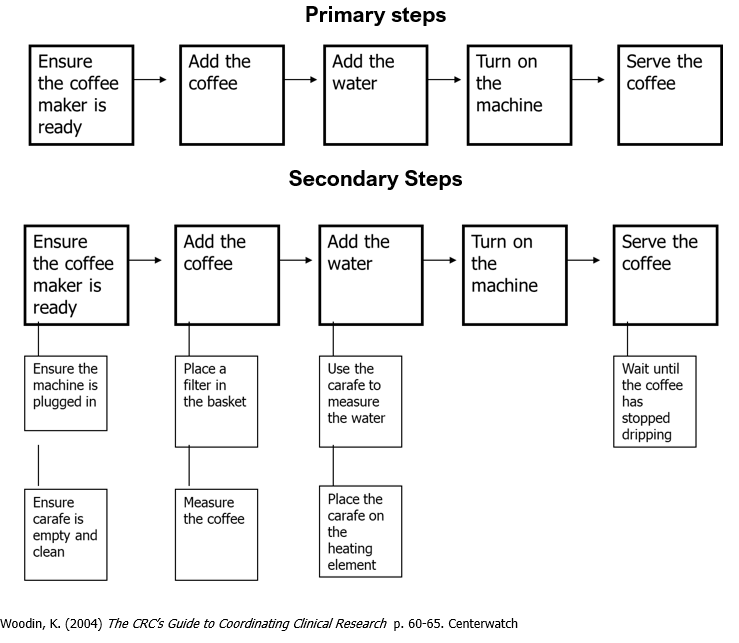

Process Mapping for Making a Cup of Coffee

The process mapping for making a cup of coffee can be divided into primary and secondary steps. One advantage of a two-tiered system is that SOPs will rarely need to be changed, whereas guidelines may need to be changed or updated more frequently due to changes in organizational structure or equipment.

1.0 Purpose

To ensure that clinical site personnel who want coffee make it appropriately and according to clinical site standards.

2.0 Responsibilities

Clinical site personnel who want to make coffee.

3.0 Instructions

3.1 Ensure coffee maker is plugged in and the carafe is clean and empty.

3.2 Place a filter in the coffee receptacle and add the appropriate amount of coffee.

3.3 Fill the carafe with the desired level of water and pour into the water reservoir.

3.4 Place the carafe on the heating element and turn the machine on.

3.5 When the coffee has stopped dripping into the carafe, it is ready to serve.

SOP vs MOP

The terms Standard Operating Procedure (SOP) and Manual of Procedures (MOP) are often used interchangeably, but they differ in scope.

An SOP provides general information that is to be utilized throughout any research study.

A MOP is written specifically for a particular research study and incorporates elements of the SOP.

- The MOP should be written so that anyone in your clinical site can follow the procedures for that study and find all relevant materials.

- The MOP should be extremely detailed.