- Author:

- Matt Lewin, Kathleen Hart

- Subject:

- Computer Science, Information Science

- Material Type:

- Textbook

- Level:

- Community College / Lower Division, College / Upper Division, Graduate / Professional, Career / Technical

- License:

- Creative Commons Attribution

- Language:

- English

- Media Formats:

- Downloadable docs, Text/HTML

Washington State Department of Licensing: Data Stewardship

Overview

The Washington State Department of Licensing contracted with the University of Washington to create an educational resource that introduces data stewardship principles. The course breaks down key concepts for individuals who are new to data stewardship and for those who wish to learn to think of data as an asset.

Author Note & Copyright Statement

Author Note

Nic Weber, Information School, University of Washington

Kathleen M. Hart, Data Stewardship Program, Washington State Department of Licensing

Jessica Christie, Data Stewardship Program, Washington State Department of Licensing

Correspondence concerning this e-book should be emailed to datastewards@dol.wa.gov.

Copyright Statement

Except where otherwise noted, Data Stewardship: Washington State Department of Licensing, is available under the CC-BY 4.0 license. All logos and trademarks are property of their respective owners. Sections used under fair use doctrine (17 U.S.C. § 107) are marked.

This resource may contain links to websites operated by third parties. These links are provided for your convenience only and do not constitute or imply any endorsement or monitoring by the Department of Licensing. Please confirm the license status of any third-party resources and understand their terms of use before reusing them.

Introduction

Data is a relational concept. It can mean different things to different stakeholders in different contexts. In the context of the Washington State Department of Licensing (DOL), this relational aspect of data and the challenges of data management were succinctly described as follows:

DOL has a diverse collection of applications to support its operational needs. There are many common data elements across these applications that are currently being defined and used separately and inconsistently.

While this usually has no impact on the operation of the individual applications, it creates problems when trying to match and accumulate data from multiple systems for analysis and forecasting. The solution to this problem is to centrally define and manage this data so it is referenced and used consistently by all applications.[1]

The goal of this course is both to introduce terminology and techniques for working with data and to foreground principles of effective data stewardship. In doing so, we will try to help reduce the complexity of managing and providing services for data across DOL.

Structure of course

The book is structured around three chapters:

Data Stewardship - Fundamental Concepts: In this chapter a working definition of data is introduced. We will discuss how data and collections of data can be differentiated by types and roles. We will also cover metadata and the process of organizing and structuring documentation to make data more accessible and useful to stakeholders. We will conclude with an overview of data governance (and where a data steward fits into governance at DOL) as well as data ethics.

Data Stewardship - In Practice: The second chapter covers best practices in data management and records management and disposal, and how to apply concepts of data quality. We will also cover the selection and use of standards related to data and metadata, and how to serve stakeholders of data at DOL through data interviews.

Data Stewardship - Applications: In the final chapter we will discuss data infrastructures including repositories for storing and preserving data, how to select and apply standards to data (and metadata), techniques for cleaning or tidying data, and some general applications for managing databases, understanding emerging technologies like artificial intelligence, and principles of visualizing data.

Each chapter has a set of Intended Learning Outcomes (ILOs), in other words what you should be able to take away from and understand upon reading the chapter; suggested readings where you can dive deeper into a topic of interest; as well as general working definitions that you can use for future reference.

[1] Quest Information Systems, Inc. (2008). Data Acquisition and Management Practices Study: Project Summary Report. Washington State Department of Licensing.

Data Stewardship - Fundamental Concepts

This first chapter introduces basic concepts related to data stewardship and working with data more broadly in the context of the public sector. We begin by first unpacking the concept of data and explaining how to approach various contextual ambiguities about what constitutes data. We then review some basic concepts related to data stewardship such as management of data and data quality. We wrap up this introduction by discussing the concept of data governance and data ethics.

Defining Data

The Department of Licensing defines data as:

Numbers and facts that have not been grouped or analyzed. (Data that is grouped becomes statistics. Data that is analyzed becomes data analysis.) This includes numbers and facts in electronic records, paper records, emails, text messages, recordings, and images.[1]

This working definition clarifies what the Department of Licensing considers data and how data is to be successfully managed over time. This definition also draws a clear distinction between a type of information object (e.g., electronic records, paper records, text messages, etc.) and the role that the information object is supposed to play (e.g., grouped numbers become statistical data, analyzed information objects become data analysis, etc.). The point is that data need to have an application to become meaningful to a customer, but in the abstract data are simply information objects with potential for use in many different contexts.

It is often overwhelming to think of all the different ways that data may be used. Instead, it is more helpful to think about data as having type and role distinctions: a type is rigid (such as a format) and a role is fluid (it can change given a context). A simple example outside the context of data will help make this clear:

- Jay Inslee is a person. This is a type.

- Jay Inslee is the Governor of Washington State. This is a role. He will play this role for a fixed amount of time. After his term as governor expires, he will cease to play this role. But he will still be a person regardless of whether he is the Governor of Washington State.

Data have similar types and roles. A tabular dataset such as a comma-separated values (CSV) file or Excel document will have a type of structure (rows, columns, and values). Unless we take some purposeful action to transform this data, it will remain tabular as a type of data.

This tabular data (type) might be evidence of some real-world phenomenon: it might be a set of species occurrence records, the precipitation and temperature of a particular place, the number of vehicles that pass through a certain point at a certain time, or even a vehicle registration number. These are different roles that data can play. Without context these are just numbers or files (information objects) that are waiting to be used as evidence by a data stakeholder (as in the definition offered by DOL above).

This evidential role of data can shift and change depending on who is using the data, and for what purpose. Thinking about data as having roles and types helps us as data stewards to think about what exactly a stakeholder wants and needs. If we understand what role the data is supposed to play, we can better find the right type of data for a customer or stakeholder.

Types: Structured vs Unstructured Data

Structure is often a helpful distinction in identifying types of data.

Structured data means that there is a predetermined form or logic to how information is arranged and presented to a user. A spreadsheet is an example of structured data: it has rows, columns, and values. This structure communicates to a user how the data values should be interpreted.

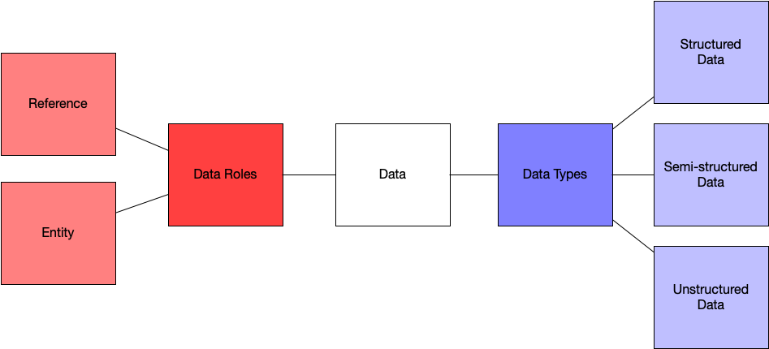

Unstructured data means that there is no predetermined way in which data are supposed to be presented and used by a customer. Unstructured data can be, for example, emails, text messages, other formal documents, videos, or even audio recordings. As with much of data stewardship, real-world data rarely falls exactly in one category or the other. Often data are semi-structured, which is when a set of data has a file format (such as XML or JSON) but no predefined form or scheme that explains, for example, what a column of data should be. Below is a helpful figure: we can think about data as being structured or unstructured, as well as semi-structured (such as XML or JSON). We will discuss these types of data as well as metadata in the next section of the course.
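The three types described above can be sketched in a few lines of Python. This is only an illustration: the column names (`route_id`, `milepost`, `truck_pct`), the `note` field, and the sample email text are hypothetical and do not come from any DOL system.

```python
import csv
import io
import json

# Structured: the header row fixes what every column means for every record.
structured = "route_id,milepost,truck_pct\n410,116.26,9.87\n12,185.62,4.93\n"
rows = list(csv.DictReader(io.StringIO(structured)))

# Semi-structured: JSON provides a file format, but records need not share fields.
semi_structured = '[{"route_id": 410, "truck_pct": 9.87}, {"route_id": 12, "note": "no count"}]'
records = json.loads(semi_structured)

# Unstructured: free text carries no predefined fields at all.
unstructured = "Hi team, the recorder on route 410 reported about ten percent trucks."

# Compare which fields each record exposes.
structured_fields = [sorted(r.keys()) for r in rows]
semi_fields = [sorted(r.keys()) for r in records]

print(structured_fields)  # every row has the same fields
print(semi_fields)        # fields differ from record to record
print(len(unstructured.split()), "words, no fields")
```

In the structured case a steward can rely on every record having the same fields. In the semi-structured case each record has to be inspected, and in the unstructured case any fields would have to be extracted by hand or by additional tooling.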

Roles: Entity vs Reference Data

The Department of Licensing, like any complex organization, has a variety of data that it collects and manages over time to execute business functions. DOL data can serve a variety of purposes, and these “data roles” vary by not just the type of data that are collected and managed, but how they are used. This is described succinctly by DOL in the following way:

DOL has a diverse collection of applications to support its operational needs. There are many common data elements across these applications that are currently being defined and used separately and inconsistently. While this usually has no impact on the operation of the individual applications, it creates problems when trying to match and accumulate data from multiple systems for analysis and forecasting. [2]

A helpful way to think about the roles that data play at DOL is that they can serve as either reference data or entity data.

Reference Data: This is the most straightforward type of data. It is comprised of simple lists which are directly defined at the enterprise level for use in all applications. This includes items like States, Counties, Fee Types, etc. These lists tend to be defined by outside sources and change very infrequently.

Entity Data: This is the more complex type of data. It is comprised of data that is common across applications, but instead of being centrally defined, is entered or generated and updated within multiple applications. The classic example of this is customer data. This data tends to be complex in structure and change very frequently.

Both reference and entity data in DOL are roles that different information objects can play. This relational nature of the data helps us to understand when it is appropriate to, for example, apply a broad standard for editing or cleaning data, apply a data quality checklist, or even recommend a data source to a customer. Reference data should depend upon external sources for validation and reliability (components of data quality that we will review in the next chapter). Entity data, as described above, can have a more relational or real-time application that can shift or change given a context.

A breakdown of what we’ve covered in this section so far: Data have types and roles that impact how they are used as evidence. Data types are distinguished by the structure or format of the data, and data roles are distinguished by how the data might be used (as reference data or as entity data).
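One way to see the difference between reference data and entity data is to check entity records from several applications against a single, centrally defined list. The sketch below assumes two hypothetical applications (`licensing_app` and `vehicle_app`), a hypothetical `county` field, and a small subset of Washington county names. It is not a description of how DOL systems actually store this data.

```python
# Reference data: a short, centrally defined list that changes very infrequently.
# (A small illustrative subset of Washington county names.)
REFERENCE_COUNTIES = frozenset({"King", "Pierce", "Spokane", "Thurston"})

# Entity data: customer records entered separately in two hypothetical applications.
licensing_app = [
    {"customer_id": 1, "county": "King"},
    {"customer_id": 2, "county": "king co."},  # entered inconsistently
]
vehicle_app = [
    {"customer_id": 3, "county": "Thurston"},
    {"customer_id": 4, "county": "Spokane"},
]


def find_nonconforming(records, reference_values):
    """Return the records whose county is not in the central reference list."""
    return [r for r in records if r["county"] not in reference_values]


combined = licensing_app + vehicle_app
problems = find_nonconforming(combined, REFERENCE_COUNTIES)
print(problems)  # [{'customer_id': 2, 'county': 'king co.'}]
```

Each application works fine on its own, but the inconsistent value only becomes visible when records are combined for analysis, which is the problem the DOL quotation above describes.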

Categories of Data

Thus far, we’ve described data by types and roles that are pre-established based on some aspect of the data or its use. Another way to differentiate the roles that data might play within an organization is by categorizing data with respect to its content.

DOL has helpfully developed a four-part categorization that includes the following types of data content:

| Category | Explanation |
| --- | --- |
| Category 1: Public Information | Information the Agency can or currently releases to the public. It does not need protection from unauthorized disclosure, but does need integrity and availability protection controls. Examples of Category 1: Agency information available on the internet, through brochures and other publications. |
| Category 2: Sensitive Information | Information the Agency doesn’t generally release to the public unless specifically requested and allowable by law. Examples of Category 2: Internal business procedures, policies, and standards. |
| Category 3: Confidential Information | Information specifically protected from disclosure by law. This may include: a. Personal information. b. Information concerning Employee personnel records. c. Information regarding Information Technology infrastructure and security of computer and telecommunication systems. Examples of Category 3: Driver license numbers, driver license photos, Employee personnel files, and computer or network passwords. |
| Category 4: Confidential Information requiring special handling | Information specifically protected from disclosure by law and with: a. Especially strict handling requirements dictated by statutes, regulations, or agreements. b. Serious consequences that can arise from unauthorized disclosure, such as threats to health and safety, or legal sanctions. Examples of Category 4: Social Security numbers, bank account or debit card numbers, tort claim or lawsuit files, medical or disability information, firearm serial numbers. |

Table 1 DOL Data Classification and Handling Standards

Note. This categorization comes from the following publications: OCIO 141.10[3] and DOL policy 1.7.11[4].

These categories are essential not only for understanding how data should be governed (discussed in depth below), but also for identifying the role that data might appropriately play. For example, if a customer requests Category 4 data, as stewards at DOL we would need to ensure that the customer has proper credentials to accept this data and verify that the data is transferred to the customer in a secure and reliable manner.
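The Category 4 example above can be sketched as a simple release check. The rule encoded here is a deliberate simplification for illustration and is an assumption, not DOL policy: the function name `may_release` and the two boolean inputs are hypothetical, and the handling of Categories 2 and 3 in particular is a placeholder for whatever the governing policies actually require.

```python
from enum import IntEnum


class DataCategory(IntEnum):
    """The four DOL data categories from Table 1."""
    PUBLIC = 1
    SENSITIVE = 2
    CONFIDENTIAL = 3
    CONFIDENTIAL_SPECIAL_HANDLING = 4


def may_release(category, has_credentials, secure_transfer):
    """Hypothetical release rule, simplified for illustration only."""
    category = DataCategory(category)
    if category is DataCategory.PUBLIC:
        # Public information can be released to anyone.
        return True
    if category is DataCategory.CONFIDENTIAL_SPECIAL_HANDLING:
        # From the text: proper credentials AND a secure, reliable transfer.
        return has_credentials and secure_transfer
    # Categories 2 and 3: this sketch only checks credentials (an assumption).
    return has_credentials


print(may_release(DataCategory.PUBLIC, False, False))
print(may_release(DataCategory.CONFIDENTIAL_SPECIAL_HANDLING, True, False))
print(may_release(DataCategory.CONFIDENTIAL_SPECIAL_HANDLING, True, True))
```

Encoding the category as an explicit value attached to a dataset, rather than leaving it implicit, makes it possible to apply the same check every time a request arrives.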

Data Collections

Another helpful distinction to draw is whether data are standalone products or collections of multiple data sources that are of value to a stakeholder. For example, the WA State Department of Transportation collects traffic data from each route or highway in the state. Individually, a data source may be about just one specific highway, but in total all data about all highways in Washington constitute a collection of data.

The National Science Board provides a helpful distinction between three types of data collections:

- General Data Collections: These data have minimal processing or quality checks and may not conform to standards for format and structure—if such standards exist at all. These collections usually are developed by and for a specific internal DOL client and may not be preserved beyond the end of a project. Many thousands of these collections likely exist throughout the department—stored on shared drives or even personal desktops.

- Community Data Collections: These collections may follow standards for a community of potential clients, whether by adoption of existing standards or by developing new standards. Community data collections may receive some direct funding from DOL or be created to comply with a particular records management requirement for making data accessible to the public.

- Reference Data Collections: These collections serve large communities, conform to robust standards, and are sustained indefinitely. They have large budgets, diverse and distributed stakeholders, and clients that depend on these data, as well as established governance structures.[5]

FAIR Data

An emerging shorthand description for open data, applicable to any sector, is the concept of F.A.I.R.: FAIR data should be Findable, Accessible, Interoperable, and Reusable. We will discuss this concept in more depth throughout this course. Having this shorthand definition of what we try to achieve in data stewardship is a helpful reminder of the steps needed to make data truly accessible over the long term.

Data Stewardship

As data stewards, our job will include a variety of department-specific tasks, but more generally stewardship is used to describe “accountability and responsibility for data and processes that ensure effective control and use of data assets. Stewardship can be formalized through job titles and descriptions, or it can be a less formal function driven by people trying to help an organization get value from its data.”[6]

Some general tasks that might be included in data stewardship:

- Creating and managing metadata.

- Documenting rules and standards.

- Managing data quality issues.

- Executing operational data governance activities.

- Setting and managing guidelines around data access.

Data Quality

From the ISO 8000 definition, we take data quality to be “…the degree to which a set of characteristics of data fulfills stated requirements.”[7] In simpler terms, data quality is the degree to which a set of data or metadata is fit for use. Examples of data quality characteristics include completeness, validity, accuracy, consistency, availability, and timeliness. In the final chapter of this book, we will discuss strategies and techniques for applying data quality standards to DOL data.
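Two of the characteristics listed above, completeness and validity, can be measured directly. The following is a minimal sketch under stated assumptions: the sample records, the field names (`plate`, `county`, `expires`), and the choice of a `YYYY-MM-DD` date pattern as the "stated requirement" are all hypothetical.

```python
import re

# Hypothetical records; empty strings and None both count as missing values.
records = [
    {"plate": "ABC1234", "county": "King", "expires": "2022-06-01"},
    {"plate": "", "county": "Pierce", "expires": "2021-13-40"},
    {"plate": "XYZ9876", "county": None, "expires": "2023-01-15"},
]

REQUIRED = ("plate", "county", "expires")
# Stated requirement (assumed): dates are written YYYY-MM-DD with a plausible month and day.
DATE_PATTERN = re.compile(r"^\d{4}-(0[1-9]|1[0-2])-(0[1-9]|[12]\d|3[01])$")


def completeness(rows, fields):
    """Share of required values that are present (not None and not empty)."""
    total = len(rows) * len(fields)
    present = sum(1 for r in rows for f in fields if r.get(f) not in (None, ""))
    return present / total if total else 1.0


def validity(rows, field, pattern):
    """Share of non-empty values in one field that match the expected pattern."""
    values = [r[field] for r in rows if r.get(field)]
    if not values:
        return 1.0
    return sum(1 for v in values if pattern.match(v)) / len(values)


print(f"completeness: {completeness(records, REQUIRED):.2f}")
print(f"validity of 'expires': {validity(records, 'expires', DATE_PATTERN):.2f}")
```

Scores like these only describe fitness for use relative to the requirement that was written down, which is why the definition ties quality to "stated requirements" rather than to the data alone.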

Data Governance

Data Governance is a collection of practices and processes which help to ensure the formal management of data assets within an organization. Data governance includes not just rules or regulations, but also clear definitions of what data management means in a specific organizational context and how data quality, security, and preservation should be carried out (e.g., through stewardship). While not exhaustive, most data governance models will include some combination of the following elements:

- Authority and Control - Who will make decisions, and where are decision making processes documented, for planning, monitoring, and enforcing data management?

- Security - What are the requirements for secure, trusted, authentic data and access regulations in an organization? This includes identity management, as well as the following:

  - Planning - How is security described across the organizational assets under management?

  - Monitoring - How often are security plans and responsibilities updated, and what will constitute a necessary revision of security plans?

  - Evaluating - How are security plans and monitoring evaluated and by whom?

- Accountability - If planning, monitoring, or security requirements are not followed, what are the consequences, and with whom does responsibility for enforcement ultimately lie?

- Quality - What constitutes quality assurance and who is responsible for carrying out either evaluation of quality or updating of data quality standards?

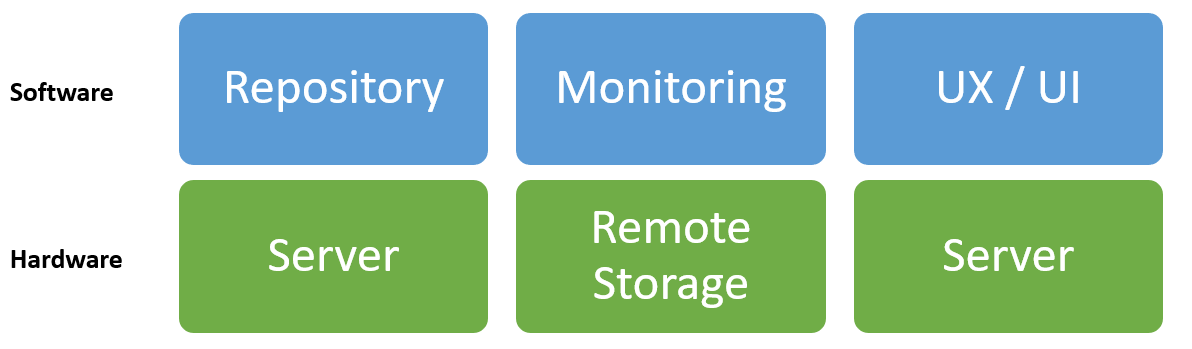

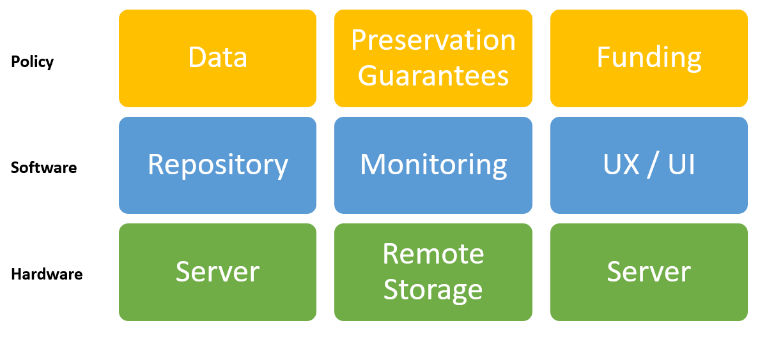

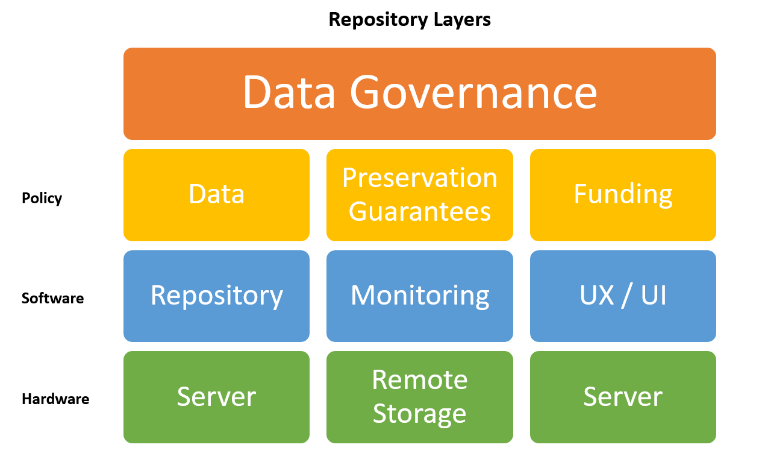

In future chapters we will discuss data storage and data infrastructures, but it helps to foreground those discussions with a simple model depicting the central role of data governance. Data governance includes decisions about not just data, but also the hardware, software, and implementation of policies throughout a data lifecycle. A good data governance model will specify how each of these different aspects of data management should be carried out and will be the basis for creating policies that govern individual data stewardship work.

Data Governance in Washington State: The One Washington[8] project is the largest data and technology project in recent memory for the State of Washington. Between 2021 and 2027 it will restructure and replace almost all administrative systems with an Enterprise Resource Planning tool and will reorder all the data those various systems contain. The project has published a Data Governance Strategy document[9] that provides some insight into data governance in practice for the State of Washington.

Data Ethics

Ethics can be practically framed as “the study of the general nature of morals and of the specific moral judgments or choices to be made by a person” (Burns, 2012).[10] This definition situates ethics as a matter of individual choice, but of course the choices we make as individuals have broad impacts on the communities we are part of, serve, and wish to see flourish. That is, ethics is often practically framed as the result of individual choices and actions, but ethics also encompasses the implicit and explicit values of an institution, community of practice, or even group of data stakeholders.

The relationship between individual choice and collective action is particularly relevant for data stewardship where you will need to collaboratively work to provide regular and unfettered access to resources needed to conduct research, develop guidelines or regulations that govern ethical behavior, and practically implement standards that encode or formalize these rules in a data governance model.

It is important to acknowledge at the outset that data ethics, morals, and judgements, whether individual or collective, don’t arise from the ether: they are grounded in beliefs about what is right, just, or serves the greater good given an alternative set of choices. The ethical dilemmas faced by a community of practitioners are often about deciding what is right, how justice is enacted and preserved, or which choices can produce the greatest good for the communities on whose behalf we work.

Data ethics can generally include any and all of the following aspects of data:

- Ownership - Who is the responsible party that can make a claim to the data? This is often based on licenses, rights of individuals who own data about themselves, or even state and federal regulations about data access.

- Transparency - What do data contain, and how are data about individuals or institutions clearly and coherently documented for potential use? This should include any limitations in the quality or validity of the data.

- Consent - If data contains information about people or groups of people, then there must be some documented consent that the data may be used for a specific purpose. Consent often goes beyond a license because it entails not just that the data can be accessed and used, but also whether the data subjects have given their permission to do so.

- Privacy - If data contains information that may be traceable to an individual or institution then there should be guidance or regulation on how the privacy of those stakeholders can be preserved, and who has access to data at what level of privacy preservation.

- Openness - Data access often falls on a spectrum from freely accessible to anyone with an internet connection to accessible only through a data sharing agreement, or even through a formal data records request.

While these are not the only issues that you may face as a data steward working at DOL, it is often helpful to refer to these different ethical questions to determine what is at stake when deciding how to prepare data, how to set data access controls, and what stakeholder privacy means in the context of data release.

DOL’s Policy 1.7.12[11] addresses data ethics directly. The initial statements of the policy emphasize the trust relationship between a government agency and the people of the state as the basis for data ethics in the agency.

Here is an excerpt:

- Customer Trust: As the steward of customers’ data, the Agency has an obligation to ethically handle data in a manner that builds and maintains trust.

- Respecting the Person Behind the Data: The Agency respects customers’ right to privacy and will only collect the minimum personal information required by law to fulfill business purposes.

- Transparency in the Collection and Use of Data: Customers have the right to know how the Agency and others use their data. The Agency will be transparent about: What data we collect; How we use it; and Who we share it with and for what purposes.

Summary

In this chapter we have introduced several important concepts for data stewardship, including how data might be differentiated based on its type or its role. Types of data are based on structure, and these types are usually fixed and unchanging, whereas the role of data, such as entity data or reference data, can vary given the application or context in which data are used. We also covered four broad categories used to describe data that are managed in DOL: public information, sensitive information, confidential information, and confidential information requiring special handling. These categories help to further differentiate data by the role they might play for analysis by a customer, or in data governance that attempts to systematically create rules for data access. We also discussed some principles that govern ethical handling of data, including ownership of data, privacy, and consent.

Further Reading

- Borgman, C. L. (2015). What are Data? In Big data, little data, no data: Scholarship in the networked world. MIT press.

- Washington State Department of Licensing. (2020). Data Stewardship Framework. https://www.dol.wa.gov/privacy/docs/data-stewardship-framework.pdf

- Washington Consolidated Technology Services Agency (WaTech). (2021). Washington State Privacy Principles. https://watech.wa.gov/sites/default/files/public/privacy/WSAPP.pdf

Example 1

Throughout the first chapter of this book we've discussed the idea that data are fundamentally relational: they mean different things to different customers at different points in time. To understand this relational nature of data, it can be helpful to look at an example of how the same data are displayed to customers in different settings. These settings drive the stewardship of data, given that the needs of a customer may vary over time.

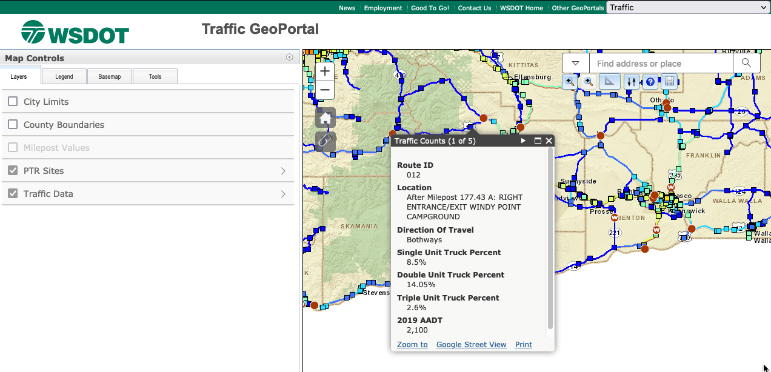

Below is a screenshot of the Washington Department of Transportation's Traffic GeoData Portal.[12] This map displays real-time traffic count data from routes and interstates throughout Washington.

This traffic map is interactive: it allows a customer to select a route, or even a point on a route, where traffic data is collected and see real-time estimates that answer questions like "How many single-unit trucks were on this route in the last hour?" This view of the data is useful for getting a quick overview of the state of traffic at any one moment in time. But let's assume that, instead of a quick overview of the real-time data, an analyst at DOL wants access to the data that is powering this visualization. They may want this data for a variety of reasons. For example, they may want to map all the single-unit trucks on the road on June 1, 2021 and determine what percentage of them are licensed in the state of Washington. The WSDOT traffic geodata portal allows us to view this data by selecting a polygon and "printing" the data. If we choose to do this, then we get an easy-to-manipulate data table that looks like the following:

| Object ID | Route ID | Location ID | Direction of Travel | Sngl. Unit Trk % | Dbl. Unit Trk % |
| --- | --- | --- | --- | --- | --- |
| 1220 | 410 | At Milepost 116.26 A: PERMANENT TRAFFIC RECORDER S818 WEST | Bothways | 9.87 | 2.14 |
| 3624 | 12 | At Milepost 185.62 A: PERMANENT TRAFFIC RECORDER S818 EAST | Bothways | 4.93 | 4.31 |
| 2005 | 12 | Before Milepost 185.44 A: RIGHT WYE CONNECTION SR 12 | Bothways | - | - |
| 3809 | 12 | At Milepost 185.25 A: PERMANENT TRAFFIC RECORDER S818 SOUTH | Bothways | 7.45 | 7.18 |
| 2759 | 12 | After Milepost 185.48 A: RIGHT WYE CONNECTION SR 12 | Bothways | - | - |
| 4329 | 12 | From Milepost 188.65 A to Milepost 189.87 A | - | - | - |
| 2163 | 12 | From Milepost 185.48 A to Milepost 188.65 A | - | - | - |
| 2323 | 410 | From Milepost 114.40 A to Milepost 116.37 A | - | - | - |
| 4299 | 12 | From Milepost 178.86 A to Milepost 183.45 A | - | - | - |
| 1434 | 12 | From Milepost 183.45 A to Milepost 185.48 A | - | - | - |

Table 2 Polygon printing of WSDOT traffic geodata portal data.

Note. This table is much more informative and useful for analysis than a map. It gives us variables (such as Object ID, Route ID, and Location). It also gives us observations, such as the percentage of single-unit trucks on Route 410 at milepost 116.26 on June 1, 2021 at 12:00pm.
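To show why a table is easier to analyze than a map, here is a minimal sketch that reads a few rows of Table 2 into Python. Several assumptions are made for illustration: the rows are pasted in as pipe-delimited text, the location descriptions are shortened, the column names in `COLUMNS` are our own, and a lone `-` is treated as a missing value.

```python
# A few rows from Table 2, with location descriptions shortened for readability.
table = """\
1220 | 410 | At Milepost 116.26 A | Bothways | 9.87 | 2.14
3624 | 12 | At Milepost 185.62 A | Bothways | 4.93 | 4.31
2005 | 12 | Before Milepost 185.44 A | Bothways | - | -
3809 | 12 | At Milepost 185.25 A | Bothways | 7.45 | 7.18
4329 | 12 | From Milepost 188.65 A to Milepost 189.87 A | - | - | -
"""

# Hypothetical column names chosen for this sketch.
COLUMNS = ("object_id", "route_id", "location", "direction",
           "single_unit_pct", "double_unit_pct")


def parse_row(line):
    """Split one pipe-delimited line into a dict, mapping '-' to None."""
    cells = [cell.strip() for cell in line.split("|")]
    row = dict(zip(COLUMNS, cells))
    for key in ("single_unit_pct", "double_unit_pct"):
        row[key] = None if row[key] == "-" else float(row[key])
    if row["direction"] == "-":
        row["direction"] = None
    return row


rows = [parse_row(line) for line in table.splitlines() if line.strip()]

# Keep only locations that actually report a single-unit truck percentage.
measured = [r for r in rows if r["single_unit_pct"] is not None]

print(len(rows), "rows,", len(measured), "with truck percentages")
print([r["object_id"] for r in measured])
```

Making the missing values explicit is a small stewardship decision in its own right: an analyst who averaged the column without noticing the dashes would get an error or a misleading result.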

Questions:

- In the example above from WSDOT—is this entity data or reference data?

- Is the map an example of structured, unstructured, or semi-structured data? Why?

- According to the DOL data categories, what kind of data is this table?

[1] Department of Licensing. (2017). Structured Data Collection, Storage, and Retention (Administrative Policy 1.7.7). Inside Licensing: Internal Department of Licensing Intranet.

[2] Quest Information Systems, Inc. (2008). Data Acquisition and Management Practices Study. Washington State Department of Licensing.

[3] Office of the Chief Information Officer (OCIO). (2017). Securing Information Technology Assets Standards (Policy 141.10). https://ocio.wa.gov/policy/securing-information-technology-assets-standards

[4] Department of Licensing. (2020). Data Security: Employee’s Data Security Requirements (Administrative Policy 1.7.11). Inside Licensing: Internal Department of Licensing Intranet.

[5] National Science Board. (2005). Long Lived Digital Data Collections: Enabling research and education in the 21st Century. National Science Foundation. https://www.nsf.gov/geo/geo-data-policies/nsb-0540-1.pdf

[6] DAMA International. (2017). DAMA-DMBOK: Data Management Body of Knowledge (2nd Edition). Basking Ridge, NJ: Technics Publications.

[7] International Organization for Standardization (ISO). (2011). Data Quality: Part 1 – Overview. https://www.iso.org/standard/50798.html

[8] Office of Financial Management. (2022). One Washington. https://one.wa.gov/

[9] Data Governance Advisory Committee. (2020). One Washington Data Governance Strategy. https://ofm.wa.gov/sites/default/files/public/onewa/OneWa_Data_Governance.pdf

[10] Burns, S. A. (2012). Evolutionary pragmatism, A discourse on a modern philosophy for the 21st Century.

[11] Department of Licensing. (2020). Data Ethics (Administrative Policy 1.7.12). Inside Licensing: Internal Department of Licensing Intranet.

[12] Washington Department of Transportation. (2022). Traffic Count Data: Geospatial Open Data Portal. https://wsdot.wa.gov/about/transportation-data/travel-data/traffic-count-data

Data Stewardship - In Practice

Introduction

Library and Information Science (LIS) is a field that is concerned primarily with organizing, providing access to, and structuring information for effective use. In some cases this will mean working directly with people seeking information, and in other cases it will involve applying standards for the efficient discovery of information. As a data steward working in the public sector, it will be helpful to first gain a working knowledge of these concepts from LIS, and then learn how to apply them in a public sector data context. We will first review metadata and knowledge organization practices more generally. Then, we will look at the concept of a data management plan (DMP) and how DMPs can be useful in a public sector setting. Finally, we'll discuss the concept of a data interview, which can be used to meaningfully interact with customers that are seeking data.

Metadata

Metadata is often described as “data about data”. In some ways this is true, but this simple definition tends to obscure as much as it reveals about the importance of data documentation.

Metadata is most simply a set of standardized attribute-value pairs that provide contextual information about an object or artifact playing the role of data. In the following sections we will try to unpack concepts like attributes and values, and how they relate to descriptions of data. Perhaps the best way to do this is to introduce some features of metadata as it is typically used to organize, describe, and provide supplementary information about data. These features (such as class, sub-class, attribute, or instance) can apply to data at various levels of "granularity", that is, the level of specificity that ranges from general (e.g., a type of data) to a concrete example (e.g., a dataset that lives on your hard drive and has the exact title `mydata101.csv`). Here are features that are consistent across most metadata applications:

- Classes are the broadest concept in metadata applications. A class is used to categorize or identify features that can be used for a domain of objects. Domain here means something like an area of application, a group of users, or even a set of intended customers. For example, a class of alcoholic drinks might be wine. Classes can be further refined by sub-classes, which are made up of instances, properties, and values (or attributes of an instance).

- Sub-classes refine a class. A sub-class can be a more specific example of a class. Returning to our wine example, a sub-class of wines might be red, white, or rosé. Another sub-class could divide wines into sparkling or non-sparkling. Another sub-class could refine wines by their vintage, country of origin, or even the blend of grapes used to make a wine (Cabernet, Sauvignon Blanc, Malbec, etc.). The point is that a sub-class provides some way to divide or simplify the class that belongs to a domain. In the context of data stewardship, sub-classes aren't necessarily correct or incorrect; they simply help structure metadata about digital objects so that they can be more easily and accurately described. We judge classes and sub-classes of metadata not on their correctness but on their utility: how well they help describe, simplify, and retrieve data. For example, if we have a class of data called "Customer" and a sub-class called "Female Customers" we've likely created some distinction based on gender identity. Will this be a useful way to retrieve data for customers? Maybe, but the retrieval of data based on gender is likely too narrow a use to be a reliable way to create a sub-class for all Customer data. Instead, we might create a sub-class like "Customer-Demographics," which is more general and likely serves any analysis that makes use of customer characteristics (e.g., height, weight, education, gender identity, religion, reported race, etc.).

- Instances are observations or concrete examples of a class or sub-class. Returning to our wine, the class of Red Wine has a sub-class called Malbec. An instance of Malbec would be a bottle that was available for purchase—such as a Catena Malbec. A class or sub-class instance also has attributes or properties that further refine the object and specify why it is a member of that class or sub-class.

- Attributes are defining features of a class or sub-class and refer to instances. An instance is a member of a class if it has all the attributes of that class. For example, a mammal has certain features (reproductive organs, respiratory system, etc.) that define its base or necessary attributes for class membership. Canines as a sub-class have a more specific set of attributes that define membership in that sub-class. Returning to our wine example, a Catena Malbec is a Malbec and a red wine if and only if it has all the attributes that define a Malbec AND all the attributes that define a red wine.

- Relations are the ways that we relate different instances and classes to one another. An instance or a class can be related in one or many ways.

These different features of metadata are known as semantic features. That is, classes and attributes define the meaning of, and relationships between, data as individual items or records and collections of items and records. Another distinction in metadata is known as syntax or the features that structure or govern a particular application of semantic metadata features. The simplest way to understand metadata syntax is, like data, through how a metadata record is structured (or unstructured) and how the metadata conforms to a standard.
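To make the semantic features concrete, the wine example can be sketched in a few lines of Python. This is only an illustration: the class names follow the text, while the attribute names (`producer`, `vintage`, `color`, `grape`) and the vintage value are assumptions chosen for the example.

```python
from dataclasses import dataclass, fields


@dataclass
class Wine:
    """Class: the broadest category in this small domain."""
    producer: str
    vintage: int


@dataclass
class RedWine(Wine):
    """Sub-class: refines Wine by color."""
    color: str = "red"


@dataclass
class Malbec(RedWine):
    """Sub-class of RedWine: refines it further by grape."""
    grape: str = "Malbec"


# Instance: one concrete bottle. The vintage is made up for illustration.
bottle = Malbec(producer="Catena", vintage=2019)

# Attributes: the instance carries every attribute of Wine, RedWine, and Malbec.
attribute_names = [f.name for f in fields(bottle)]
print(attribute_names)

# Relations: membership follows the chain from sub-class up to class.
print(isinstance(bottle, Malbec), isinstance(bottle, RedWine), isinstance(bottle, Wine))
```

The bottle is a member of all three classes because it has all of their attributes, which mirrors the membership rule described for attributes above.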

Metadata Structures

The structure of a particular metadata record will often be either formal or informal and this means that, like data, we can think of there being structured metadata and unstructured metadata syntaxes.

Structured metadata is quite literally a structure of attribute and value pairs that are defined by a schema. Most often, structured metadata is encoded in a machine-readable format like XML or JSON. Crucially, structured metadata is compliant with and adheres to a standard that has defined attributes, such as Dublin Core, EML, or DDI.

Metadata schemas are used in structured metadata to define semantics, such as attributes (e.g., what do you mean by “creator” in ecology?); suggest controls of values (e.g., dates = MM-DD-YYYY); define requirements for being “well-formed” (e.g., what fields are required for an implementation of the standard); and provide example use cases that are satisfied by the standard.
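A minimal sketch of what a schema does in practice is shown below. The schema itself is hypothetical (it is not taken from any published standard): it names three attributes, marks two as required, and controls the date value with the MM-DD-YYYY pattern mentioned above.

```python
import re

# Hypothetical schema: attribute names, required flags, and value controls.
SCHEMA = {
    "title": {"required": True, "pattern": None},
    "creator": {"required": True, "pattern": None},
    "date": {"required": False, "pattern": r"\d{2}-\d{2}-\d{4}"},  # MM-DD-YYYY
}


def validate(record: dict) -> list:
    """Return a list of problems; an empty list means the record is well-formed."""
    problems = []
    for name, rule in SCHEMA.items():
        value = record.get(name)
        if value is None:
            if rule["required"]:
                problems.append(f"missing required attribute: {name}")
            continue
        if rule["pattern"] and not re.fullmatch(rule["pattern"], value):
            problems.append(f"value of {name} does not match the control: {value!r}")
    for name in record:
        if name not in SCHEMA:
            problems.append(f"attribute not defined by the schema: {name}")
    return problems


print(validate({"title": "Traffic counts", "creator": "WSDOT", "date": "05-21-2021"}))
print(validate({"title": "Traffic counts", "date": "2021-05-21"}))
```

The first record passes; the second is reported as missing a required attribute and as having a date that breaks the value control. Note that the pattern only checks the shape of the date, not whether it is a real calendar date.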

Structured metadata is, typically, differentiated by its documentation role.

- Descriptive Metadata: Tells us about objects, their creation, and the context in which they were created (e.g., title, author, date).

- Technical Metadata: Tells us about the context of the data collection (e.g., the instrument, computer, algorithm, or some other tool that was used in the processing or collection of the data).

- Administrative Metadata: Tells us about the management of that data (e.g., rights statements, licenses, copyrights, institutions charged with preservation, etc.).

Unstructured metadata is meant to provide contextual information that is human readable. Unstructured metadata often takes the form of contextual information that records and makes clear how data were generated, how data were coded by creators, and relevant concerns that should be acknowledged in reusing these digital objects. Unstructured metadata includes things like codebooks, README files, or even an informal data dictionary.

A further important distinction about metadata is that it can be applied to both individual items, or collections, or groups of related items. This is known as the difference between item level or collection level metadata.

Metadata Standards

Metadata standards are based on broad community agreement about what is essential to record and communicate to stakeholders about a set of data. A metadata standard includes clear and unambiguous definitions of what attributes are, and what the accepted values are for any attribute. The total collection of attributes that make up a standard is often called a “schema” or “core elements”. For example, one of the most broadly used metadata standards for image resources is Exif, the Exchangeable Image File Format[1]. If you have ever taken a picture with your phone and later uploaded it to a computer, the metadata captured by your phone’s camera is based on Exif. This allows any device to see attributes such as the date, time, camera model, or geolocation of where an image was taken. Exif includes a mix of both descriptive and technical metadata attributes.

Dublin Core is another metadata standard that is used broadly for describing images, web resources, documents, and other multimedia, including data. The Dublin Core is made up of 15 core attributes that can be recorded and stored alongside any object; the standard itself treats each one as optional and repeatable, although an organization may choose to require some of them. Each attribute (or term) in Dublin Core[2] is defined by a standards-making body, the Dublin Core Metadata Initiative (DCMI), which provides guidance on how attributes are to be used in describing a resource. An example of the attributes of a metadata record following the Dublin Core standard can be found below:

| Attribute | Definition | Example |
| --- | --- | --- |
| Title | A name given to the resource, either supplied by the individual assigning metadata or from the object. | "A Pilot's Guide to Aircraft Insurance" |
| Creator | Entity responsible for making the resource. | "Duncan, P. A." |
| Subject | The topic of the resource, typically represented using keywords. | "Colonial medicine" |
| Description | An account of the resource. | "Illustrated guide to airport markings and lighting signals for airports with low visibility conditions." |
| Publisher | An entity responsible for making the resource available. | "The University of Texas Press" |
| Contributor | An entity responsible for making contributions to the resource (e.g. editor, transcriber, illustrator). | "Austin Citizen Photograph" |
| Coverage | The spatial or temporal topic of the resource. | "Austin, TX" |
| Date | A point or period of time associated with the resource. | "1998-02-16" |
| Type | The nature or genre of the resource. For a list of possible types, visit the DCMI Type Vocabulary. | "Image" |
| Format | The file format, physical medium, or dimensions of the resource. | "[128] p. : ill. ; 15 cm." |
| Rights | Information about rights held over the resource. | "This electronic resource is made available by the University of Texas Libraries solely for the purposes of research, teaching and private study." |
| Source | A related resource from which the described resource is derived. | "ZA 3075 Y69 2007" |
| Language | Language(s) of the resource. | "Spanish" |
| Relation | A related resource. For a list of possible relations, visit the Summary Refinement and Scheme Table. | "HasVersion 13th Edition" |
| Identifier | A unique reference to the resource. | "doi: 10.15781/T2251FN91" |

Table 3 Dublin Core Metadata Standard attributes[3]
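As a rough sketch of how a few of these attributes might be encoded in a machine-readable form, the example below writes four Dublin Core elements as XML using Python's standard library. The namespace URI is the one published for the Dublin Core element set; the wrapper element name `metadata` is an assumption, and the values are simply copied from the example column of Table 3 (where they describe different resources).

```python
import xml.etree.ElementTree as ET

DC_NS = "http://purl.org/dc/elements/1.1/"  # Dublin Core element set namespace
ET.register_namespace("dc", DC_NS)

# Values copied from the examples in Table 3, for illustration only.
record = {
    "title": "A Pilot's Guide to Aircraft Insurance",
    "creator": "Duncan, P. A.",
    "date": "1998-02-16",
    "language": "Spanish",
}

root = ET.Element("metadata")  # wrapper element name is an assumption
for attribute, value in record.items():
    element = ET.SubElement(root, f"{{{DC_NS}}}{attribute}")
    element.text = value

xml_text = ET.tostring(root, encoding="unicode")
print(xml_text)
```

Each attribute-value pair from the record becomes one `dc:`-prefixed element, which is the structured counterpart of a row in the table.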

There are thousands of metadata standards that have been developed to record descriptive, technical, and administrative information about data. Each of these standards differs based on the type of data that is being described, and the organizational context of data customers. Choosing or selecting the right metadata standard is typically a data governance issue rather than an individual choice. However, there are some general guidelines that can be helpful for selecting and applying a metadata standard where one does not yet exist:

- Purpose: Will the metadata be used to record descriptive, technical, or administrative information about data? (It could include all three.)

- Creation: At what point in the data lifecycle will metadata be created? This decision will also drive who is responsible for creating the metadata. If a data collector is also charged with creating metadata then as a data steward your role might be to validate or confirm that metadata standards are applied correctly (the next chapter provides guidance for checking metadata quality).

- Data types and role: Determining the type of data that is being collected can be the first and often most important aspect of selecting a metadata standard. Image, text, and numeric data each have different standards that might be relevant. Recording the roles and types of data in advance of choosing a standard will usually help narrow the decision.

- Consult a metadata catalog: Lists of metadata standards can be found across the web—these will help to narrow the selection of a particular standard to meet the needs of data types and roles.

One example of a metadata catalog is the Research Data Alliance’s Metadata Standards Catalog.[4]

Data Documentation

In addition to metadata there are a number of other forms of ‘documentation’ that can be valuable for stakeholders of data or a data collection. These include data dictionaries, readme files, and codebooks.

README files provide narrative explanation of what a dataset contains, how it was produced, and how it can or should be used. README files are simply a narrative for the data—they provide a way for data to be described informally for stakeholders. Below are some examples and resources for creating a README file for data documentation.

- A standard for README files (https://github.com/RichardLitt/standard-readme)

- Some advice on creating README files for data and data collections (https://data.research.cornell.edu/content/readme)

- A template for creating README files for data (https://drive.google.com/file/d/1OXQYDoGMB1xE2ocs98hS1cCTLLzK0ZvQ/view)

Data Dictionaries define the variables and values of a dataset (and constraints on the values of those variables). Most often this takes the form of a table—where data values are explained with a clear definition and the value that data should take (e.g., a number, text string, etc.). Data dictionaries typically contain four elements:

- Variable labels - this provides the name of the variable as it appears in the dataset that a client or stakeholder will download.

- Variable definitions - this explains what the variable is meant to record or measure. The definition should use plain language—informing the client what the data is meant to represent. Often this is hard to determine—so, if you are creating a new data dictionary you may have to guess what exactly the variable means.

- Variable value types - this can typically be a description such as date, a number (or integer), or a text string. The point of defining a variable value is to tell a potential user what type of data they should expect to see in a dataset.

- Allowed values - this could include the type of value (e.g., string, dateTime) or the control on that value (e.g., postal codes from the USPS). Value constraints—or allowed values—are extremely important to document because they help a user understand why 05-21-2021 is not just a random assortment of numbers, but in fact follows a standard convention for representing a date (MM-DD-YYYY).
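The four elements above can be sketched as a small data dictionary plus a check that a value follows its documented constraint. Everything in this example is hypothetical: the variable labels, definitions, and allowed values are invented for illustration and do not describe a real DOL dataset.

```python
from datetime import datetime

# Hypothetical data dictionary: one entry per variable, with the four elements.
DATA_DICTIONARY = [
    {"label": "issue_date", "definition": "Date the license was issued",
     "type": "date", "allowed": "MM-DD-YYYY"},
    {"label": "license_class", "definition": "Category of license",
     "type": "string", "allowed": ["A", "B", "C"]},
    {"label": "fee_paid", "definition": "Fee paid in US dollars",
     "type": "number", "allowed": "zero or greater"},
]


def check_value(entry: dict, value: str) -> bool:
    """Check one raw value against the type and allowed values of its entry."""
    if entry["type"] == "date":
        try:
            datetime.strptime(value, "%m-%d-%Y")  # MM-DD-YYYY convention
            return True
        except ValueError:
            return False
    if entry["type"] == "string":
        return value in entry["allowed"]
    if entry["type"] == "number":
        try:
            return float(value) >= 0
        except ValueError:
            return False
    return False


by_label = {entry["label"]: entry for entry in DATA_DICTIONARY}
print(check_value(by_label["issue_date"], "05-21-2021"))
print(check_value(by_label["issue_date"], "21-05-2021"))
print(check_value(by_label["license_class"], "D"))
```

The second date fails because 21 is not a valid month under the MM-DD-YYYY convention, which is exactly the kind of ambiguity that documenting allowed values is meant to remove.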

Data dictionaries can be a little overwhelming to think about initially, but there are a number of valuable resources to consult when getting started with this form of data documentation:

- Introduction to creating data dictionaries (https://help.osf.io/article/217-how-to-make-a-data-dictionary)

- Example of a simple data dictionary (https://missing-pieces.s3.amazonaws.com/missing_pieces_data_dict_11-20-2017.pdf)

Codebooks are more formal documentation that is often created for statistical or quantitative data. A codebook defines how data were coded, and how those codes were created in order to analyze or summarize a dataset. Codebooks are often very helpful if a dataset contains a number of non-standard data values or variables.

- Introduction to the concept of a codebook (https://www.icpsr.umich.edu/icpsrweb/content/shared/ICPSR/faqs/what-is-a-codebook.html)

- Example of a codebook that follows best practices described in the link above (https://www.icpsr.umich.edu/web/pages/instructors/setups/codebook/)

Data Management and Data Management Plans

Data management is simply defined as the active and ongoing stewardship of data throughout a lifecycle of use, including its reuse in unanticipated contexts. A lifecycle of data can include the planning for data collection, the collecting of data, the creation of documentation about data, the storage of data, the services that help clients find and use data, and the long-term preservation of data.

Data management is active—that is, it requires purposeful interactions with both data creators and data users, as well as stewardship of the data itself (cleaning, applying standards, updating data, etc.).

Simply, we can think of data in an organizational context as having three layers: The data itself, the documentation (which we discussed above), and its storage. We will discuss storage in depth in the final chapter of this book. For now, it’s good to have a working mental model of what data management applies to—all three layers of the organizational context in which data are collected, described, and stored.

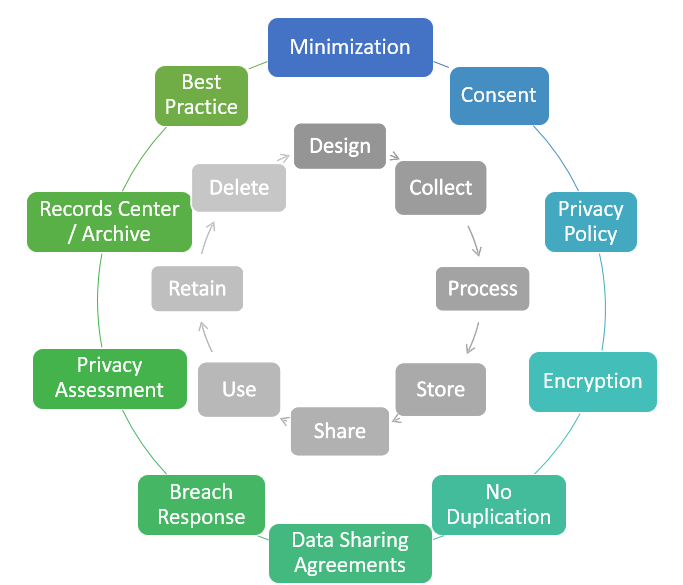

In the definition of data management, I referred to what might seem like a complex “lifecycle” of data. A lifecycle model is meant to be a conceptual model that spells out, broadly, the different stages that data might go through and the various people that will be involved in each stage. A lifecycle model doesn’t necessarily have a clear start or finishing point; the idea is that data might move through, or “cycle” through, each of these stages. Data stewards have an important role to play at each stage of the lifecycle, from the point of collection all the way through to the preservation or destruction of data. Below is a helpful depiction of the most basic stages of a data management lifecycle.

Figure 3 Data Lifecycle Management. Image “Data Management Lifecycle” by Will Saunders (of DOL), CC BY 4.0.

Data Management Plan

A data management plan (DMP) is a written document that describes how data will be managed at each stage of a data lifecycle. As a data steward serving internal DOL stakeholders this might include asking a client: “What data do you expect to acquire or generate during the course of a project?”, “How will you manage, describe, analyze, and store those data during the project?”, and “What mechanisms will you use at the end of your project to share and preserve your data?” DMPs are created by data producers in consultation with data stewards. Ideally a DMP is created before data collection begins, but oftentimes it is necessary to develop data management plans during or even at the conclusion of a project. Although an ideal data management plan documents each stage in the lifecycle above, it must include at least four elements:

- Data Types: What types of data are being created or collected, and how should they be preserved?

- Data Roles: Who might use the data, and how might those needs change over time?

- Data Documentation: What types of metadata standards are appropriate? What kinds of other documentation might also be useful?

- Data Quality: Should data be cleaned and / or the quality be checked before being stored?

There is a wealth of support for data management planning on the web:

- DMPTool provides interactive templates for creating standards compliant DMPs (https://dmptool.org/)

- Stanford University's Library has a very thorough overview of DMPs for research and public use (https://library.stanford.edu/research/data-management-services/data-management-plans)

- USGS guide on federal agency DMPs (https://www.usgs.gov/data-management/data-management-plans)

Data Interviews

Traditional to LIS is the idea of an information (or data) interview—that is, when people seek information what kinds of mental models do they use to structure a question, how do they translate that question into a query for information in a database, and what types of techniques are helpful for reformulating a query to actually answer their question (not just what the information system returns from a query)?

Behind all information interviews—whether it is between people or between people and an information system—are “mental models.” A mental model is a simple working assumption about how a domain should work. For example, we have a simple working model of a vending machine—we enter money, we select an item, and the machine delivers our selected item. This mental model breaks down if what we expect is not what is returned. If we put in a dollar, select ‘Diet Coke’, and receive a Sprite—something went wrong. Did we press the wrong button? Was the Diet Coke mislabeled? When our mental model doesn’t match reality, we often have to diagnose the problem to understand what went wrong.

Data interviews are not so different than a vending machine in terms of the basic steps used to retrieve an item (input and delivery), but our satisfaction with retrieved items is often much more complicated.

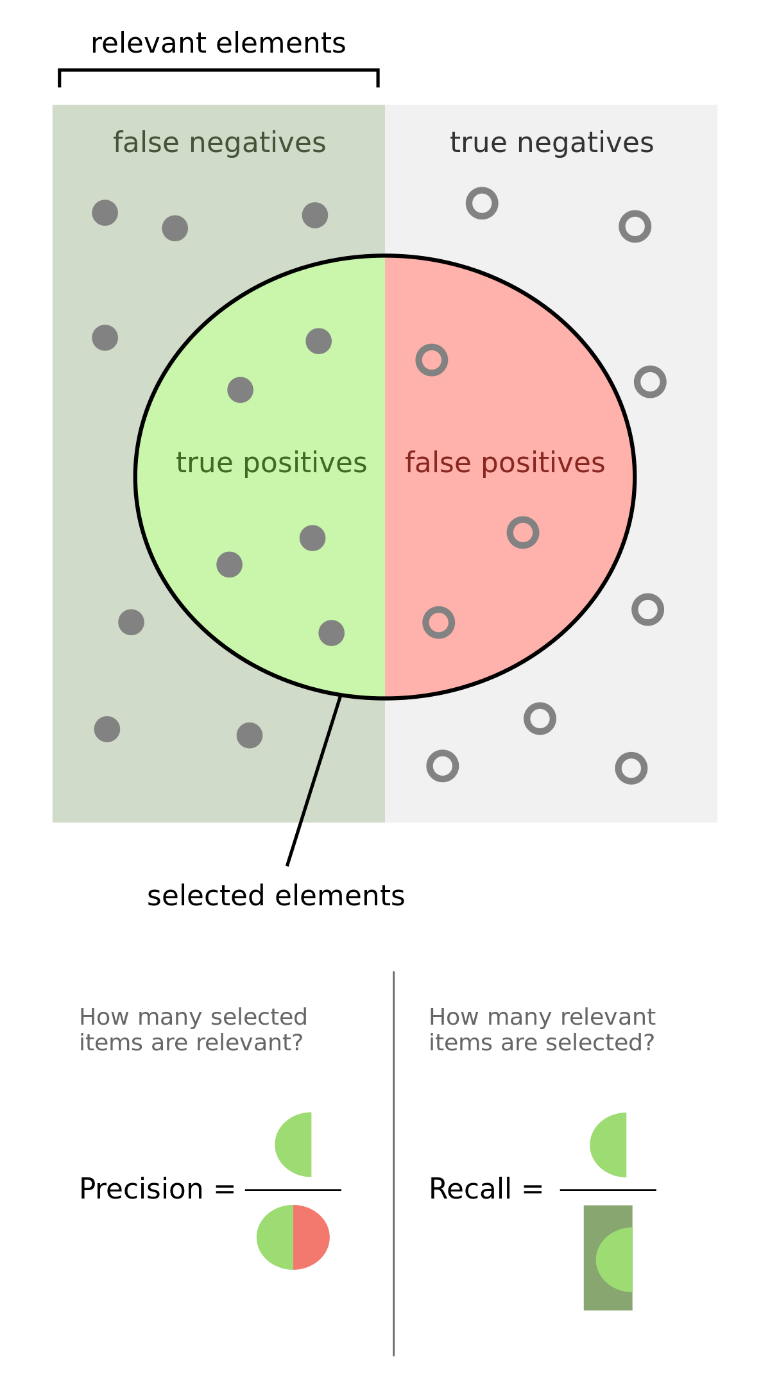

In data interviews—where a user is seeking a piece of structured information about a topic—the mental model we often use is related to information retrieval. We pose a query to an information system and expect to get results back that are relevant to that query. The relevance of a query might be judged either by recall or precision.

- Recall is about completeness: of all the information or data that a system holds relevant to our query, how much did we actually retrieve?

- Precision is about the accuracy of the returned results: of everything the information system gave back, how much is actually relevant and useful to our query? (See Figure 4 for a visual example.)

Figure 4 Representation of the values precision and recall in search query results. Image “Precision and Recall” by Walber, CC BY-SA 4.0.

Recall and precision are both useful for a data interview. Sometimes what we desire, especially in exploratory searching, is to cast a broad net and find all the information that might exist about a topic. In this case, recall will be a helpful mental model to judge relevancy.

If, however, we want to retrieve a particular type of information (sometimes called a ‘known item’ search) then precision will be a much better model to judge whether or not an information system retrieved the item we were seeking. Knowing in advance what kind of search is being conducted, and then building a search strategy with the correct mental model in place is the key to successful data interviews.
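In information retrieval these two measures are usually computed as ratios: precision is the share of returned items that are relevant, and recall is the share of relevant items that were returned. The short sketch below computes both for a single query. The dataset identifiers are hypothetical, and in a real data interview the set of "relevant" items is a judgment made with the customer rather than something known in advance.

```python
def precision_and_recall(returned: set, relevant: set) -> tuple:
    """Compute precision and recall for one query from sets of item identifiers."""
    hits = returned & relevant  # relevant items that the system actually returned
    precision = len(hits) / len(returned) if returned else 0.0
    recall = len(hits) / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical identifiers for datasets in a catalog.
relevant = {"d1", "d2", "d3", "d4"}
returned = {"d1", "d2", "d9"}

precision, recall = precision_and_recall(returned, relevant)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here two of the three returned datasets are relevant (precision of about 0.67), but only two of the four relevant datasets were found (recall of 0.50). An exploratory search would treat the low recall as the main problem; a known-item search would care more about the one irrelevant result.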

As a data steward, it will be rare that you encounter a user who has not already done some searching and come up empty. It is much more likely that a user will come to you having been frustrated by not finding an answer to their question, or the data they expect to already exist. In a library setting these types of interviews are often called a “reference interview” and helpfully the field has evolved some of the traditional techniques to be applicable to data users or customers. The existing resources on “data reference interviews” can range from very formal, such as a worksheet used for scientific data, to simpler and more straightforward models for general data users. All of these approaches share some basic things in common: helping a user understand the question they are trying to answer, asking questions about what resources might be accessible and what might be useful, and then following up with the user to ensure that they have a match between expectation and reality. Below is a breakdown of this process into just four simple steps to follow during a data interview with a customer:

The first two steps in a data interview should be identifying the type of question being asked, and the relevancy of the expected results:

What question is the user trying to answer? (In other words, why does the customer want data?)

- Exploratory - Locating what resources might be available and what might be useful for a project (for example).

- Known Item - There is a clear and distinguishable right answer, and the data likely exist somewhere in the institution. The customer is simply trying to find the right data to answer their question.

What mental model will be used to judge the relevance of data? (In other words, what model will the user apply to the data they receive?)

- Recall - Most likely an exploratory search is interested in finding everything that might be relevant to their search—so, recall is almost always the mental model to have in mind when guiding exploratory questions.

- Precision - For a customer that knows that a resource exists but cannot locate it.

When interacting with a customer executing these two steps will help clarify what data is most relevant, and how that data’s relevance will be judged by the user. The next two steps are focused on locating data and ensuring that the data meets the customer’s needs. It's helpful to think of these steps as being about a data query and evaluation.

Query: What data sources are available and what is the optimal query for those sources? (In other words, what are the search terms and facets for browsing that will likely yield a relevant dataset?)

- A query or search strategy will always require iteration to learn and experiment with a search interface to retrieve relevant data. This is often helpful to do, initially, with a customer. For example, going to a database, looking at the available holdings and then executing some searches alongside the user to make sure the types of results match their expectations.

- Once the customer understands how to best formulate searches—it is often best to let them continue experimenting and trying to find the best data source.

Evaluation: After getting a customer on the right path to finding data, set up a time to check in and make sure they found what they were looking for, and if not, what the next steps might be in their search process. Next steps might include designing a project to collect new data, filing a records request to get information that might not be publicly available, or even directly contacting other people in the organization to help with their search.

Throughout this process it can be helpful to create a log of the data interview. This could simply record the time, the question being asked, and the search strategy you helped devise. The log plays two important roles:

- Check-in: The log can serve as an external reminder about when to re-contact a user to help evaluate their search process.

- Record of searches: The log can also help to document for yourself and other data stewards the kinds of searches that customers are executing. Over time this log will be useful in understanding changes that are necessary to a database, to data description standards, or even coming up with documentation or guidance that can answer repeated questions from customers.

A template for a basic data log might look like this simple spreadsheet.
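The log fields described above (the time, the question being asked, the search strategy, and a reminder to check in) can also be sketched as a small script that writes rows in spreadsheet-friendly CSV form. The column names, the 14-day check-in interval, and the sample entry are all assumptions made for illustration.

```python
import csv
import io
from datetime import date, timedelta

# Column names are assumptions based on the fields the text suggests recording.
FIELDS = ["date", "customer_question", "search_type", "search_strategy", "check_in_date"]


def log_entry(question: str, search_type: str, strategy: str,
              interview_date: date, check_in_days: int = 14) -> dict:
    """Build one log row; the 14-day check-in default is an arbitrary choice."""
    return {
        "date": interview_date.isoformat(),
        "customer_question": question,
        "search_type": search_type,  # "exploratory" or "known item"
        "search_strategy": strategy,
        "check_in_date": (interview_date + timedelta(days=check_in_days)).isoformat(),
    }


entry = log_entry(
    question="How many antique vehicle transfers occurred in 2021?",
    search_type="known item",
    strategy="Searched the open data portal for vehicle registration datasets",
    interview_date=date(2022, 3, 1),
)

# An in-memory buffer keeps the sketch self-contained; a real log would be a file.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
writer.writerow(entry)
log_text = buffer.getvalue()
print(log_text)
```

Recording the search type alongside the question makes it easier to see, over time, whether customers mostly arrive with exploratory or known-item needs.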

Data Licensing and Copyright

Most jurisdictions throughout the world have some provision for intellectual property rights that govern how and under what conditions information objects can be shared and reused. In the United States, most data that are considered factual information cannot be subject to a copyright claim. For example, I could not claim that information about a highway is my property and that anyone who references or uses information about highways is subject to legal action based on copyright infringement (this was established in a famous case, Feist Publications v. Rural Telephone Service Company[5]). However, when data is arranged or organized in a specific way (e.g., a database) then data collectors do have some copyright protection for the intellectual effort that went into arranging and organizing the data. The copyright protection afforded to database creators is limited and varies (slightly) by state and municipality in the US. Regardless of whether data can (or should) be subject to copyright protections, most federal, state, and municipal agencies are subject to open government or public records legislation that requires transparency and access to government information (in Washington this is codified under the Washington Public Records Act[6]). For data, and particularly what is deemed to be non-proprietary and non-confidential data, most government agencies have been motivated to make structured information “as open as possible and as closed as is necessary”. Washington state has long been a leader in open government, and its longstanding open data program has been successfully organized around principles of transparency and accountability.

Regardless of the success of open government, in order to truly achieve the goals of an open data program, data needs to be specifically licensed for use and redistribution. When data are published to an openly accessible location on the web, a license acts as a declaration of, or reference to, an existing legal document that specifies how data should be accessed and used, and any limitations on redistribution. At the federal level, open data license requirements are described in the Foundations for Evidence-Based Policymaking Act of 2018[7]. These requirements are interpreted as follows:

Agencies must apply open licenses, in consultation with the best practices found in Project Open Data, to information as it is collected or created so that if data are made public there are no restrictions on copying, publishing, distributing, transmitting, adapting, or otherwise using the information for non-commercial or for commercial purposes.

Common licenses used for open data include the following:

- Open Data Commons Attribution License (ODC-By) v1.0 (https://opendatacommons.org/licenses/by/1-0/)

- Open Data Commons Public Domain Dedication and License (PDDL) (https://opendatacommons.org/licenses/pddl/1-0/)

- Creative Commons Zero Public Domain Dedication (CC0) (https://creativecommons.org/publicdomain/zero/1.0/)

- Creative Commons Attribution (CC BY) (https://creativecommons.org/licenses/by/4.0/)

- Creative Commons Attribution-ShareAlike (CC BY-SA) (https://creativecommons.org/licenses/by-sa/4.0/)

- GNU Free Documentation License (http://www.gnu.org/licenses/fdl-1.3.en.html)

All that is required for a license to be applied to data is that there is a clear and unambiguous label that states which license and version is applicable to the data (or collection of data) and where possible provides a link to the text explaining limitations implied. Despite the importance of and simplicity in licensing open data these conventions are rarely followed.
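A clear license label can be as simple as three pieces of information stored with the dataset's metadata: the license name, its version, and a link to its text. The sketch below shows one possible shape for such a label and a check for whether it is complete. The field names and the dataset title are hypothetical; the license name, version, and URL come from the list of common licenses above.

```python
import json

# Hypothetical dataset description; the field names are assumptions.
dataset_metadata = {
    "title": "Example dataset",
    "license": {
        "name": "Creative Commons Attribution",
        "version": "4.0",
        "url": "https://creativecommons.org/licenses/by/4.0/",
    },
}


def has_clear_license(metadata: dict) -> bool:
    """True when the label names a license, a version, and a link to its text."""
    license_info = metadata.get("license") or {}
    return all(license_info.get(key) for key in ("name", "version", "url"))


print(json.dumps(dataset_metadata, indent=2))
print(has_clear_license(dataset_metadata))
print(has_clear_license({"title": "Unlabeled dataset"}))
```

A check like this could be run across a portal's catalog to list the datasets that still need a license label.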

Examples of licenses (and lack of licenses) in the data.wa.gov portal

Public Domain license

- Healthcare Provider Credential Data (https://data.wa.gov/Health/Health-Care-Provider-Credential-Data/qxh8-f4bd)

No License

- WA Tax Exemptions - Potential Eligibility by Make/Model Excluding Vehicle Price Criteria (https://data.wa.gov/Transportation/WA-Tax-Exemptions-Potential-Eligibility-by-Make-Mo/aug9-4a7g)

- Vehicle Battery Registration (https://data.wa.gov/Natural-Resources-Environment/Vehicle-Battery-Registration/we9k-a58y)

Reproducibility and Replication through Open Data

Open data is most often lauded as a democratic principle that enables government transparency and accountability. While simply publishing and licensing data so that it is openly accessible and reusable guarantees some amount of transparency there is, almost always, a significant gap between data being accessible and data being meaningfully reusable. This gap—between access and meaningful use—is one of the significant challenges of data stewardship.

When data are used for analysis or policymaking (i.e., they are transformed from raw inputs to some data role) there is an assumption that by having access and license to reuse data one could reasonably arrive at the same conclusions. This is, broadly speaking, an assumption that open data facilitates reproducibility, replication, or verification. And while these terms are often used interchangeably, they have slightly different meanings.

“Reproducibility” refers to instances in which the original data and computer codes are used to regenerate the results. Reproducibility in this sense should be a guarantee that if data and analytic steps are made accessible then anyone with reasonable skills could arrive at the same conclusions as the original analyst. If a customer wants to verify a report by DOL that the number of Antique Automotive transfers in 2021 was greater than 1000, then reproducibility of this claim should be as easy as pointing to a DOL Vehicle Registration dataset.[8] However, reproducibility is often more complicated than simply pointing at numbers in a dataset. Reproducibility often requires transparency in the form of openly accessible and well documented data, clear and unambiguous analytic steps, and any software or tools used to transform a raw number into a textual claim.
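One way to picture what "well documented data and clear analytic steps" means in practice is a script that records a fingerprint of the exact input data and expresses the analysis as a function that anyone can re-run. The sketch below is only an illustration: the records are made up (they are not DOL data), and the column names and the counting rule are assumptions.

```python
import csv
import hashlib
import io

# Made-up records for illustration only; this is not DOL data.
RAW_CSV = """transaction_type,vehicle_class,year
transfer,antique,2021
transfer,passenger,2021
original,antique,2021
transfer,antique,2020
transfer,antique,2021
"""


def fingerprint(text: str) -> str:
    """Hash the exact input so others can confirm they hold the same data."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def count_antique_transfers(text: str, year: str) -> int:
    """The documented analytic step: filter rows, then count them."""
    rows = csv.DictReader(io.StringIO(text))
    return sum(
        1 for row in rows
        if row["transaction_type"] == "transfer"
        and row["vehicle_class"] == "antique"
        and row["year"] == year
    )


first_run = (fingerprint(RAW_CSV), count_antique_transfers(RAW_CSV, "2021"))
second_run = (fingerprint(RAW_CSV), count_antique_transfers(RAW_CSV, "2021"))
print(first_run == second_run)
print(first_run[1])
```

If two people hold data with the same fingerprint and run the same function, they should regenerate the same number; if the fingerprints differ, the disagreement can be traced to the data rather than to the analysis.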

“Replicability” refers to instances in which an individual collects new data to arrive at the same findings as a previous study or report. Replicability implies that if one were to use a different method of data collection, the result or claim of an analysis should still hold true (regardless of the data that are used). For example, if a report claims that over 60% of all highway traffic in the state of Washington is by commercial vehicles, then regardless of which data we use we should be able to measure the share of vehicles on the road that are commercially licensed, and that share should be approximately 60% of all vehicles.[9]

The differences between replication and reproducibility are subtle but important. Reproducibility implies that a specific result can be regenerated. Replicability implies that a broad finding or claim should have veracity—that is, it should be able to be “rediscovered” or hold true if the same type of data are collected and analyzed a second time.

A simple way that I think about this is through cooking:

- If I follow a recipe for making chocolate chip cookies, then I should be able to use the same ingredients and the same oven settings to create multiple sheets of the same exact cookie. That is, I can reproduce a cookie many times.

- If I attend a holiday party, taste an excellent chocolate chip cookie, and ask my friend for the recipe–then (using similar ingredients and similar oven settings) I should be able to recreate the original cookie. That is, I should be able to replicate (in proximate fashion) the original cookie that I tasted.

Reproducibility and replication are often goals of stakeholders or customers that are served by government agencies. When a report or analysis is made public, there is an assumption that we can either reproduce the exact findings or, in the future, replicate the claims using new data. Many times, through a reference interview, it will become clear that the customer's goal is to verify something they believe to be true, or that they are skeptical about a result holding up over time. Determining first whether the customer's goal is to reproduce a finding or to replicate a previous study can often guide the resources that are suggested and the steps taken to serve the customer effectively.

Further Reading

- National Conference of State Legislatures. (2022). State Open Data Laws and Policies. https://www.ncsl.org/research/telecommunications-and-information-technology/state-open-data-laws-and-policies.aspx

- Federal Enterprise Data Resources. (2022). Open Licenses. data.gov. https://resources.data.gov/open-licenses/

- Data.world. (2022). Common Dataset License Types. Data.world. https://data.world/license-help

- Creative Commons. (2022). Open Data. Creative Commons. https://creativecommons.org/about/program-areas/open-data/

- Open Knowledge Foundation. (2022). Guide to Open Data Licensing. Open Knowledge Foundation. https://opendefinition.org/guide/data/

On Metadata

- Data Management. (2001). Metadata Creation. United States Geological Survey (USGS). https://www.usgs.gov/data-management/metadata-creation

- Horsburgh, J. et al. (2022). Identify and use relevant metadata standards. DataONE. https://old.dataone.org/best-practices/identify-and-use-relevant-metadata-standards

- Federal Enterprise Data Resources. (2014). DCAT-US Schema v1.1 (Project Open Data Metadata Schema). Data.gov. https://resources.data.gov/resources/dcat-us/

- W3C. (2020). Data Catalog Vocabulary (DCAT) - Version 2. W3C. https://www.w3.org/TR/vocab-dcat/

On Curating / Preparing Data for Reproducibility:

On Reproducible Research and Replicable Results (using open science principles)

- Psomopoulos, F. (2017). Open Science Tools, Data & Technologies for Efficient Ecological & Evolutionary Research: Transparent, Reproducible and Open Research. https://reproducible-analysis-workshop.readthedocs.io/en/latest/2.Transparent-Open-Research.html

- German National Library of Science and Technology. (2019). Reproducible Research and Data Analysis. Open Science Training Handbook. https://open-science-training-handbook.gitbook.io/book/open-science-basics/reproducible-research-and-data-analysis

- The Turing Way Community. (2021). Guide for Reproducible Research. https://the-turing-way.netlify.app/reproducible-research/reproducible-research.html

[1] Exchangeable image file format (Exif). (2022). Wikipedia: Exif. https://en.wikipedia.org/wiki/Exif

[2] Dublin Core Metadata Initiative (DCMI). (2020). DCMI Metadata Terms. https://www.dublincore.org/specifications/dublin-core/dcmi-terms/

[3] Ibid.

[4] Research Data Alliance. (2022). Metadata Standards Catalog. https://rd-alliance.github.io/metadata-directory/

[5] Feist Publications, Inc., Petitioner v. Rural Telephone Service Company, Inc. 499 U.S. 340. (1991). https://www.law.cornell.edu/supremecourt/text/499/340

[6] Washington State Legislature. Chapter 42.56 Public Records Act. https://app.leg.wa.gov/RCW/default.aspx?cite=42.56

[7] Foundations for Evidence-Based Policymaking Act. HR 4174. (2018). https://www.congress.gov/bill/115th-congress/house-bill/4174

[8] Department of Licensing. (May 2021). Vehicle and Vessel Fee Distribution Reports. https://fortress.wa.gov/dol/vsd/vsdFeeDistribution/DisplayReport.aspx?rpt=2021M05-57.csv&countBit=1

[9] Barba, L.A. (2018). Terminologies for Reproducible Research. arXiv preprint. arXiv, 1802.03311.

Data Stewardship - Applications

In this chapter we will discuss applications of data stewardship concepts. This will extend concepts covered in previous sections (e.g., data cleaning) and will focus on practical ways to implement these concepts at DOL. The goal of this chapter is to understand how to apply existing best practices in data stewardship and, where possible, to point to resources for further learning. The topics we will cover include:

- Data Quality

- Data Cleaning

- AI and Machine Learning

- Data Visualization

- Databases

Data & Metadata Quality

Data Quality

In the 'Fundamentals of Data Stewardship' section we defined data quality, following the International Organization for Standardization (ISO)[1], as “…the degree to which a set of characteristics of data fulfills stated requirements.” An ISO standard is developed by experts in consultation with various stakeholders to agree upon a broad definition that can be applied in any setting. ISO standards can be a great starting point for understanding a broad definition or authoritative way to describe a concept like data quality. Additional work has gone into defining internal and external indicators of data quality. These include the following:

Internal Indicators

- Validity - Data should clearly and adequately represent the intended result.

- Reliability - Data should accurately represent what it purports.

- Timeliness - Data should be recorded at a frequency, and with a regularity, sufficient for it to be reliable.

- Precision - Data should be free of errors.

External Indicators

- Integrity - Data should be verified for being accurate and should have safeguards in place to control data editing so that accuracy can be guaranteed over time.

- Documentation - Information about how data were collected, analyzed, and the context of appropriate use should be accessible alongside data itself.

- Format - Data should be stored in a format that is regularly checked for preservation.
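One possible way to turn a couple of these indicators into automated checks is sketched below. It covers only validity (here read narrowly as "the field holds an allowed value") and timeliness (here read as "no gap between recordings exceeds an expected interval"). The field names, allowed values, and interval are hypothetical, and an organization's own definitions of each indicator would take precedence.

```python
from datetime import date

# Hypothetical records; field names and allowed values are assumptions.
RECORDS = [
    {"plate_type": "Antique", "recorded_on": date(2021, 5, 1)},
    {"plate_type": "Passenger", "recorded_on": date(2021, 6, 1)},
    {"plate_type": "", "recorded_on": date(2021, 9, 1)},
]
ALLOWED_PLATE_TYPES = {"Antique", "Passenger", "Commercial"}

def validity_rate(records, field, allowed):
    """Share of records whose `field` holds one of the allowed values."""
    valid = sum(1 for r in records if r.get(field) in allowed)
    return valid / len(records)

def max_gap_days(records, field):
    """Longest gap, in days, between consecutive recording dates."""
    days = sorted(r[field] for r in records)
    return max((b - a).days for a, b in zip(days, days[1:]))

def is_timely(records, field, expected_interval_days):
    """Timeliness check: no gap exceeds the expected recording interval."""
    return max_gap_days(records, field) <= expected_interval_days

print(validity_rate(RECORDS, "plate_type", ALLOWED_PLATE_TYPES))
print(max_gap_days(RECORDS, "recorded_on"),
      is_timely(RECORDS, "recorded_on", 31))
```

Indicators such as documentation and integrity are harder to score mechanically and usually call for human review.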

Judging data quality within a particular institution is often a matter of adjusting or refining these indicators. For example, the United States Agency for International Development (USAID) has developed a checklist for conducting data quality assessments. Each time a data steward recommends a data source for use, a data quality assessment is conducted to guarantee that the data are “fit for purpose”; that is, the recommended data meet the strict quality standards that are expected of USAID research and policy making.

Some examples of the USAID approach to data quality:

- Data Quality Assessment Checklist and Recommended Procedures (https://www.usaid.gov/sites/default/files/documents/1865/Data_Quality_20Assessment_Checklist.pdf)

- Data Quality Assessment Checklist—Additional Help (https://www.usaid.gov/sites/default/files/documents/1868/597sad.pdf)

As a data steward, understanding and even recommending data quality indicators will be largely dependent upon the rules and regulations of a data governance program. Thus, a good place to start might be finding out where, or even if, data quality is defined by your organization, and where, if possible, there are examples of how master data have been transformed to meet these standards.

Metadata Quality

Metadata quality is, as it sounds, extremely similar in spirit to data quality, but includes some refined indicators that can be useful to documentation that provides a description, rules of access, or rights for data use. There is no ISO or even broadly agreed upon definition of metadata quality (in part because many organizations think of metadata as a sub-class of data). However, there are some broadly agreed upon indicators that can be helpful in evaluating the quality of structured and unstructured documentation that plays the role of metadata. These include:

- Completeness - All necessary descriptive, technical, or administrative attributes are included in a metadata record.

- Accuracy - Information is correct both semantically and syntactically, meaning that the proper standards for representing information are identified and used (e.g., when representing a ‘Date’, the ISO 8601 standard is followed, such as `YYYY-MM-DD` or `2000-01-01` to represent `January 01, 2000`).

- Accessibility - Metadata can be accessed and read by both humans and machines. This is a critical indicator for many business applications because metadata will often play multiple roles—machine-readable metadata will drive data discovery systems, and human-readable metadata will be used to evaluate and judge relevancy. Both forms of metadata govern who can and should access data.

- Conformance to expectations - Values (that is, what the attribute of a metadata record describes) adhere to the expectations of your defined user communities (both internal and external). A good example of this is an attribute like “location”—for some metadata records location might be a plain language description like “Whatcom County” while other records require a more specific locale like the latitude and longitude of the county (e.g., 48.8787° N, 121.9719° W).

- Consistency - Semantic and structural values and attributes are represented in a consistent manner across records. Values remain consistent within a record or type of records, and attributes are defined clearly with an existing schema. As we talked about in previous chapters, having a schema that can be clearly identified and interpreted is the key to metadata consistency across an organization.

- Timeliness - When the resource changes, the metadata is updated accordingly. When additional metadata becomes available or when metadata standards change, the metadata associated with the resource is also consistently updated.

- Provenance - Information about the source of the metadata or data is recorded and captured in the record, and metadata transformations or changes can be traced back to the original record.
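Two of these indicators, completeness and syntactic accuracy, lend themselves to simple automated checks. The sketch below is illustrative only: the list of required attributes and the sample record are hypothetical, and a real metadata schema would define its own. The date check relies on Python's standard `date.fromisoformat`, which accepts ISO 8601 dates such as `2000-01-01`.

```python
from datetime import date

# Hypothetical required attributes; a real schema would define its own.
REQUIRED_FIELDS = ("title", "description", "modified", "publisher")

def missing_fields(record: dict) -> list:
    """Completeness: list required attributes that are absent or empty."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = record.get(name)
        if value is None or not str(value).strip():
            missing.append(name)
    return missing

def is_iso_date(value) -> bool:
    """Accuracy (syntactic): the value parses as an ISO 8601 date."""
    try:
        date.fromisoformat(value)
    except (TypeError, ValueError):
        return False
    return True

# Hypothetical metadata record with two quality problems.
record = {
    "title": "Vehicle Registrations",
    "description": "",
    "modified": "01-01-2000",
    "publisher": "DOL",
}

print(missing_fields(record), is_iso_date(record["modified"]))
```

Indicators such as conformance to expectations depend on the user community and generally cannot be reduced to a check like this.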

Like data quality, metadata quality has a number of specific institutional applications to ensure that this documentation meets the expectations of data customers.

Data and Metadata Quality in Practice

Applying quality standards can happen informally and formally. Informally, the criteria described above can be used heuristically to guide the creation or editing of data to ensure that it meets a customer’s expectations. It is my experience in data curation that informal quality assessments are part of daily work in identifying data for customers and helping stakeholders to assess whether data are being collected with the right level of sophistication or accuracy. Often this informal process of metadata quality assessment will reveal shortcomings or inaccuracies of quality that should be fixed by a data owner. The repair or improvement of data is a matter of contacting the owner and helping them understand where there is a gap between best and current practices.

More formally, data quality assessments can be conducted as part of an inventory process that attempts to systematically evaluate the readiness of data to meet customer needs. A data inventory, like all of data stewardship, can vary based on the needs of an organization and is oftentimes a necessary exercise in establishing data governance.

The general steps to performing a data inventory (also called a data audit) are:

- Establishing an oversight committee.

- Determining the scope and plan of the inventory.

- Choosing which indicators of data or metadata quality will be assessed (and often refining the indicator definitions to meet a particular assessment need).

- Cataloging data assets in accordance with the inventory plan and identified data quality indicators.

- Documenting the inventory for a data governance committee which can then prioritize or guide next steps in improving data quality.
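The cataloging and documenting steps above can be sketched as a small data structure plus a summary that a governance committee could use to prioritize follow-up. Everything in this example is hypothetical: the asset names, owners, indicator scores (on an assumed 0.0 to 1.0 scale), and the 0.8 threshold are placeholders rather than recommended values.

```python
from dataclasses import dataclass, field

@dataclass
class InventoryEntry:
    """One cataloged data asset and its score (0.0 to 1.0) per indicator."""
    name: str
    owner: str
    scores: dict = field(default_factory=dict)

    def weakest_indicator(self):
        """Return the (indicator, score) pair with the lowest score.

        Assumes at least one indicator has been scored.
        """
        return min(self.scores.items(), key=lambda item: item[1])

def prioritize(entries, threshold=0.8):
    """List assets with any indicator below the threshold, lowest first."""
    flagged = [e for e in entries if e.weakest_indicator()[1] < threshold]
    return sorted(flagged, key=lambda e: e.weakest_indicator()[1])

# Hypothetical assets and scores.
INVENTORY = [
    InventoryEntry("vehicle_registrations", "Vehicle Services",
                   {"completeness": 0.95, "timeliness": 0.90}),
    InventoryEntry("fee_distribution", "Finance",
                   {"completeness": 0.70, "timeliness": 0.85}),
    InventoryEntry("license_renewals", "Driver Services",
                   {"completeness": 0.88, "timeliness": 0.55}),
]

for entry in prioritize(INVENTORY):
    print(entry.name, entry.owner, *entry.weakest_indicator())
```

Recording the owner with each entry reflects the point made earlier that repairs are usually made by contacting the data owner.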

Recommended resources for developing and executing a data inventory:

- GovEx, a non-profit that works with government agencies at the state and municipal level, has established an excellent guide to designing and executing data inventories. This guide includes numerous examples of checklists and data quality indicators used by agencies throughout the US. (https://labs.centerforgov.org/data-governance/data-inventory/)

- The US Department of Transportation has developed a sophisticated model for executing data inventories (https://www.transportation.gov/sites/dot.gov/files/docs/DOT%20-%20OpenData%20-%20Data%20Inventory%20Approach.pdf)

Data inventories are a part of good data governance, but they are also increasingly a component of data privacy legislation. For example, the General Data Protection Regulation (GDPR) established by the EU includes an article (30) that requires any entity collecting personally identifiable information (PII) to conduct an inventory of data security and quality.[2]

Similarly, the state of Washington has flirted with the idea of enacting data privacy legislation that is similar to the California Privacy Rights Act (CPRA). Understanding the requirements of a data inventory in these legislative contexts can often lead to more useful and meaningful data quality indicators.